10 Tricks to Slash Your AI API Bill by 80%

Most teams overpay for AI by 3-5x. Here are 10 proven strategies to reduce AI API costs — from smart model routing to prompt caching — with real pricing math and code examples.

What if you're spending 4x more on AI API calls than you actually need to?

That's not an exaggeration. Most teams treat API pricing as a fixed cost — pick a model, send requests, pay the bill. But the gap between a naive implementation and an optimized one is staggering. You can reduce AI API costs by 50-80% without sacrificing output quality, and the strategies aren't complicated.

The fastest way to cut your AI API bill is to route simple tasks to cheaper models, cache repeated requests, and trim your prompts. These three moves alone can eliminate half your spending. This guide covers those and seven more optimization strategies, ordered from quick wins to deeper engineering work.

Model routing is the single biggest cost lever you have. Think of it like staffing: you don't assign a principal engineer to rename a variable.

As of April 5, 2026, the pricing spread across top-tier models is dramatic:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| Mistral Large 3 | $0.50 | $1.50 |
| Gemini 2.5 Pro | $1.25 | $10.00 |
| GPT-4o | $2.50 | $10.00 |
| Claude Sonnet 4.6 | $3.00 | $15.00 |
| Claude Opus 4.6 | $5.00 | $25.00 |

Output tokens on Claude Opus 4.6 cost over 16x what Mistral Large 3 charges. And for simple tasks like text classification, summarization, or data extraction, the cheaper models handle the job just fine.

The cheapest API call is the one you route to the right model. Use Opus for hard reasoning. Use Mistral for everything else.

How to implement: Build a classifier that sorts incoming requests by difficulty. Simple tasks (classification, extraction, reformatting) go to budget models. Complex tasks (multi-step reasoning, code generation, detailed analysis) go to premium models.

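    def route_request(task_type: str, complexity: str) -> str:
        """Pick a model ID from the request's complexity label."""
        # task_type is accepted for future per-task rules but is not used yet.
        if complexity == "simple":
            return "mistral-large-latest"  # budget tier
        elif complexity == "medium":
            return "claude-sonnet-4-6"  # mid tier
        else:
            return "claude-opus-4-6"  # premium tier for hard reasoning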

For a workload that's 70% simple tasks, routing alone can cut your bill in half.
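To sanity-check that claim, here is a rough sketch of the blended cost per request. The prices come from the table above; the per-request token counts (1,000 input, 500 output) and the all-Opus baseline are assumptions chosen only for illustration.

```python
# Prices in USD per 1M tokens (input, output), taken from the table above.
PRICES = {
    "mistral-large-3": (0.50, 1.50),
    "claude-opus-4.6": (5.00, 25.00),
}


def request_cost(model: str, tokens_in: int, tokens_out: int) -> float:
    """Cost in USD of one request at list price."""
    price_in, price_out = PRICES[model]
    return (tokens_in * price_in + tokens_out * price_out) / 1_000_000


def blended_cost(simple_share: float, tokens_in: int, tokens_out: int) -> float:
    """Average cost per request when simple tasks go to the budget model."""
    cheap = request_cost("mistral-large-3", tokens_in, tokens_out)
    premium = request_cost("claude-opus-4.6", tokens_in, tokens_out)
    return simple_share * cheap + (1 - simple_share) * premium


# Assumed workload: 70% simple tasks, 1,000 input and 500 output tokens each.
baseline = request_cost("claude-opus-4.6", 1_000, 500)
routed = blended_cost(0.70, 1_000, 500)
savings = 1 - routed / baseline
print(f"baseline ${baseline:.4f}, routed ${routed:.4f}, savings {savings:.0%}")
```

Under these assumptions the saving is about 65%. A baseline that already uses a mid-tier model would save less, so plug in your own traffic mix before quoting a number.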

API caching is your second-biggest win — and one of the simplest ways to reduce AI API costs. Two flavors matter here:

Response caching: If the same prompt produces the same output (temperature=0), cache it. A Redis layer in front of your API calls can eliminate 30-60% of requests in most applications. And the implementation is dead simple — hash the prompt, check the cache, return or call.
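The hash, check, return-or-call flow might look like the sketch below. It uses an in-memory dictionary in place of Redis so it runs offline, and `fake_model` is a hypothetical stand-in for your real API call; both are assumptions, not part of any provider SDK.

```python
import hashlib
import json
from typing import Callable, Dict


class ResponseCache:
    """Exact-match cache keyed on a hash of model + prompt."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        self._call_model = call_model     # hypothetical function that hits the API
        self._store: Dict[str, str] = {}  # swap for Redis in production
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Include the model in the key so different models never share entries.
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._call_model(model, prompt)
        self._store[key] = result
        return result


def fake_model(model: str, prompt: str) -> str:
    """Stand-in for a real API call so the sketch runs offline."""
    return f"[{model}] answer to: {prompt}"


cache = ResponseCache(fake_model)
cache.complete("mistral-large-latest", "Classify: great product!")
cache.complete("mistral-large-latest", "Classify: great product!")
print(cache.hits, cache.misses)
```

The second call is served from the cache, so only one request would reach the provider. A production version would also want an expiry policy so stale answers age out.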

Prompt caching: Both Anthropic and OpenAI now offer built-in prompt caching. If your requests share a long system prompt or common context prefix, cached tokens cost up to 90% less than fresh tokens.

For Anthropic's API specifically, cached input tokens cost just 10% of the regular price. So if your system prompt is 4,000 tokens and you're making 10,000 requests per day, you're saving on 40 million tokens of redundant processing daily.

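    # Anthropic prompt caching example
    import anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

    response = client.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=1024,  # required by the Messages API
        system=[{
            "type": "text",
            "text": long_system_prompt,  # the long shared prefix, defined elsewhere
            "cache_control": {"type": "ephemeral"},  # mark this block as cacheable
        }],
        messages=[{"role": "user", "content": user_query}],
    )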
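To put a dollar figure on the 4,000-token example, the sketch below prices the shared prefix with and without caching. It assumes the Claude Sonnet 4.6 input rate from the pricing table ($3.00 per 1M tokens), a 100% cache hit rate, and no extra charge for writing to the cache; real bills will differ on all three, so treat the result as an upper bound on savings.

```python
def daily_prefix_cost(
    prefix_tokens: int,
    requests_per_day: int,
    price_per_million: float,
    cache_hit_rate: float = 0.0,
    cached_price_ratio: float = 0.10,
) -> float:
    """USD per day spent on the shared prompt prefix."""
    total_tokens = prefix_tokens * requests_per_day
    full_price = total_tokens / 1_000_000 * price_per_million
    # Hits pay the discounted rate; misses pay the full rate.
    blended_ratio = cache_hit_rate * cached_price_ratio + (1 - cache_hit_rate)
    return full_price * blended_ratio


uncached = daily_prefix_cost(4_000, 10_000, 3.00)
cached = daily_prefix_cost(4_000, 10_000, 3.00, cache_hit_rate=1.0)
print(f"uncached ${uncached:.2f}/day, cached ${cached:.2f}/day")
```

With these inputs the prefix drops from roughly $120 to roughly $12 per day. Lower `cache_hit_rate` to model cache expiry between bursts of traffic.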

Every token costs money. A bloated prompt with redundant instructions, excessive examples, or unnecessary context is literally burning cash with each API call.

Here's what actually moves the needle:

A prompt audit across your codebase typically cuts token usage by 20-40%. So it's worth the afternoon.
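A quick way to size up an audit is to compare estimated token counts before and after a rewrite. The sketch below uses a rough 4-characters-per-token ratio, which is an assumption; use your provider's tokenizer when you need exact numbers. Both prompts are made-up examples.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; the 4-chars-per-token ratio is an assumption."""
    return max(1, round(len(text) / chars_per_token))


def audit(before: str, after: str) -> float:
    """Fraction of estimated tokens removed by the rewrite."""
    old, new = estimate_tokens(before), estimate_tokens(after)
    return 1 - new / old


verbose = (
    "You are a helpful assistant. Please make sure that you always respond "
    "in a helpful way. It is very important that you classify the sentiment "
    "of the following review as positive or negative. Respond only with one word."
)
trimmed = "Classify the review's sentiment. Reply with one word: positive or negative."
print(f"estimated reduction: {audit(verbose, trimmed):.0%}")
```

Run the same comparison over every prompt template in your codebase and sort by request volume to find the rewrites that pay off first.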

As of April 5, 2026, both OpenAI and Anthropic offer batch APIs with meaningful discounts. OpenAI's Batch API offers up to a 50% discount in exchange for accepting up to 24-hour turnaround on results.

If your use case isn't latency-sensitive — think nightly data processing, bulk classification, content generation pipelines — batch processing is free money sitting on the table.

Batch APIs are the easiest optimization to implement and somehow the one teams forget about most often. Check if your workload qualifies.

The implementation usually means swapping your real-time endpoint for the batch endpoint and handling the asynchronous submit-then-poll pattern. The official SDKs keep this to a small amount of code.
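As a sketch of what the switch involves for OpenAI's Batch API: you write one JSON line per request, upload the file, and create a batch job. The code below builds the input lines and leaves the network calls as comments, since they need an API key. The prompts and the `build_batch_lines` helper are hypothetical.

```python
import json
from typing import Iterable, List


def build_batch_lines(prompts: Iterable[str], model: str) -> List[str]:
    """One JSON line per request, in the shape the OpenAI Batch API expects."""
    lines = []
    for i, prompt in enumerate(prompts):
        lines.append(json.dumps({
            "custom_id": f"request-{i}",  # used to match results back to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [{"role": "user", "content": prompt}],
            },
        }))
    return lines


lines = build_batch_lines(["Classify: great!", "Classify: awful."], "gpt-4o")
print(lines[0])

# Submitting needs network access and an API key, so it is left as comments:
# from openai import OpenAI
# client = OpenAI()
# Save the lines to batch_input.jsonl first, then:
# uploaded = client.files.create(file=open("batch_input.jsonl", "rb"), purpose="batch")
# batch = client.batches.create(
#     input_file_id=uploaded.id,
#     endpoint="/v1/chat/completions",
#     completion_window="24h",
# )
# Poll client.batches.retrieve(batch.id) until its status is "completed".
```

Results come back as a file keyed by `custom_id`, so keep those IDs stable enough to join outputs to your source records.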

Regular caching only catches exact duplicates. But users ask the same question in different ways all the time. "What's the weather in NYC?" and "NYC weather today" should return the same cached answer.

Semantic caching uses embeddings to match similar queries. When a new request arrives, you compute its embedding, compare it against cached embeddings using cosine similarity, and return a cached response if the score exceeds your threshold.

Embedding calls are cheap — typically under $0.10 per million tokens — so the lookup cost is negligible compared to a full LLM call. Libraries like GPTCache and LangChain have built-in support for this pattern.

Start with a high similarity threshold (0.95+) and lower it gradually while monitoring output quality. Too aggressive and you'll serve wrong answers. Too conservative and you won't cache anything useful.
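The lookup described above can be sketched in a few lines. This version does a linear scan with hand-written toy vectors so it runs offline; a real system would call an embedding model and use a vector index. The class name, the toy vectors, and the cached answer are all illustrative assumptions.

```python
import math
from typing import Callable, List, Optional, Sequence, Tuple

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.95) -> None:
        self._embed = embed
        self._threshold = threshold
        self._entries: List[Tuple[Vector, str]] = []

    def get(self, query: str) -> Optional[str]:
        """Return the best cached response at or above the threshold, else None."""
        query_vec = self._embed(query)
        best_score, best_response = 0.0, None
        for vec, response in self._entries:
            score = cosine_similarity(query_vec, vec)
            if score >= self._threshold and score > best_score:
                best_score, best_response = score, response
        return best_response

    def put(self, query: str, response: str) -> None:
        self._entries.append((self._embed(query), response))


# Toy embeddings so the sketch runs offline; not output from a real model.
TOY_VECTORS = {
    "What's the weather in NYC?": [0.90, 0.10, 0.05],
    "NYC weather today": [0.88, 0.12, 0.06],
    "Best pizza in Chicago": [0.05, 0.20, 0.95],
}

semantic_cache = SemanticCache(embed=lambda text: TOY_VECTORS[text])
semantic_cache.put("What's the weather in NYC?", "Sunny, 72F")
print(semantic_cache.get("NYC weather today"))      # near-duplicate query
print(semantic_cache.get("Best pizza in Chicago"))  # unrelated query
```

The rephrased weather query clears the 0.95 threshold and reuses the stored answer, while the unrelated query falls through to a normal model call.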

Interesting wrinkle: long context is expensive context. If you're stuffing entire documents into every prompt, you're paying for mountains of irrelevant tokens.

Better approaches:

The math is straightforward. If your average prompt shrinks from 8,000 tokens to 2,000 tokens, you just cut input costs by 75%. And for output-heavy models like Claude Opus 4.6 where output tokens cost $25 per million, trimming the context that triggers verbose responses saves even more.

Streaming responses lets you evaluate output as it arrives. If the model goes off-track in the first 50 tokens, you can abort the request early and save everything after that.

This works especially well for:

But don't overdo it — aggressive early stopping can hurt quality. Start conservative and tune your cutoff thresholds based on actual error rates.
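One conservative way to structure the check is a single gate after the first few chunks. The sketch below simulates a stream with a generator so it runs offline; `fake_stream`, the JSON-shaped check, and the chunk counts are assumptions, and with a real SDK you would also close the underlying stream when aborting.

```python
from typing import Callable, Iterable, Iterator, List, Tuple


def stream_with_early_stop(
    chunks: Iterable[str],
    looks_ok: Callable[[str], bool],
    check_after_chunks: int = 50,
) -> Tuple[str, bool]:
    """Consume a stream; stop reading if the early output fails the check.

    Returns (text_so_far, completed).
    """
    text = ""
    for count, chunk in enumerate(chunks, start=1):
        text += chunk
        if count == check_after_chunks and not looks_ok(text):
            return text, False  # caller should close the real stream here
    return text, True


def fake_stream(tokens: List[str], consumed: List[str]) -> Iterator[str]:
    """Stand-in for a streaming response; records which chunks were read."""
    for token in tokens:
        consumed.append(token)
        yield token


def starts_like_json(text: str) -> bool:
    return text.lstrip().startswith("{")


good = ['{"label"', ': "positive"', "}"]
bad = ["Sure! ", "Here ", "is ", "a ", "long ", "explanation ", "of ", "sentiment..."]

consumed_good: List[str] = []
consumed_bad: List[str] = []
text_good, done_good = stream_with_early_stop(
    fake_stream(good, consumed_good), starts_like_json, check_after_chunks=2
)
text_bad, done_bad = stream_with_early_stop(
    fake_stream(bad, consumed_bad), starts_like_json, check_after_chunks=2
)
print(done_good, len(consumed_good))
print(done_bad, len(consumed_bad))
```

The off-track stream is abandoned after two chunks instead of eight. How much of the unread output you avoid paying for depends on how your provider bills cancelled requests, so confirm that before relying on the savings.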

Here's a counterintuitive move: spend money upfront to save money forever.

Fine-tuning a smaller model on your specific task can match or exceed the performance of a larger general-purpose model — at a fraction of the inference cost. A fine-tuned GPT-4o-mini handling your particular classification task might outperform generic Claude Opus 4.6, and it costs dramatically less per call.

The ROI math works like this: if fine-tuning costs $500 and saves you $200 per month on API calls, you break even in 2.5 months. Everything after that is pure savings.
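The same arithmetic as a small helper, in case you want to plug in your own numbers. The function names are illustrative, and the model ignores retraining and evaluation costs, which is an assumption worth revisiting for long-lived projects.

```python
def breakeven_months(upfront_cost: float, monthly_savings: float) -> float:
    """Months until cumulative savings cover the upfront fine-tuning cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return upfront_cost / monthly_savings


def net_savings(upfront_cost: float, monthly_savings: float, months: int) -> float:
    """Cumulative savings after the given number of months, net of upfront cost."""
    return monthly_savings * months - upfront_cost


print(breakeven_months(500, 200))  # 2.5
print(net_savings(500, 200, 12))   # 1900
```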

This strategy works best when you have:

As of April 5, 2026, open-source models like Llama 4 Maverick and DeepSeek V3 are genuinely competitive with commercial APIs for many tasks. Self-hosting eliminates per-token costs entirely — you just pay for compute.

But here's the catch (and it's a big one): self-hosting only pencils out at scale. You need enough request volume to justify the infrastructure spend. For most teams processing fewer than 50,000-100,000 requests per day, managed APIs are actually cheaper when you account for DevOps overhead, GPU rental, monitoring, and maintenance time.

Self-hosting is like buying versus renting. Cheaper over the long haul at high volume, but the upfront commitment and ongoing maintenance will crush you if you're not ready for it.

Run the numbers for your specific workload before committing. Tools like LiteLLM make it easy to proxy between self-hosted and API-based models, so you can A/B test both paths with real traffic.
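A back-of-the-envelope version of that calculation is sketched below. It assumes a single GPU at $3 per hour (the midpoint of the $2-4 range cited in this article), that one GPU can actually serve the traffic, and an API cost of $0.00125 per request (1,000 input and 500 output tokens at Mistral Large 3 list prices). Engineering time is modeled as an optional flat daily overhead.

```python
def selfhost_daily_cost(
    gpu_hourly_rate: float, gpus: int = 1, daily_overhead: float = 0.0
) -> float:
    """USD per day to keep the GPUs running around the clock, plus overhead."""
    return gpu_hourly_rate * 24 * gpus + daily_overhead


def breakeven_requests_per_day(
    api_cost_per_request: float,
    gpu_hourly_rate: float,
    gpus: int = 1,
    daily_overhead: float = 0.0,
) -> float:
    """Daily request volume at which self-hosting matches the API bill."""
    if api_cost_per_request <= 0:
        raise ValueError("api_cost_per_request must be positive")
    daily = selfhost_daily_cost(gpu_hourly_rate, gpus, daily_overhead)
    return daily / api_cost_per_request


volume = breakeven_requests_per_day(api_cost_per_request=0.00125, gpu_hourly_rate=3.0)
print(f"break-even at about {volume:,.0f} requests per day")
```

With these inputs the break-even lands near 57,600 requests per day, inside the 50,000-100,000 range quoted above. Adding a realistic `daily_overhead` for DevOps time pushes it higher.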

You can't optimize what you don't measure. Set up tracking for:

Set hard budget limits per API key. Both OpenAI and Anthropic support usage limits in their dashboards. And implement spending alerts — you want to know when usage spikes before the invoice arrives, not after.

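    # Simple cost tracking wrapper
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment

    def tracked_api_call(model, messages, **kwargs):
        response = client.chat.completions.create(
            model=model, messages=messages, **kwargs
        )
        # Token counts reported by the API for this call
        tokens_in = response.usage.prompt_tokens
        tokens_out = response.usage.completion_tokens
        # calculate_cost and log_cost are your own helpers (pricing lookup and logging sink)
        cost = calculate_cost(model, tokens_in, tokens_out)
        log_cost(model=model, cost=cost, tokens_in=tokens_in, tokens_out=tokens_out)
        return response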

Even a basic cost logger like this gives you the visibility to find your biggest optimization opportunities.
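The wrapper relies on a `calculate_cost` helper that the article does not show. One possible version is sketched below, together with a tiny spend tracker for alerts. The price table is copied from the pricing table earlier in this article, the dictionary keys are assumed model IDs, and `BudgetTracker` with its 80% alert ratio is a hypothetical design, not a provider feature.

```python
from typing import Dict, Tuple

# USD per 1M tokens (input, output), copied from the pricing table above.
PRICES_PER_MILLION: Dict[str, Tuple[float, float]] = {
    "gpt-4o": (2.50, 10.00),
    "claude-sonnet-4-6": (3.00, 15.00),
    "claude-opus-4-6": (5.00, 25.00),
}


def calculate_cost(model: str, tokens_in: int, tokens_out: int) -> float:
    """List-price cost in USD for one call."""
    try:
        price_in, price_out = PRICES_PER_MILLION[model]
    except KeyError:
        raise ValueError(f"no pricing configured for model {model!r}") from None
    return (tokens_in * price_in + tokens_out * price_out) / 1_000_000


class BudgetTracker:
    """Accumulates spend and reports when an alert threshold is reached."""

    def __init__(self, daily_budget_usd: float, alert_ratio: float = 0.8) -> None:
        self.daily_budget_usd = daily_budget_usd
        self.alert_ratio = alert_ratio
        self.spent = 0.0

    def record(self, cost: float) -> bool:
        """Add a cost; return True once spend reaches the alert threshold."""
        self.spent += cost
        return self.spent >= self.daily_budget_usd * self.alert_ratio


tracker = BudgetTracker(daily_budget_usd=1.00)
cost = calculate_cost("gpt-4o", tokens_in=1_200, tokens_out=300)
print(f"${cost:.4f}", tracker.record(cost))
```

Raising on unknown models is deliberate: a silent zero would hide spend on any model you forgot to price.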

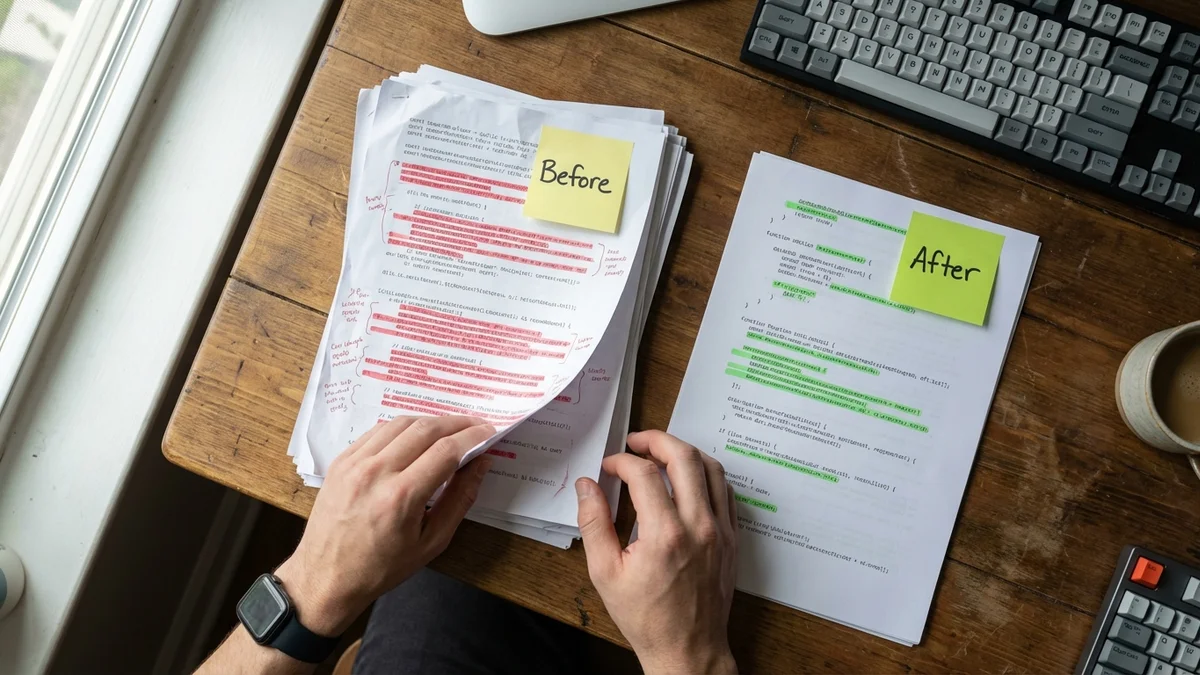

Before and after each change:

If you're serious about reducing AI API costs, start with the three strategies that require the least engineering effort: model routing (Strategy 1), response caching (Strategy 2), and prompt trimming (Strategy 3). These alone can typically reduce your API bill by 50-70%.

Then layer on batch processing, semantic caching, and monitoring as your system matures. Save fine-tuning and self-hosting for when you've exhausted the easy wins and have clear data on your most expensive workloads.

The AI API pricing war is driving costs down every quarter. But that's no reason to leave money on the table today.

You can use more than one AI provider at once, and you should. Multi-provider routing with tools like LiteLLM or OpenRouter lets you send requests to different providers based on cost, latency, or task type from a single interface. It also gives you failover protection: if one provider experiences downtime, your requests route to an alternative without any code changes.
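The failover idea itself is simple enough to sketch without any particular library. The code below is a generic illustration, not the LiteLLM or OpenRouter API: providers are plain callables, listed cheapest first, and both provider functions are fakes so the example runs offline.

```python
from typing import Callable, List, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # narrow this to your client's error types in production
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_cheap_provider(prompt: str) -> str:
    """Fake provider that simulates an outage."""
    raise ConnectionError("simulated outage")


def backup_provider(prompt: str) -> str:
    """Fake provider that always answers."""
    return f"backup answer to: {prompt}"


used, answer = complete_with_failover(
    "Summarize this ticket.",
    [("cheap", flaky_cheap_provider), ("backup", backup_provider)],
)
print(used, "->", answer)
```

Ordering the list by price means the expensive provider is only billed when the cheaper one is unavailable.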

Based on historical trends from 2023 to 2026, AI API pricing has dropped roughly 5-10x per capability tier each year. GPT-4-class intelligence that cost $60 per million output tokens in early 2023 now costs well under $10 per million on several providers. Competition among OpenAI, Anthropic, Google, Mistral, and open-source alternatives is accelerating this trend.

Whether self-hosting is cheaper depends entirely on your request volume. At roughly 50,000 or more requests per day with a dedicated GPU instance costing $2-4 per hour, self-hosting typically breaks even or saves money. Below that threshold, managed API pricing is usually cheaper once you factor in engineering time for setup, monitoring, model updates, and infrastructure management.

Volume discounts are available. Anthropic, OpenAI, and Google all offer enterprise pricing tiers with volume-based discounts, typically requiring annual spend commitments. These discounts can range from 10% to 40% off published rates depending on your volume. Contact their sales teams directly; the public pricing page is a starting point, not a ceiling.

Measure your average tokens per request (input and output separately) during development, multiply by your projected daily request volume, then multiply by the per-token rate for your chosen model. Add a 20-30% buffer since usage almost always grows as teams discover new use cases. Most providers offer free-tier credits or usage calculators to help estimate costs before committing.
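That estimate translates directly into a small function. The workload numbers below (1,500 input tokens, 400 output tokens, 20,000 requests per day) are made up for illustration; the rates are the Claude Sonnet 4.6 prices from the table earlier, and the 25% buffer sits in the middle of the suggested range.

```python
def estimate_monthly_cost(
    avg_tokens_in: float,
    avg_tokens_out: float,
    requests_per_day: int,
    price_in_per_million: float,
    price_out_per_million: float,
    buffer: float = 0.25,
    days: int = 30,
) -> float:
    """Projected monthly spend in USD, including a growth buffer."""
    per_request = (
        avg_tokens_in * price_in_per_million
        + avg_tokens_out * price_out_per_million
    ) / 1_000_000
    return per_request * requests_per_day * days * (1 + buffer)


monthly = estimate_monthly_cost(
    avg_tokens_in=1_500,
    avg_tokens_out=400,
    requests_per_day=20_000,
    price_in_per_million=3.00,
    price_out_per_million=15.00,
)
print(f"projected spend: ${monthly:,.0f} per month")
```

For this hypothetical workload the projection comes to about $7,875 per month, of which $1,575 is buffer.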