Claude Code Review 2026: Worth $25/MTok or Overrated?

An honest review of Anthropic's terminal coding agent in 2026. The pricing math, the SWE-bench numbers, and where Claude Code wins or burns your token budget.

Anthropic's terminal coding agent has become the default recommendation for senior engineers in 2026. But the marketing hype is loud, and the pricing isn't cheap. So does Claude Code actually deserve its 9.4/10 reputation, or is this just another AI tool that wins benchmarks and loses real workflows?

This Claude Code review digs into the pricing math, the SWE-bench numbers, the real bottlenecks, and the moments where the CLI genuinely saves hours versus the moments it burns tokens for nothing.

Rating: 9.4/10

One-line verdict: The most capable autonomous coding agent available right now, with pricing that punishes careless prompting.

Best for: Senior engineers, repo-wide refactors, multi-file feature work, and anyone already paying for a Claude Max plan.

Not for: Beginners learning to code, hobbyists with tight budgets, or teams without code review discipline.

If you only read one section of this Claude Code review, read this box. The rest is the math behind the rating.

Claude Code is Anthropic's official CLI agent that runs Claude Opus 4.6 (or Sonnet 4.6) directly in your terminal. You point it at a directory, describe what you want, and it reads files, edits them, runs tests, and iterates until the task is done. No IDE plugin required. (For a step-by-step setup walkthrough, see our Claude Code tutorial.)

The tool went generally available in May 2025 alongside Claude 4 and has been on a tear since. According to Anthropic's official documentation, it now ships with native subagents, hooks, MCP support, and a permissions system that lets you whitelist specific commands. It's one of three flagship terminal agents in 2026, alongside Gemini CLI and Aider.

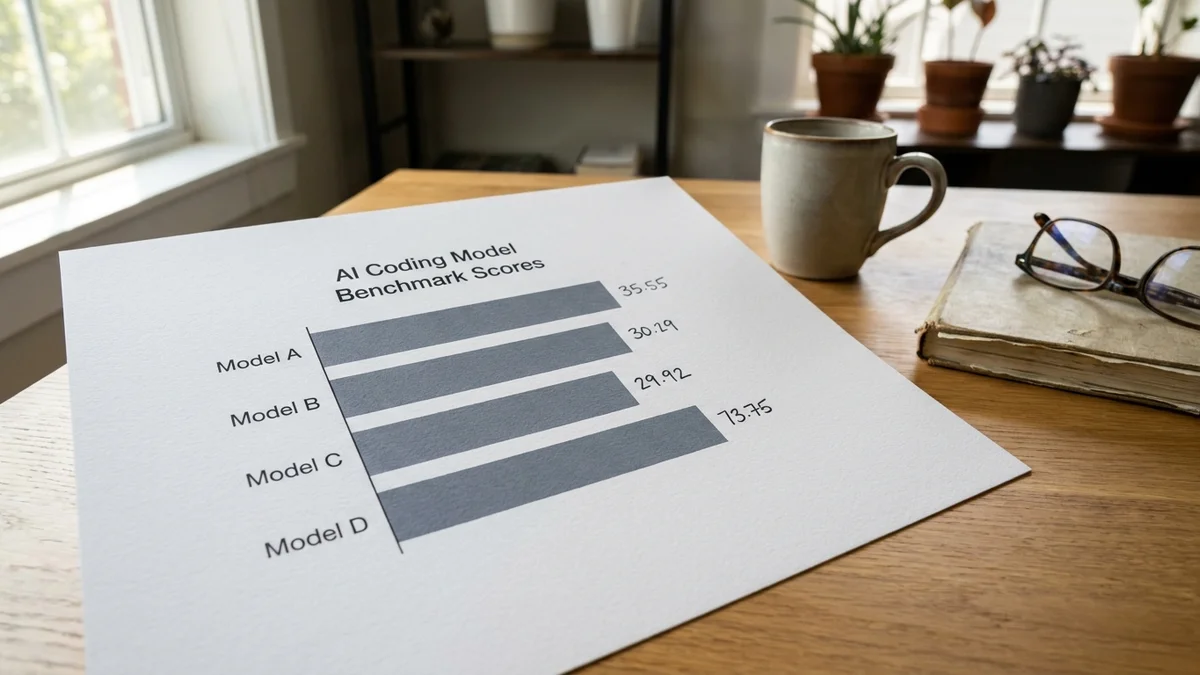

And the model behind it is the same Opus 4.6 that Anthropic reports at 81.4% on SWE-bench Verified (averaged over 25 trials with a prompt modification). Sonnet 4.6 sits close behind at 80.2% on the same benchmark, while OpenAI's self-reported o3 figure on SWE-bench Verified is 69.1%. Our Claude Opus 4.6 vs GPT-5 benchmark roundup has the full head-to-head breakdown.

So this isn't just another wrapper around a chat model. It's the wrapper Anthropic built for the model that's currently winning the coding benchmarks.

Claude Code packs a lot into a CLI binary. The features below are the ones that actually change how you work, not just marketing checkboxes.

Most AI coding tools dump suggestions into your editor and call it a day. Claude Code actually does the work. It reads files with grep, edits with patches, runs your test suite, and reads the output. Then it tries again if something fails.

This is the feature that makes the price tag tolerable. A 30-minute refactor that touches 12 files becomes a 4-minute prompt with one human review pass. The loop is tight enough that you can sit there and watch it work, or you can walk away and check back when it pings.
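The edit, test, retry cycle described above can be sketched in a few lines. This is a generic illustration, not Claude Code's actual implementation: `SuiteResult`, `agentic_loop`, `run_tests`, and `propose_edit` are hypothetical names, and the edit budget is an assumption added to keep a vague task from running forever.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SuiteResult:
    """Outcome of one test run (hypothetical structure)."""
    passed: bool
    output: str


def agentic_loop(
    run_tests: Callable[[], SuiteResult],
    propose_edit: Callable[[str], None],
    max_edits: int = 5,
) -> Optional[int]:
    """Run tests, feed failures back to the editor, repeat until green.

    Returns the number of edits applied before the suite passed,
    or None if the edit budget ran out first.
    """
    for edits_applied in range(max_edits + 1):
        result = run_tests()
        if result.passed:
            return edits_applied
        if edits_applied < max_edits:
            # The failing output is the feedback signal for the next edit.
            propose_edit(result.output)
    return None


if __name__ == "__main__":
    # Fake project with two bugs; each "edit" fixes one of them.
    state = {"bugs": 2}

    def fake_tests() -> SuiteResult:
        return SuiteResult(state["bugs"] == 0, f"{state['bugs']} failing")

    def fake_edit(test_output: str) -> None:
        state["bugs"] -= 1

    print(agentic_loop(fake_tests, fake_edit))
```

The budget is the part worth copying: a hard cap on retries is what separates a 4-minute run from an open-ended token burn.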

You can spawn multiple Claude instances in parallel. One agent runs the test suite while another writes documentation. A third can be researching a library API in the background. According to Anthropic's agent architecture docs, subagents inherit context but operate in isolated conversations, which keeps token usage predictable.

This is genuinely new in CLI tools. Aider doesn't have it. Cursor's agent mode doesn't really have it. So if you're juggling parallel workstreams, this alone is a reason to pay attention.

Model Context Protocol support means Claude Code can talk to your Postgres database, your Linear board, your Slack workspace, or any other MCP server. The protocol launched in late 2024 and has matured fast. By early 2026, there are over 200 community MCP servers in the public registry.

What this means in practice: you can ask Claude Code to query your production database, find the slowest queries, write an index migration, and open a PR. All in one prompt. That's the kind of workflow that justifies the price tag.

You configure Claude Code via settings.json, which lets you set up pre-commit hooks, allowlist specific bash commands, and block destructive operations by default. This matters because the agent will absolutely try to run git push --force if you let it.

The permission model is opt-in for sensitive operations. So you can run Claude Code in autonomous mode on a feature branch and trust it not to nuke your main.
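As a rough sketch of what an allowlist plus deny rules might look like, the snippet below builds a settings object and prints it as JSON. The `permissions`, `allow`, and `deny` keys and the `Bash(...)` rule strings are assumptions modeled on the behavior described above, so check Anthropic's settings documentation for the exact syntax before copying anything into a real settings.json.

```python
import json

# Assumed shape: a "permissions" object holding "allow" and "deny" rule lists.
# The rule strings are illustrative, not verified against the current docs.
settings = {
    "permissions": {
        "allow": [
            "Bash(npm run test:*)",  # let the agent run the test suite
            "Bash(git diff:*)",      # read-only git inspection
        ],
        "deny": [
            "Bash(git push --force:*)",  # never rewrite remote history
            "Bash(rm -rf:*)",            # no recursive deletes
        ],
    }
}


def render_settings(config: dict) -> str:
    """Serialize the settings dict as pretty-printed JSON."""
    return json.dumps(config, indent=2)


if __name__ == "__main__":
    print(render_settings(settings))
```

Keeping the deny list short and explicit makes it easy to review in a PR, which matters because the file is shared by the whole team.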

The 2025 update added a planning mode that breaks tasks into steps before execution. You can review the plan, edit it, and then approve. It's a small UX touch that prevents a lot of wasted token spend.

Use this on any task that costs more than $0.50 to run. The 30 seconds you spend reviewing the plan saves you 5 minutes of cleanup when the agent goes off in a wrong direction.

Claude Code reads CLAUDE.md files at every level of your repo, which act as persistent instructions. Drop coding standards, file structure notes, or testing conventions into one of these files and the agent will follow them across sessions.

This is the closest thing to teaching the agent your codebase. And because the file is version-controlled, the whole team benefits from the same instructions.

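You can configure when to use Opus 4.6 versus Sonnet 4.6. Cheaper models for simple lookups, the heavy hitter for architecture decisions. Routing the bulk of your reads through Sonnet trims the bill noticeably: at the list prices covered in the pricing section, Sonnet costs 40% less per token than Opus, so 40% is the ceiling on what routing alone can save.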

Benchmark numbers tell part of the story. SWE-bench Verified, which tests against real GitHub issues from popular Python projects, puts Anthropic's reported Claude Opus 4.6 score at 81.4% (with a prompt modification, averaged over 25 trials). That's roughly a point above Anthropic's reported Sonnet 4.6 score of 80.2%, and well ahead of OpenAI's self-reported o3 score of 69.1%.

But benchmarks aren't user experience. Based on the Anthropic engineering blog and aggregated community reports across 2026, the agent works well in some scenarios and struggles in others.

Where Claude Code wins:

Where it struggles:

There's also a quieter problem: the agent can spiral. If your first prompt is vague, it might run for 15 minutes, burn through 200K input tokens, and produce a half-broken PR. You learn fast to write better specs.

And this is where the gap between novice users and senior engineers shows. Senior devs know how to scope a task. Junior users hand the agent open-ended problems and end up with bills they can't justify.

Claude Code pricing is where the conversation gets uncomfortable. As of early 2026, you have three options:

| Plan | Price | What You Get |
|---|---|---|
| Pay-as-you-go API | $5/M input, $25/M output (Opus) | Direct API billing, no caps |
| Claude Pro | $20/month | Limited Claude Code with daily usage caps |
| Claude Max | $100-200/month | Higher limits, priority access |

The API math is what most teams care about. A typical 30-minute coding session on a real codebase consumes roughly 80K-150K input tokens and 15K-30K output tokens. So one session costs somewhere between $0.80 and $1.50 with Opus.
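The per-session arithmetic is easy to check. The sketch below plugs the token ranges above into the Opus list prices from the table; `session_cost` is a hypothetical helper name, and real sessions will vary with caching and how often the agent re-reads files.

```python
# Opus list prices from the pricing table, in dollars per million tokens.
OPUS_INPUT_PER_MTOK = 5.00
OPUS_OUTPUT_PER_MTOK = 25.00


def session_cost(
    input_tokens: int,
    output_tokens: int,
    input_price: float = OPUS_INPUT_PER_MTOK,
    output_price: float = OPUS_OUTPUT_PER_MTOK,
) -> float:
    """Dollar cost of one session at per-million-token prices."""
    return (
        input_tokens / 1_000_000 * input_price
        + output_tokens / 1_000_000 * output_price
    )


if __name__ == "__main__":
    low = session_cost(80_000, 15_000)     # light 30-minute session
    high = session_cost(150_000, 30_000)   # heavy 30-minute session
    print(f"Estimated session cost: ${low:.2f} to ${high:.2f}")
```

Note that output tokens are a small share of the volume but about half of the cost, which is why verbose generations hurt more than large reads.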

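Run that 4 times a day over roughly 21 working days and you're looking at about $65-125 per developer per month at these session sizes, or roughly $1,300-2,500 a month for a 20-person engineering team. Sessions that spiral push that number higher, fast.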

But, and this is important, the alternative is paying that engineer's hourly rate to do the same work in 4x the time. The economics work if your engineers are senior and your tasks are non-trivial. They don't work if you're using Claude Code to generate React buttons.

According to Anthropic's pricing page, Sonnet 4.6 runs at $3/M input and $15/M output, which cuts costs by roughly 40% at list price for tasks where you don't need Opus-level reasoning. The smartest workflow is Sonnet for reads and exploration, Opus for actual code generation.
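To see what mixed routing does to a bill, here is a minimal sketch using the list prices quoted above. The function names and the idea of a single `sonnet_share` fraction are simplifying assumptions; a real workload splits reads and writes unevenly between models.

```python
# List prices in dollars per million tokens: (input, output).
PRICES = {
    "opus": (5.00, 25.00),
    "sonnet": (3.00, 15.00),
}


def cost(model: str, input_tokens: float, output_tokens: float) -> float:
    """Dollar cost of a token volume on one model."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def blended_cost(input_tokens: float, output_tokens: float, sonnet_share: float) -> float:
    """Cost when sonnet_share (0.0 to 1.0) of all tokens goes to Sonnet, the rest to Opus."""
    if not 0.0 <= sonnet_share <= 1.0:
        raise ValueError("sonnet_share must be between 0.0 and 1.0")
    opus_share = 1.0 - sonnet_share
    return (
        cost("sonnet", input_tokens * sonnet_share, output_tokens * sonnet_share)
        + cost("opus", input_tokens * opus_share, output_tokens * opus_share)
    )


def savings_vs_all_opus(input_tokens: float, output_tokens: float, sonnet_share: float) -> float:
    """Fraction of the all-Opus bill saved by routing sonnet_share to Sonnet."""
    all_opus = cost("opus", input_tokens, output_tokens)
    return 1.0 - blended_cost(input_tokens, output_tokens, sonnet_share) / all_opus


if __name__ == "__main__":
    for share in (0.0, 0.5, 1.0):
        saved = savings_vs_all_opus(150_000, 30_000, share)
        print(f"{share:.0%} routed to Sonnet saves {saved:.0%}")
```

Because both Sonnet prices are 60% of the matching Opus prices, the savings scale linearly with the share of tokens you route to Sonnet.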

If your engineering team isn't already disciplined about scoping work, Claude Code will surface that problem in your API bill before it surfaces in your sprint retro.

Pros:

- CLAUDE.md files give you portable, version-controlled agent instructions

Cons:

You should use Claude Code if you're a senior engineer working on real production codebases with decent test coverage. You should use it if your team can articulate clear specs and review PRs carefully. You should use it if you've already tried Cursor and Aider and found yourself wanting more autonomy.

You should also use it if your engineering hours cost more than $100/hour to your business. The tool pays for itself fast at that level. A single afternoon's productivity gain pays a month of token spend.

And you should use it if you work on infra, DevOps, or backend systems where the agentic loop maps cleanly to running scripts and reading output.

Skip Claude Code if you're learning to code. The agent will write working code faster than you can read it, and you'll learn nothing. Use Cursor or GitHub Copilot instead, where the suggestions are smaller and more educational.

Skip it if your codebase has zero tests. Without a feedback signal, the agent flies blind, and you'll spend more time debugging its output than you saved.

Skip it if you can't justify $100+/month per developer in tooling. Aider with DeepSeek V3 gets you maybe 70% of the capability at a fraction of the cost, and it's open source. You can also run Claude Code itself against local LLMs if you want the same UX without the token bill. Not as polished, but free and good enough for solo work.

| Tool | Strength | Weakness | Price |
|---|---|---|---|
| Claude Code | Agentic coding quality | Cost | $5/$25 per MTok (Opus) |
| Cursor | IDE integration | Less autonomous | $20/month |
| Aider | Open source, model-agnostic | Less polish | Free + model costs |
| Gemini CLI | Free tier, 2M context | Lower coding scores | Free |
| GitHub Copilot | Inline speed | Not agentic | $10-39/month |

Cursor wins for tight IDE feedback loops and pixel-perfect UI work. Aider wins on price and flexibility, and the open-source Goose CLI is increasingly cited as a Claude Code alternative for cost-sensitive teams. Gemini CLI wins for massive-context tasks like reading entire monolithic codebases at once.

Claude Code wins on the metric that matters most for senior engineers: how much real, mergeable work it produces per hour of human time. And on SWE-bench Verified, Anthropic's reported Opus 4.6 score sits at the top of the major commercial CLIs we track, even if Sonnet 4.6 is now within a point of it.

Claude Code is the best autonomous coding agent in 2026, and it's not particularly close. The 9.4/10 rating from the AI Bytes tools database is earned, not hyped. SWE-bench Verified leadership, the strongest agentic loop in any commercial CLI, and an MCP ecosystem that keeps growing add up to a genuinely useful tool.

But Claude Code is also expensive, and it punishes lazy use. So treat it like a senior contractor. Give it clear specs, review its output, and don't let it run unsupervised on production paths.

If you're a senior engineer with budget authority and a decent codebase, this is a no-brainer. If you're a student or hobbyist, look elsewhere. The price is the gatekeeper, and the gatekeeper is doing its job.

Final Rating: 9.4/10

The best autonomous coding agent available in 2026, but priced for senior engineers and serious codebases. If you can scope tasks tightly and review PRs carefully, it pays for itself. If not, Aider or Cursor are smarter choices.

Not out of the box. Claude Code is built around an API connection to Anthropic's servers and has no official support for local models like Llama 4 or DeepSeek V3. If you need an offline or self-hosted agent, look at Aider with Ollama or Continue.dev. Anthropic has not announced any local-model support and is unlikely to ship it given its hosted business model.

By default, Claude Code asks for permission before running git operations, file deletions, or shell commands not on your allowlist. You can configure this in settings.json with deny rules for commands like git push --force or rm -rf. If something does slip through, your safety net is git: commit often, work on feature branches, and never run Claude Code on a dirty main branch.

Performance degrades on monorepos without a well-structured CLAUDE.md file at the root. The agent uses grep and file-targeted reads rather than loading everything, so it scales decently if you guide it. For repos over 500K lines, expect to write CLAUDE.md files at the directory level pointing the agent at relevant subsystems. Token costs also climb fast on large repos, so use Sonnet for exploration and Opus only for final edits.

For consistent daily users running 3+ hours of Claude Code per day, the $200/month Max plan typically beats API pricing. For occasional users running a few sessions per week, pay-as-you-go is cheaper. Anthropic's Max plan also includes priority access during peak hours, which matters in 2026 as Claude Code adoption has caused noticeable queue times on the standard tier.

Claude Code works alongside Cursor or Copilot; they don't conflict. Many developers use Cursor or Copilot for inline autocomplete in the IDE and switch to Claude Code in a terminal for larger agentic tasks. The workflows complement each other well: Copilot for line-level speed, Claude Code for repo-wide refactors. The only real cost is your tooling budget.