Claude Opus 4.6 vs GPT-5: 8 Tests, 2 Winners

Claude Opus 4.6 leads in coding and general knowledge while OpenAI's o3 dominates math benchmarks. Eight tests, two different winners, and a clear takeaway for developers.

Claude Opus 4.6 scores 75.6% on SWE-bench Verified (bash, LM-only), among the highest publicly reported results. That number alone tells you something important about where the AI model race stands right now. OpenAI's models dominate mathematical reasoning. Anthropic's flagship crushes real-world coding tasks. And the winner between them depends entirely on what you're building.

If you need the short answer: Claude Opus 4.6 is the better choice for coding, software engineering, and general knowledge. OpenAI's o3 wins for math, formal reasoning, and abstract problem-solving. GPT-4o wins on price.

The Claude Opus 4.6 vs GPT-5 debate captures how much the AI market has shifted in two years. Both Anthropic and OpenAI have released multiple flagship models, prices have dropped significantly, and benchmarks that once seemed purely academic now directly predict real-world task performance. This guide breaks down eight major benchmarks to help you make the right call.

Claude Opus 4.6 is one of the strongest general-purpose AI models available in early 2026 for most developer and knowledge-worker tasks. It posts strong scores on MMLU (general knowledge at 92.3%, per benchmark aggregators), HumanEval (code generation at 93.7%), and SWE-bench Verified (real-world software engineering at 75.6%). OpenAI's advantage comes from specialization: the o3 reasoning model beats everything on math and abstract reasoning, while GPT-4o offers solid performance at roughly half the price.

For coding and software engineering work, pick Claude Opus 4.6. For math-heavy workloads, pick o3. For budget-sensitive projects, GPT-4o delivers strong results per dollar.

Benchmark data below comes from Papers with Code, SWE-bench, Chatbot Arena, and ARC Prize. This comparison pits Claude Opus 4.6 against OpenAI's strongest publicly benchmarked models: GPT-4o for general tasks and o3 for reasoning.

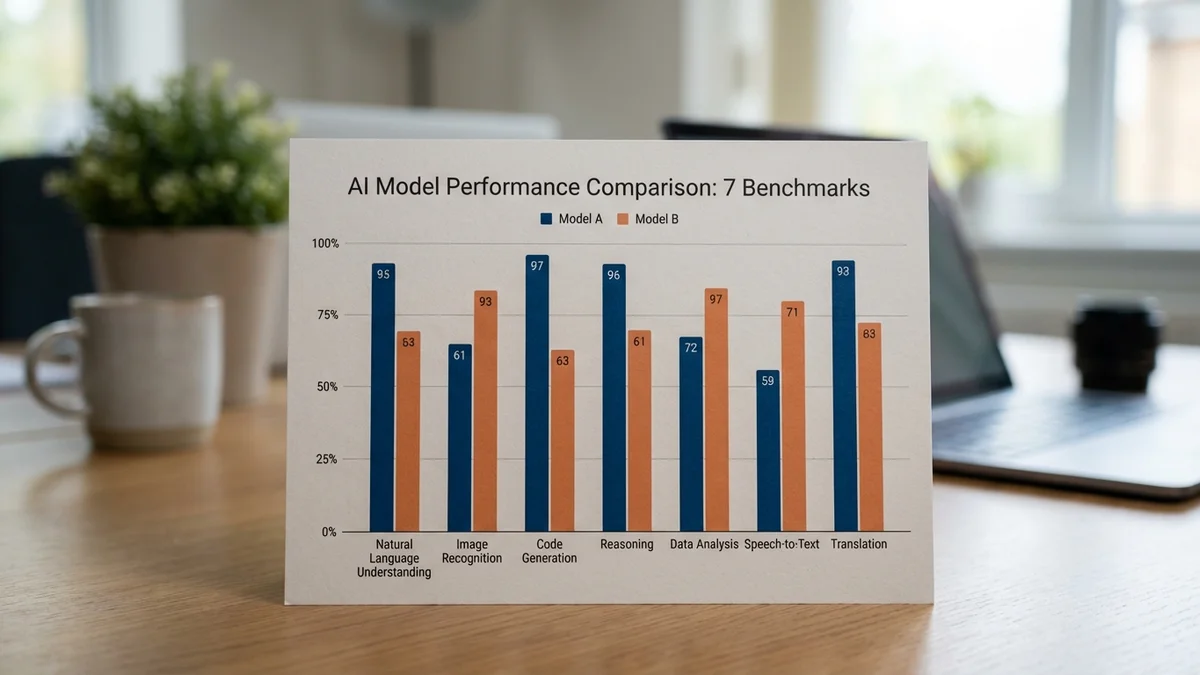

| Benchmark | Claude Opus 4.6 | GPT-4o | o3 | Winner |
|---|---|---|---|---|
| MMLU | 92.3% | 88.7% | N/A | Claude Opus 4.6 |
| HumanEval | 93.7% | 90.2% | N/A | Claude Opus 4.6 |
| GPQA Diamond | 91.3% | N/A | 87.7% | Claude Opus 4.6 |
| SWE-bench Verified | 75.6% | N/A | 58.4% | Claude Opus 4.6 |
| Chatbot Arena Elo | 1,496 | N/A | N/A | Claude Opus 4.6 |
| MATH | 85.1% | N/A | 96.7% | o3 |
| GSM8K | 97.8% | 95.8% | 99.2% | o3 |
| ARC-AGI | 53.0% | N/A | 87.5% | o3 |

Claude Opus 4.6 takes five of eight benchmarks. OpenAI's o3 wins the three math and reasoning tests (MATH, GSM8K, and ARC-AGI). Benchmark scores are sourced from official leaderboards and third-party aggregators; Chatbot Arena Elo scores shift frequently as new models enter.
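The five-to-three split can be re-derived from the table with a few lines of Python. This is only a sketch: the scores are copied from the table, `None` stands in for N/A, and a model that is the only one with a reported score (as on Chatbot Arena Elo) is counted as the winner of that row, which is an assumption about how the tally was made.

```python
# Scores copied from the benchmark table; None marks an N/A entry.
SCORES = {
    "MMLU": {"Claude Opus 4.6": 92.3, "GPT-4o": 88.7, "o3": None},
    "HumanEval": {"Claude Opus 4.6": 93.7, "GPT-4o": 90.2, "o3": None},
    "GPQA Diamond": {"Claude Opus 4.6": 91.3, "GPT-4o": None, "o3": 87.7},
    "SWE-bench Verified": {"Claude Opus 4.6": 75.6, "GPT-4o": None, "o3": 58.4},
    "Chatbot Arena Elo": {"Claude Opus 4.6": 1496, "GPT-4o": None, "o3": None},
    "MATH": {"Claude Opus 4.6": 85.1, "GPT-4o": None, "o3": 96.7},
    "GSM8K": {"Claude Opus 4.6": 97.8, "GPT-4o": 95.8, "o3": 99.2},
    "ARC-AGI": {"Claude Opus 4.6": 53.0, "GPT-4o": None, "o3": 87.5},
}


def tally_wins(scores):
    """Count, per model, the benchmarks where it has the highest reported score."""
    wins = {}
    for row in scores.values():
        # Ignore models with no reported score on this benchmark.
        reported = {model: s for model, s in row.items() if s is not None}
        winner = max(reported, key=reported.get)
        wins[winner] = wins.get(winner, 0) + 1
    return wins


WINS = tally_wins(SCORES)
print(WINS)  # {'Claude Opus 4.6': 5, 'o3': 3}
```

Note that several rows compare only two of the three models, so the tally reflects the reported data rather than a full head-to-head.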

MMLU tests broad knowledge across 57 academic subjects (everything from grade school math to professional law and medicine). Claude Opus 4.6 leads the entire field with 92.3%, beating GPT-4o's 88.7% by 3.6 points. DeepSeek R1 scores 90.8%, and Claude Sonnet 4.6 manages 89.5%. Opus sits at the top.

That lead matters for practical work. If you're using AI to research, answer domain-specific questions, or build knowledge-base products, the model with the broadest and most accurate knowledge has the edge. Combined with its 1,000,000-token context window, Opus 4.6 can process entire documents that other models would need to chunk.

The gap between Opus 4.6 and GPT-4o on MMLU has implications beyond bragging rights. Models with stronger general knowledge produce more accurate summaries, catch more errors in fact-checking tasks, and give better answers to ambiguous questions. If you're building a customer support bot, a research assistant, or any product that handles diverse topics, that 3.6-point difference shows up in user satisfaction.

This is where Claude Opus 4.6 separates itself from every other model available.

On HumanEval (function-level code generation), Opus 4.6 scores 93.7% compared to GPT-4o's 90.2%. Both are strong, but Claude's 3.5-point lead means fewer broken functions and less time spent debugging AI-generated code.

SWE-bench Verified tells the bigger story. This benchmark measures whether a model can resolve real GitHub issues from popular open-source repositories — not toy problems, but actual bugs with messy codebases and complex dependencies. Claude Opus 4.6 scores 75.6% (bash, LM-only). OpenAI's o3 follows at 58.4%. Further down, GPT-4.1 manages 39.6%.

That 17-point gap between Opus 4.6 and o3 translates to meaningful productivity gains when you're running automated issue resolution at scale. And this tracks with developer community reports: tools like Claude Code and Cursor (which supports Claude as a backend) have gained serious traction because Claude's code output tends to need fewer correction cycles.

The 1M context window adds another edge for coding. When fixing bugs in large projects, loading entire modules or multiple related files into a single prompt produces better results than truncating context. GPT-4o's 128K window is generous, but for enterprise-scale codebases, Claude's nearly 8x larger context capacity makes a measurable difference in fix accuracy.
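To make the context-window difference concrete, here is a rough sketch of how many separate prompts a body of code would need under each window. The window sizes come from this article; the 400,000-token codebase and the 4,000 tokens reserved for the model's reply are hypothetical numbers chosen for illustration, and real token counts depend on each provider's tokenizer.

```python
import math

# Context window sizes as quoted in this article.
CONTEXT_WINDOWS = {"Claude Opus 4.6": 1_000_000, "GPT-4o": 128_000}


def chunks_needed(prompt_tokens, context_window, reserved_for_output=4_000):
    """Return how many prompts are needed to cover prompt_tokens.

    reserved_for_output is an assumed allowance for the model's reply,
    not a documented provider setting.
    """
    usable = context_window - reserved_for_output
    if usable <= 0:
        raise ValueError("reserved_for_output must be smaller than the window")
    return math.ceil(prompt_tokens / usable)


CODEBASE_TOKENS = 400_000  # hypothetical size of the files being loaded

for model, window in CONTEXT_WINDOWS.items():
    print(model, chunks_needed(CODEBASE_TOKENS, window))
# Claude Opus 4.6 1
# GPT-4o 4
```

Under these assumptions the whole codebase fits in one Opus 4.6 prompt, while GPT-4o would see it in four pieces, each without direct visibility into the others.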

If coding is your primary use case, Opus 4.6 is the clear winner.

Claude's SWE-bench dominance — resolving real GitHub issues across messy codebases — is the benchmark that matters most for professional developers.

GPQA Diamond tests graduate-level science questions across physics, chemistry, and biology. Claude Opus 4.6 scores 91.3%, surpassing o3's 87.7% by 3.6 points. For reference, OpenAI's o1 scores 78% on this benchmark. A general-purpose model outperforming a specialized reasoning model on a reasoning-heavy test suggests Claude's broad training translates into strong scientific reasoning.

OpenAI's o3 dominates every math and reasoning benchmark in this comparison. The gaps aren't small.

On MATH (competition-level mathematics): o3 scores 96.7%, Opus 4.6 scores 85.1%. That's an 11.6-point gap. On GSM8K (grade school math word problems): o3 hits 99.2% while Opus 4.6 posts 97.8%.

For reference, DeepSeek R1 hits 83.5% on MATH, trailing Claude. The entire field is behind o3 on mathematical reasoning.

But context is important. o3 is a specialized reasoning model (it uses extended chain-of-thought processing), which typically means higher latency and higher cost per query. It's designed for problems that benefit from deliberate, multi-step thinking. Comparing it to a general-purpose model like Opus 4.6 isn't entirely fair; they're optimized for different jobs.

OpenAI's o1 model provides a middle option, scoring 94.8% on MATH. For teams that want solid math reasoning without o3-level costs, o1 is a practical choice.

So if math is your primary workload, o3 is the obvious pick. For everyone else, Claude Opus 4.6's math scores are more than adequate alongside its strengths in coding and knowledge.

ARC-AGI tests visual pattern-recognition puzzles that require genuine abstraction, not memorized training patterns. It's designed to measure reasoning that goes beyond what standard benchmarks capture.

o3 at high compute scores 87.5%. Claude Opus 4.6 scores 53%.

That 34.5-point gap is the widest in this entire comparison. DeepSeek R1 (~15.8%) trails even further behind, so Claude still beats most of the field. But o3 is operating on an entirely different level here.

For practical applications like coding, writing, and analysis, ARC-AGI performance doesn't have much direct impact. It's a research-oriented benchmark that tracks progress toward general reasoning capability. Still, the gap reveals a genuine limitation in Claude's abstract reasoning relative to OpenAI's specialized approach.

The Chatbot Arena (hosted at lmarena.ai, formerly LMSYS) uses blind human evaluations where users compare two anonymous model responses and pick the winner. It's the best proxy available for overall conversational quality.

As of April 2026, Claude Opus 4.6 sits at approximately 1,496 Elo, ranking near the top of the overall Text leaderboard. GPT-4o has dropped significantly in the rankings as newer models from OpenAI (GPT-5.x), Google (Gemini 3.x), and xAI (Grok 4.x) have entered the arena. Chatbot Arena Elo scores shift as new models join and voting patterns evolve.

Some users still prefer GPT-4o's conversational style. But on aggregate human preference, Claude Opus 4.6 currently leads by a wide margin.

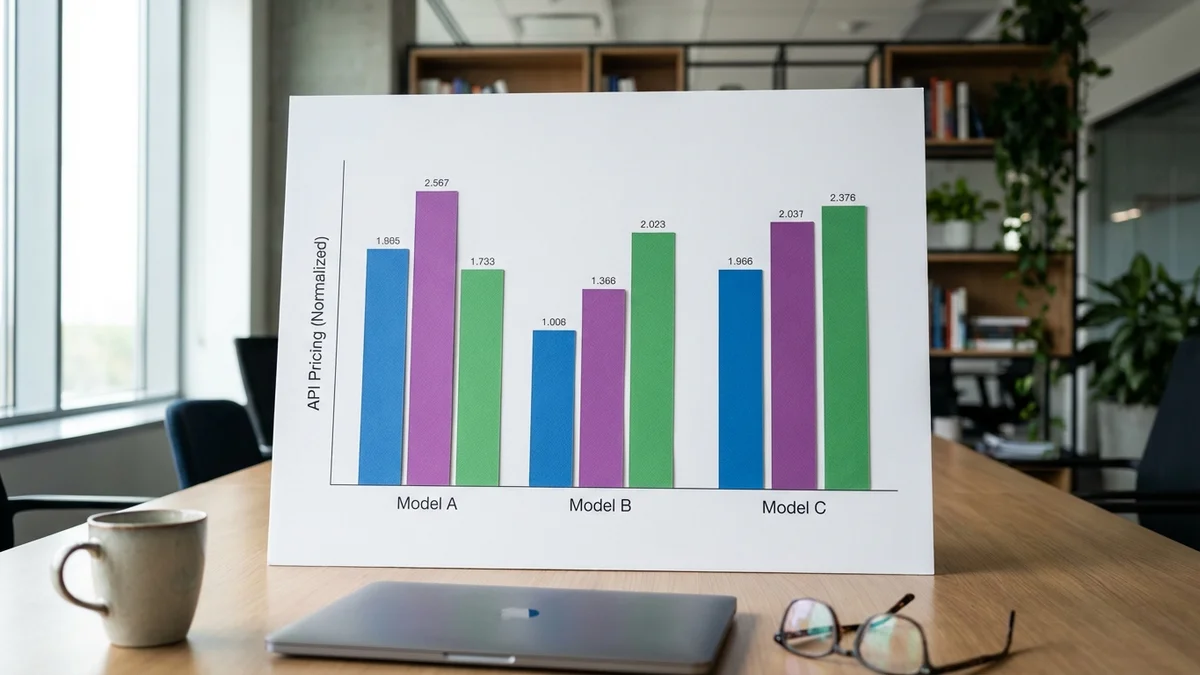

Performance data only tells half the story. Cost matters, especially at scale.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context Window |
|---|---|---|---|
| Claude Opus 4.6 | $5.00 | $25.00 | 1,000,000 tokens |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 200,000 tokens |
| GPT-4o | $2.50 | $10.00 | 128,000 tokens |

GPT-4o costs half as much as Claude Opus 4.6 on input tokens ($2.50 vs. $5.00 per million) and 60% less on output tokens ($10.00 vs. $25.00 per million). For applications processing millions of tokens daily (and many production apps do), that gap compounds fast.

But lower price doesn't mean better value. Opus 4.6 outperforms GPT-4o on five of eight benchmarks and offers a 1M context window that's nearly 8 times larger than GPT-4o's 128K. If you're processing legal contracts, analyzing large codebases, or running multi-turn agent workflows, that extra context capacity directly improves output quality.

Claude Sonnet 4.6 deserves attention here. At $3/$15 per million tokens, it scores 89.5% on MMLU and competitive results on HumanEval, placing it between Opus and GPT-4o on capability while costing only slightly more than GPT-4o. For teams that want strong coding performance without Opus-tier pricing, Sonnet 4.6 is the smart pick.

One practical approach gaining traction: model routing. Send complex coding tasks to Opus 4.6, simpler queries to Sonnet or GPT-4o, and math-heavy workloads to o3. This gives you premium accuracy where it counts and cost savings everywhere else.
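A minimal sketch of that routing idea follows. Everything here is illustrative: the keyword lists are a hypothetical stand-in for a real task classifier, the model strings are plain labels rather than verified API identifiers, and GPT-4o is picked for simple queries where Sonnet would serve equally well.

```python
from enum import Enum


class TaskKind(Enum):
    CODING = "coding"
    MATH = "math"
    GENERAL = "general"


# Labels only; substitute the identifiers your provider actually expects.
ROUTES = {
    TaskKind.CODING: "claude-opus-4.6",
    TaskKind.MATH: "o3",
    TaskKind.GENERAL: "gpt-4o",
}

# Hypothetical keyword hints; a production router would use a better classifier.
MATH_HINTS = ("prove", "integral", "equation", "probability")
CODE_HINTS = ("bug", "stack trace", "refactor", "function", "unit test")


def classify(prompt):
    """Guess the task kind from keywords in the prompt."""
    text = prompt.lower()
    if any(hint in text for hint in MATH_HINTS):
        return TaskKind.MATH
    if any(hint in text for hint in CODE_HINTS):
        return TaskKind.CODING
    return TaskKind.GENERAL


def route(prompt):
    """Return the model label that should handle this prompt."""
    return ROUTES[classify(prompt)]


print(route("Fix the bug in this function"))  # claude-opus-4.6
print(route("Solve this equation for x"))     # o3
print(route("Summarize this email"))          # gpt-4o
```

Keeping the routing table separate from the classifier means the model choices can change as leaderboards shift without touching the classification logic.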

The Claude Opus 4.6 vs GPT-5 comparison doesn't produce a single champion. It produces two.

For coding, software engineering, and broad knowledge work, Claude Opus 4.6 is the best model you can use right now. Its 75.6% SWE-bench Verified score and 92.3% MMLU are both strong results. Developers who need accurate, production-ready code generation should default to Opus 4.6.

OpenAI's o3 owns mathematical and formal reasoning. With 96.7% on MATH and 87.5% on ARC-AGI, it handles quantitative problems that no other model comes close to matching.

And GPT-4o remains the value play. At half the input price and 40% of the output price of Opus 4.6, it's the practical choice for cost-conscious teams building conversational products.

What about the rest of the field? DeepSeek R1 and V3 offer strong open-source alternatives, particularly for teams that need to self-host. Google's Gemini models (3.x series) are competitive but don't lead the benchmarks included in this comparison. But for API-based usage where you want the best possible results, the Anthropic and OpenAI comparison is still the main event.

The smartest approach for most teams isn't picking one model; it's using all three strategically. Route coding to Opus 4.6, math to o3, and high-volume chat to GPT-4o. This model routing pattern is becoming standard in production AI systems, and the benchmark data makes a clear case for why.

Yes, and it's increasingly common in production. Model routing lets you send coding tasks to Opus 4.6 and simpler queries to GPT-4o, optimizing both accuracy and cost. Orchestration tools like LiteLLM and OpenRouter support multi-model routing out of the box, making setup straightforward for most API-based architectures.

At $5 per million input tokens and $25 per million output tokens, a developer sending roughly 100 queries per day (averaging 2,000 input and 1,000 output tokens each) would spend about $105 per month. Lighter users at 10-20 queries per day would spend roughly $10-20/month. Heavy users running automated pipelines or processing long documents can reach $500+/month depending on volume and output length.
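The $105 figure can be reproduced with a short calculation. This sketch assumes a 30-day month and uses the per-million-token prices quoted in this article as defaults; the function name and parameters are illustrative, not part of any provider's SDK.

```python
def monthly_cost(queries_per_day, input_tokens, output_tokens,
                 input_price=5.00, output_price=25.00, days=30):
    """Estimate monthly API spend in dollars.

    Prices are dollars per 1M tokens; defaults are the Opus 4.6 prices
    quoted in this article. days=30 is an assumed month length.
    """
    daily_input = queries_per_day * input_tokens * input_price / 1_000_000
    daily_output = queries_per_day * output_tokens * output_price / 1_000_000
    return round((daily_input + daily_output) * days, 2)


# 100 queries/day, 2,000 input and 1,000 output tokens each.
OPUS_MONTHLY = monthly_cost(100, 2_000, 1_000)
print(OPUS_MONTHLY)  # 105.0

# Same workload at GPT-4o's quoted prices ($2.50 in, $10.00 out).
GPT4O_MONTHLY = monthly_cost(100, 2_000, 1_000, input_price=2.50, output_price=10.00)
print(GPT4O_MONTHLY)  # 45.0
```

Most of the bill comes from output tokens: at these prices, the 1,000 output tokens per query cost $2.50 a day against $1.00 a day for the 2,000 input tokens.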

Yes, Claude Opus 4.6 is a multimodal model that accepts images, PDFs, and code files alongside text prompts. You can upload screenshots for UI debugging, architectural diagrams for analysis, or scanned documents for extraction. GPT-4o also supports image inputs, so both models work for multimodal workflows.

For general conversation, writing, and basic coding, o3 is overkill. Its chain-of-thought reasoning adds latency and cost that only pays off on math-heavy, logic-intensive, or multi-step analytical problems. Stick with GPT-4o or Claude Sonnet 4.6 for everyday tasks and reserve o3 for workloads that genuinely require deep quantitative reasoning.

Rankings can shift within months. Major releases from OpenAI, Anthropic, Google, and Meta regularly reshuffle leaderboards. Track live changes via Papers with Code for academic benchmarks, the Chatbot Arena leaderboard (lmarena.ai) for conversational quality, and SWE-bench for coding performance. Subscribing to these sources gives you a more reliable picture than any static comparison article.