Grammarly AI Cloned 100+ Writers — A $5M Lawsuit and an Apology

Superhuman's CEO sat for a Decoder interview with The Verge's editor — one of the writers Grammarly's AI cloned without permission. It got tense.

What do you do when the CEO of the company that turned you into an AI puppet shows up to record your podcast? If you're Nilay Patel, editor-in-chief of The Verge, you hit record and ask the hard questions anyway.

In a remarkably tense episode of the Decoder podcast, Patel sat down with Shishir Mehrotra, CEO of Superhuman (the company formerly known as Grammarly), to talk about the AI impersonation scandal that has rocked the writing tool industry. Grammarly's Expert Review feature cloned the editorial voices of real journalists, authors, and experts without their consent — and Patel was one of the people cloned. Mehrotra still showed up for the interview.

Credit where it's due: that takes guts.

Grammarly's Expert Review was a $12-per-month add-on launched in August 2025 that promised writing feedback from AI-generated versions of real professionals. Users could upload their writing and receive editorial suggestions attributed to people like Stephen King, Kara Swisher, Neil deGrasse Tyson, and dozens of working journalists.

The catch? Not a single one of them agreed to it.

Grammarly's AI had been trained on publicly available writing from these individuals. It then produced stylistic and analytical suggestions framed as reflecting each person's editorial voice — their name attached, their credibility borrowed, their reputation monetized. This was AI impersonation dressed up as a premium feature.

"I've worked for decades honing my skills as a writer and editor, and I'm distressed to discover that a tech company is selling an imposter version of my hard-earned expertise." — Julia Angwin

Patel, along with Verge staffers Sean Hollister, Tom Warren, and David Pierce, discovered they were unwittingly "reviewing" documents they'd never seen. The list extended well beyond The Verge: Platformer's Casey Newton, WSJ's Joanna Stern, Wired's Lauren Goode, Bloomberg's Mark Gurman and Jason Schreier, The New York Times' Kashmir Hill, and The Atlantic's Kaitlyn Tiffany all appeared in the feature.

And the AI versions weren't even good at the jobs they were impersonating. According to Platformer, the AI clone of Angwin was "doling out horrible advice, like creating unwieldy sentences that made it harder to understand."

The interview had been scheduled a month earlier, long before the Expert Review controversy blew up. Patel and Mehrotra were supposed to discuss AI, platforms, and creativity in broad terms. But then Verge reporters found their own names inside the feature, and suddenly the conversation became deeply personal.

Patel admitted he was surprised Mehrotra still showed up. And to his credit, the Superhuman CEO stuck it out through what became a pointed, uncomfortable exchange. As Patel put it, "it's clear we disagree about how extractive AI feels for people."

Mehrotra apologized during the interview — and he'd already apologized publicly. But apologies are easy. The harder part is answering why it happened in the first place.

"We hear the feedback and recognize we fell short on this." — Shishir Mehrotra

Some context on Mehrotra: he became Superhuman's CEO after the company acquired Coda, the productivity tool he co-founded. Before that, he served as chief product officer at YouTube. He sits on the board of directors at Spotify. This isn't some naive first-time founder who stumbled into an ethical minefield — which makes the decision to ship Expert Review without consent even harder to justify.

The fallout moved fast. After TechCrunch and other outlets reported on the feature in early March 2026, Superhuman initially offered a bare-minimum response: an email-based opt-out. That wasn't close to enough.

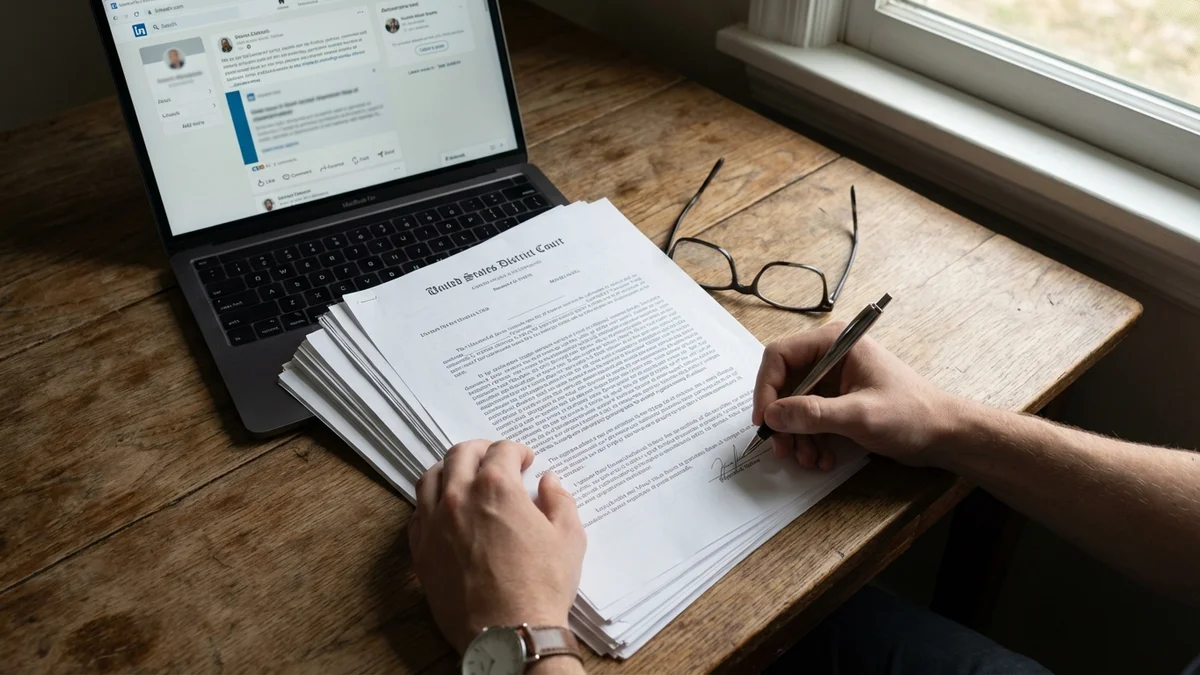

On March 11, 2026, Julia Angwin — the award-winning investigative journalist and former editor-in-chief of The Markup — filed a class action lawsuit in the Southern District of New York. The suit alleges Superhuman misappropriated the names and identities of hundreds of professionals for profit. It seeks at least $5 million in damages.

Here's the part that stings: Mehrotra posted his LinkedIn apology the same day the lawsuit dropped. But as Futurism reported, the apology made zero mention of the pending litigation. It read like a response to community feedback, not legal pressure. That's a credibility problem.

As of March 23, 2026, Expert Review has been completely disabled. Angwin's lawyer, Peter Romer-Friedman, says he's heard from 40 to 50 people who object to being featured in the tool. The class could grow significantly.

This isn't just a Grammarly story. It's a stress test for how the AI industry handles identity and consent.

We've spent years arguing about whether training AI on copyrighted text counts as fair use. But Expert Review crossed a different line entirely. It wasn't just using someone's writing to train a model; it was putting their name on AI-generated output and charging $12 a month for it. That's identity misappropriation, and it's a much cleaner legal argument than the murkier copyright debates around training data.

If you can train an AI on someone's writing and sell "their" editorial advice without asking, what's the limiting principle?

This raises uncomfortable questions for every AI company building products around persona or expertise. Could an AI company clone a therapist's approach? A financial advisor's style? A professor's teaching methods? So far, the industry has operated on an implicit assumption that publicly available content is fair game for anything. Expert Review proves that assumption has sharp limits, especially when you slap real names on AI output.

As of March 23, 2026, Superhuman says it plans to "reimagine" Expert Review to give experts "real control over how they want to be represented — or not represented at all." Whether that means a consent-based opt-in or scrapping the concept entirely remains to be seen.

Several things are in motion as of March 23, 2026. The class action lawsuit is proceeding through the Southern District of New York. Superhuman is redesigning the feature (or possibly abandoning it). And the broader AI industry is watching closely to see whether Angwin v. Superhuman becomes a precedent-setting case for AI identity rights.

Mehrotra told Patel he was sorry. But apologies don't answer the fundamental question that Expert Review forced into the open: in an age where AI can clone anyone's voice, style, and expertise — who actually owns yours? The question of how much we should trust AI companies with our data isn't going away.

Grammarly itself is still available. Only the Expert Review add-on was disabled. Grammarly's core grammar checking, tone suggestions, and writing assistance tools remain fully functional under the Superhuman brand. Your existing subscription is unaffected by the feature's removal.

The lawsuit was filed by Julia Angwin in the Southern District of New York. If your name or professional identity was used in Expert Review without your consent, you can contact the law firm PRF Law, which represents the plaintiff class. Angwin's lawyer Peter Romer-Friedman has already heard from 40 to 50 affected individuals as of March 2026.

Expert Review cost $12 per month on top of a standard Grammarly subscription. Users paid this fee believing they were getting editorial insights shaped by real human experts. Superhuman has since claimed the feature had "very little usage" during its roughly seven-month lifespan from August 2025 to March 2026.

Superhuman says it plans to "reimagine" the feature to give experts real control over their representation. No timeline has been announced. A consent-based opt-in system seems likely if the feature returns, but the ongoing $5 million class action lawsuit could complicate or delay any relaunch significantly.

Superhuman is the parent company that was formerly called Grammarly, Inc. It rebranded after acquiring the Superhuman email app and the productivity tool Coda. Grammarly is now the flagship writing product under the Superhuman umbrella, alongside Superhuman Mail and Coda. Shishir Mehrotra, formerly CEO of Coda, leads the combined company.