Google Opens Lyria 3 API: AI Music for 4 Cents a Track

Google Lyria 3 is now available to developers through the Gemini API at $0.04 per 30-second clip. Here's what you get, what's missing, and how it stacks up against Suno and Udio.

For four cents, you can now generate a 30-second AI music track through Google's API. That's cheaper than a gumball.

Google announced today that Google Lyria 3 — its newest music generation model from DeepMind — is now available in paid preview through the Gemini API and for testing in Google AI Studio. At the same time, Lyria 3 Pro is expanding to Vertex AI and additional Google products like Google Vids and ProducerAI, bringing longer tracks and enterprise-grade audio generation to professional workflows. As of March 25, 2026, this makes Google the first major cloud provider to ship a dedicated music generation API with pricing this aggressive.

The message is pretty clear: Google wants AI-generated music baked into everything you build.

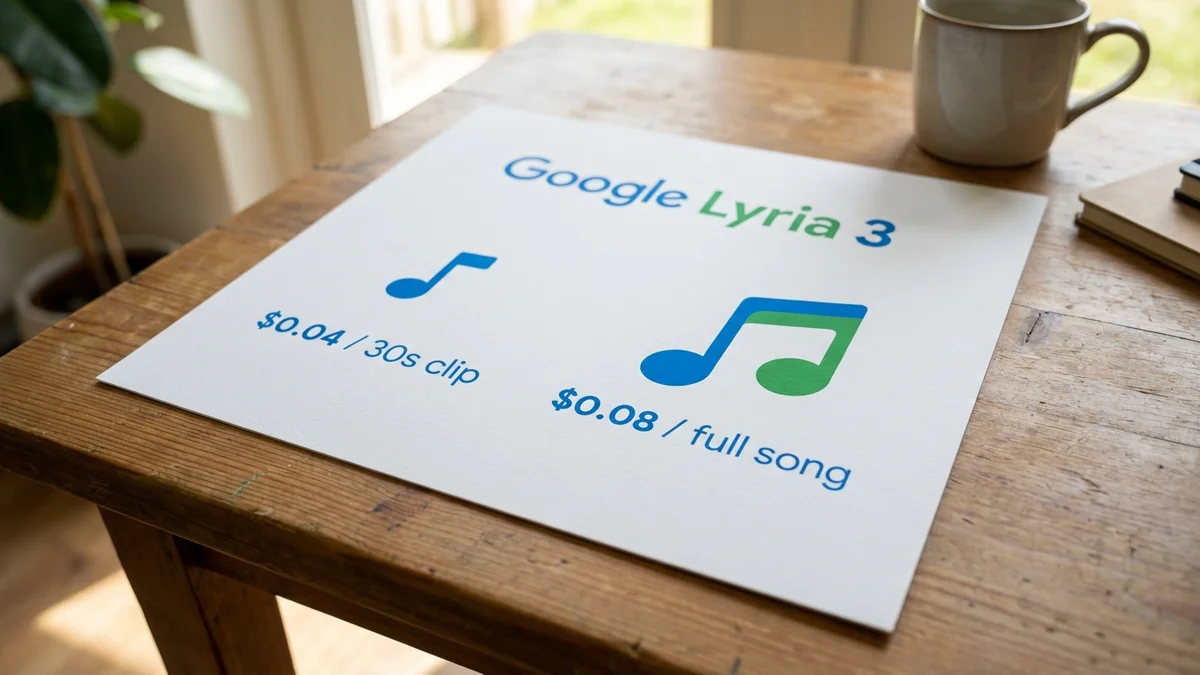

Pricing is simple: the Lyria 3 API costs $0.04 per 30-second clip and $0.08 per full song (up to roughly two minutes). There's no free tier, so you pay from the first track. Both models are currently in paid preview, which means tighter rate limits than you'd get from a stable release.

Google has released two models under the Lyria 3 family:

| Model | Model ID | Max Duration | Price | Output Format |
|---|---|---|---|---|
| Lyria 3 Clip | lyria-3-clip-preview | 30 seconds | $0.04/track | MP3 |
| Lyria 3 Pro | lyria-3-pro-preview | ~2 minutes | $0.08/track | MP3, WAV |

Both output 48 kHz stereo, which is genuine high-fidelity audio rather than the compressed-sounding stuff you'd get from earlier models. Both also come with mandatory SynthID watermarking, which we'll get to.

Lyria 2 launched in 2025, and it was... fine. Lyria 3 is a significant step up in three specific ways.

First, it generates lyrics automatically. With Lyria 2, you had to write your own. Now you describe what you want in plain language and the model handles both music and words. Second, you get much finer creative control over style, vocals, tempo, and instrumentation. Third, the musical structure is actually coherent — tracks sound like songs with proper intros, verses, choruses, and bridges.

You can prompt for specific musical structures using tags like [Verse], [Chorus], and [Bridge], or use timestamp notation like [00:00 - 00:30] to control exactly what happens when.
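If you plan to lean on timestamps, a small helper keeps the notation consistent. The sketch below only formats text. The function names are hypothetical, and putting the description on the same line right after the bracketed range is an assumption about prompt layout, not something Google's docs are quoted as requiring here.

```python
def fmt_ts(seconds: int) -> str:
    """Format whole seconds as MM:SS."""
    minutes, secs = divmod(seconds, 60)
    return f"{minutes:02d}:{secs:02d}"


def timed_prompt(sections: list[tuple[int, int, str]]) -> str:
    """Render (start, end, description) tuples as timestamped prompt lines.

    Assumed layout: "[MM:SS - MM:SS] description", one section per line.
    """
    lines = []
    for start, end, description in sections:
        if not 0 <= start < end:
            raise ValueError(f"invalid range: {start}-{end}")
        lines.append(f"[{fmt_ts(start)} - {fmt_ts(end)}] {description}")
    return "\n".join(lines)


# Hypothetical plan for a 30-second clip.
plan = [
    (0, 10, "Sparse piano intro"),
    (10, 30, "Full band enters with driving drums"),
]
print(timed_prompt(plan))
```

The range check catches reversed or negative timestamps before they reach a paid request.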

But what really sets this apart from consumer-facing tools is the multimodal input. You can feed Google Lyria 3 up to 10 images alongside a text prompt, and it will compose music that matches the visual mood. Think: an app that auto-generates soundtracks for photo slideshows, or a game engine that scores cutscenes dynamically. The image-to-music feature is something neither Suno nor Udio offers.
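To make the image input concrete, here is a minimal sketch of how a request's parts list might be assembled. It assumes Lyria 3 accepts images the way other Gemini models do over REST, as base64 `inlineData` parts next to the text part. That field layout, the `build_parts` helper, and the `MAX_IMAGES` constant are all assumptions for illustration; only the 10-image limit comes from the announcement.

```python
import base64

MAX_IMAGES = 10  # limit stated in the article


def build_parts(prompt: str, images: list[tuple[str, bytes]]) -> list[dict]:
    """Combine a text prompt with (mime_type, raw_bytes) images into request parts.

    Assumption: images travel as base64 "inlineData" parts, following the
    general Gemini REST shape. Check the Lyria 3 docs before relying on it.
    """
    if len(images) > MAX_IMAGES:
        raise ValueError(f"at most {MAX_IMAGES} images allowed, got {len(images)}")
    parts = [{"text": prompt}]
    for mime_type, raw in images:
        parts.append({
            "inlineData": {
                "mimeType": mime_type,
                "data": base64.b64encode(raw).decode("ascii"),
            }
        })
    return parts
```

Rejecting oversized batches client-side avoids paying for a request that would fail anyway.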

The Lyria 3 API follows the same patterns as the rest of the Gemini API, so if you've built with Gemini before, this will feel familiar. You authenticate with an x-goog-api-key header, hit the standard generateContent endpoint, and get back a multipart response containing both text (lyrics and structure descriptions) and base64-encoded audio.

Here's the basic request shape:

    {
      "contents": [{"parts": [{"text": "An upbeat indie rock track at 120 BPM in E major with female vocals"}]}],
      "generationConfig": {
        "responseModalities": ["AUDIO", "TEXT"]
      }
    }
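For a sense of the full round trip, here is a rough Python sketch using only the standard library. It assumes the usual Gemini REST URL pattern applies to the Lyria 3 model IDs and that audio comes back as base64 `inlineData` parts, as in other Gemini responses. The helper names `build_request` and `split_response` are hypothetical.

```python
import base64
import json
import urllib.request

# Assumption: the standard Gemini REST URL pattern also serves Lyria 3 models.
BASE_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_request(prompt: str, api_key: str,
                  model: str = "lyria-3-clip-preview") -> urllib.request.Request:
    """Build (but do not send) a generateContent request for a text prompt."""
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {"responseModalities": ["AUDIO", "TEXT"]},
    }
    return urllib.request.Request(
        f"{BASE_URL}/{model}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def split_response(response: dict) -> tuple[str, list[bytes]]:
    """Separate text parts from decoded audio parts in a parsed response.

    Assumption: audio arrives as base64 under "inlineData", mirroring the
    general Gemini response shape.
    """
    texts, clips = [], []
    for candidate in response.get("candidates", []):
        for part in candidate.get("content", {}).get("parts", []):
            if "text" in part:
                texts.append(part["text"])
            elif "inlineData" in part:
                clips.append(base64.b64decode(part["inlineData"]["data"]))
    return "\n".join(texts), clips
```

To actually send it, pass the request to `urllib.request.urlopen`, parse the body with `json.load`, and write the first entry of the returned clips to a file opened in binary mode.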

SDKs are available for Python, JavaScript, Go, Java, and C# — plus raw REST if that's your preference. Google AI Studio lets you prototype and test prompts in the browser before writing any code, which is genuinely helpful for dialing in your prompt templates.

For the best results, Google recommends packing your prompts with specifics: genre, instruments, BPM, key and scale, mood, and structure tags. Want instrumental only? Just add "Instrumental only, no vocals" to your prompt. Need lyrics in French or Japanese? As of March 2026, Lyria 3 generates lyrics in whatever language you write your prompt in — Google's docs don't enumerate a specific list, but multilingual support is built in.
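One way to keep those specifics consistent across requests is a small prompt template. The sketch below is a hypothetical helper. The field names and the line-per-attribute layout are assumptions rather than a format Google prescribes. Only the listed ingredients and the "Instrumental only, no vocals" phrase come from the guidance above.

```python
from dataclasses import dataclass, field


@dataclass
class MusicPrompt:
    """Hypothetical template bundling the recommended prompt specifics."""
    genre: str
    mood: str
    bpm: int
    key: str  # key and scale, e.g. "E major"
    instruments: list[str] = field(default_factory=list)
    sections: list[str] = field(default_factory=list)  # e.g. ["Verse", "Chorus"]
    instrumental: bool = False

    def render(self) -> str:
        """Render the fields as one attribute per line."""
        lines = [
            f"Genre: {self.genre}",
            f"Mood: {self.mood}",
            f"Tempo: {self.bpm} BPM",
            f"Key: {self.key}",
        ]
        if self.instruments:
            lines.append("Instruments: " + ", ".join(self.instruments))
        if self.instrumental:
            lines.append("Instrumental only, no vocals")
        # Structure tags go last, one per line, in playback order.
        lines.extend(f"[{name}]" for name in self.sections)
        return "\n".join(lines)


prompt = MusicPrompt(
    genre="indie rock", mood="upbeat", bpm=120, key="E major",
    instruments=["electric guitar", "drums"], sections=["Verse", "Chorus"],
)
print(prompt.render())
```

Because results vary between identical prompts anyway, the value of a template is mostly in making your inputs auditable, not in guaranteeing identical output.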

So here's the catch (and there's always a catch). These are still preview models. Single-turn generation only — you can't iteratively edit a track. And results vary between identical prompts, which makes reproducibility a real concern if you're building something deterministic.

For developers building production applications, the lack of iterative editing is the biggest limitation right now. You generate, you evaluate, you regenerate from scratch. There's no "change just the bridge" option.

Google Lyria 3 isn't entering an empty market. Suno has raised over $125 million in funding and has been the dominant AI music generator for over a year. Udio v4 has carved out its own niche with producer-level editing tools.

Here's how they compare as of March 2026:

| Feature | Lyria 3 Pro | Suno v5 | Udio v4 |
|---|---|---|---|
| Max Track Length | ~2 min | 8 min | 15 min |
| Vocal Quality | Good | Best-in-class | Very good |
| Stem Export | No | Multi-stem | Multi-stem |
| Image-to-Music | Yes (up to 10 images) | No | No |
| Developer API | Yes (Gemini API) | Limited | Limited |
| Watermarking | SynthID (mandatory) | None | None |
| Per-Track Pricing | $0.04–$0.08 | Subscription | Subscription |

Suno v5 still wins on raw audio quality and vocal realism — it consistently ranks at the top of community listening tests. Udio v4 offers the most producer-friendly workflow with section-by-section regeneration, inpainting, and stem separation.

So where does Lyria 3 actually win? API-first development and ecosystem integration. Neither Suno nor Udio offers anything close to this developer experience. If you're building an app that needs music generation as a feature (not as the product itself), Lyria 3 is the obvious pick. And at four to eight cents per track, the economics are kind of hard to argue with.

The real competition isn't Lyria vs. Suno for music creation. It's Lyria making music generation a commodity feature that any app can bolt on for pennies.

Every single track generated by Google Lyria 3 gets an imperceptible SynthID watermark woven into the audio at the point of creation. You can't strip it out, and it doesn't affect listening quality. Users can verify watermarks by uploading tracks back to Gemini.

This is Google playing it safe — and playing it smart. While Suno and Udio have both faced major copyright lawsuits from record labels (RIAA filed suits against both in June 2024), Google has largely stayed out of music copyright courtrooms. The mandatory watermark gives them a defensible position: every AI-generated track is traceable and identifiable. Safety filters also block requests that mimic specific artists' voices or attempt to reproduce copyrighted lyrics. This kind of guardrailing is becoming standard — Grammarly recently faced a $5M lawsuit over unauthorized use of writers' content for AI training.

But mandatory watermarking also means your users can't pass off Lyria-generated tracks as human-made content. Depending on your use case, that's either a feature or a limitation.

The pricing is going to turn heads. At $0.04 for a 30-second clip, you could generate 25,000 tracks for a thousand dollars. For background music in apps, games, videos, and social content, that math works really well.
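The budget math is easy to sanity-check in code. This sketch uses the preview prices quoted above and works in whole cents to avoid floating-point rounding. The function names are made up for illustration, and it ignores rate limits and any future price changes.

```python
# Preview prices from the article, in cents per generated track.
PRICE_CENTS = {
    "lyria-3-clip-preview": 4,  # 30-second clip
    "lyria-3-pro-preview": 8,   # full song, up to roughly two minutes
}


def tracks_for_budget(budget_dollars: int, model: str) -> int:
    """How many tracks a whole-dollar budget buys at the per-track price."""
    return (budget_dollars * 100) // PRICE_CENTS[model]


def cost_dollars(track_count: int, model: str) -> float:
    """Total cost in dollars for a given number of tracks."""
    return track_count * PRICE_CENTS[model] / 100


print(tracks_for_budget(1000, "lyria-3-clip-preview"))  # 25000
print(tracks_for_budget(1000, "lyria-3-pro-preview"))   # 12500
print(cost_dollars(500, "lyria-3-pro-preview"))         # 40.0
```

Remember that regenerating a track you don't like costs the same as the first attempt, so real budgets should include a retry multiplier.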

As of March 25, 2026, Lyria 3 is available in Google AI Studio for testing and through the Gemini API for paid preview. Lyria 3 Pro is in public preview on Vertex AI for enterprise customers who need on-demand audio at scale — think gaming studios, video platforms, and creative tool companies.

And honestly? The most interesting applications probably haven't been thought of yet. When you make music generation this cheap and this accessible through a standard API, you're handing developers a new building block. Game engines that compose dynamic soundtracks. Social apps that generate personalized audio. Accessibility tools that turn visual content into sound. The API is the starting gun.

Music generated through the Lyria 3 API can be used commercially, but every track carries a mandatory SynthID watermark that permanently identifies it as AI-generated. This watermark cannot be removed and is detectable by Google's verification tools. Check Google's terms of service for specific commercial use restrictions, as preview API terms may differ from general availability.

There is no free tier for the Lyria 3 API. You pay $0.04 per 30-second clip and $0.08 per full song from the first request. However, you can test prompts and hear sample outputs in Google AI Studio before committing to API integration. Lyria 3 is also available for free (with usage caps) through the consumer Gemini app if you just want to experiment.

Google offers a separate model called Lyria RealTime (experimental) through the Gemini API that supports WebSocket-based streaming for interactive, continuously steerable music generation. This is distinct from the standard Lyria 3 Clip and Pro models, which generate complete tracks in a single request. Lyria RealTime is designed for applications where users need to steer music in real time, like live performances or interactive games.

As of March 2026, Lyria 3 only outputs finished audio files: MP3 for Lyria 3 Clip and MP3 or WAV for Lyria 3 Pro. There is no MIDI export, stem separation, or multi-track output. If you need individual stems for mixing, Suno v5 and Udio v4 both offer stem export features that Lyria currently lacks.

The SynthID watermark cannot be removed. It is embedded directly into the audio signal at generation time and is designed to survive common audio transformations like compression, format conversion, and re-encoding. Google built it to be imperceptible to human listeners but reliably detectable by their verification systems. Watermarking is non-optional for all Lyria 3 API output.