Qwen3.5 vs Gemma4: 4 Models Tested for Local Coding

We break down benchmarks across all four Qwen3.5 and Gemma4 variants for local agentic coding on a 4090 — speed, code quality, VRAM, and context. One clear winner emerges.

We break down benchmarks across all four Qwen3.5 and Gemma4 variants for local agentic coding on a 4090 — speed, code quality, VRAM, and context. One clear winner emerges.

Google dropped Gemma4 on April 2nd, and the r/LocalLLaMA community had one immediate question: does it beat Qwen3.5 for local agentic coding on consumer hardware?

The short answer is no. But the longer answer — involving four models, two architectures, and some surprising tradeoffs — is a lot more interesting than a simple head-to-head. Developer Aayush Garg ran a thorough set of benchmarks comparing Qwen3.5 vs Gemma4 across both their dense and MoE variants, using real agentic coding tasks with Open Code on an RTX 4090. The results tell a clear story.

Qwen3.5-27B is the best model for local agentic coding on a 24GB GPU. It produces the cleanest code, gets complex multi-step tasks right on the first attempt, and fits comfortably in VRAM with a 130K context window. If you have an RTX 4090 or 3090 and want reliable agentic coding, this is your model.

Gemma4-31B ties on task accuracy but is crippled by a 65K context limit on the same hardware. The MoE variants from both families are roughly 3x faster — but they choke on harder tasks.

| Feature | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26B-A4B |

|---|---|---|---|---|

| Architecture | Dense | Dense | MoE (3B active) | MoE (4B active) |

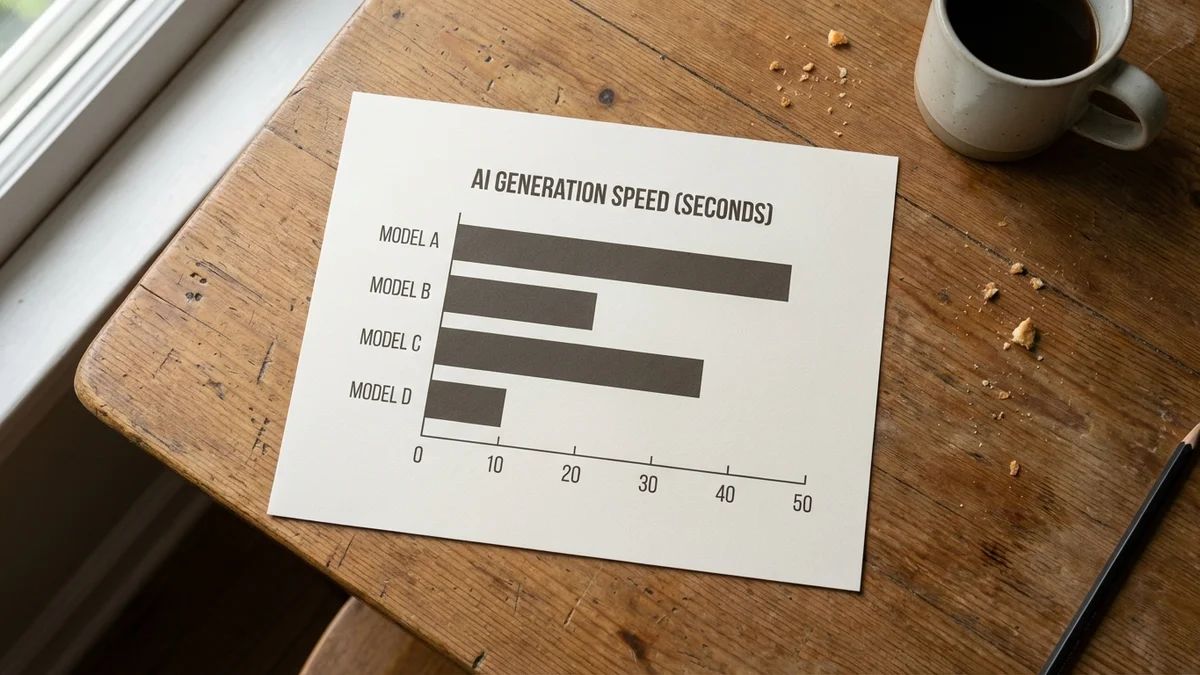

| Gen Speed | ~45 tok/s | ~38 tok/s | ~136 tok/s | ~135 tok/s |

| Task Accuracy | 1st (correct) | 1st (correct) | 2nd (retries needed) | 3rd (retries needed) |

| Code Quality | Cleanest overall | Clean but shallow | Best structure, wrong API | Weakest |

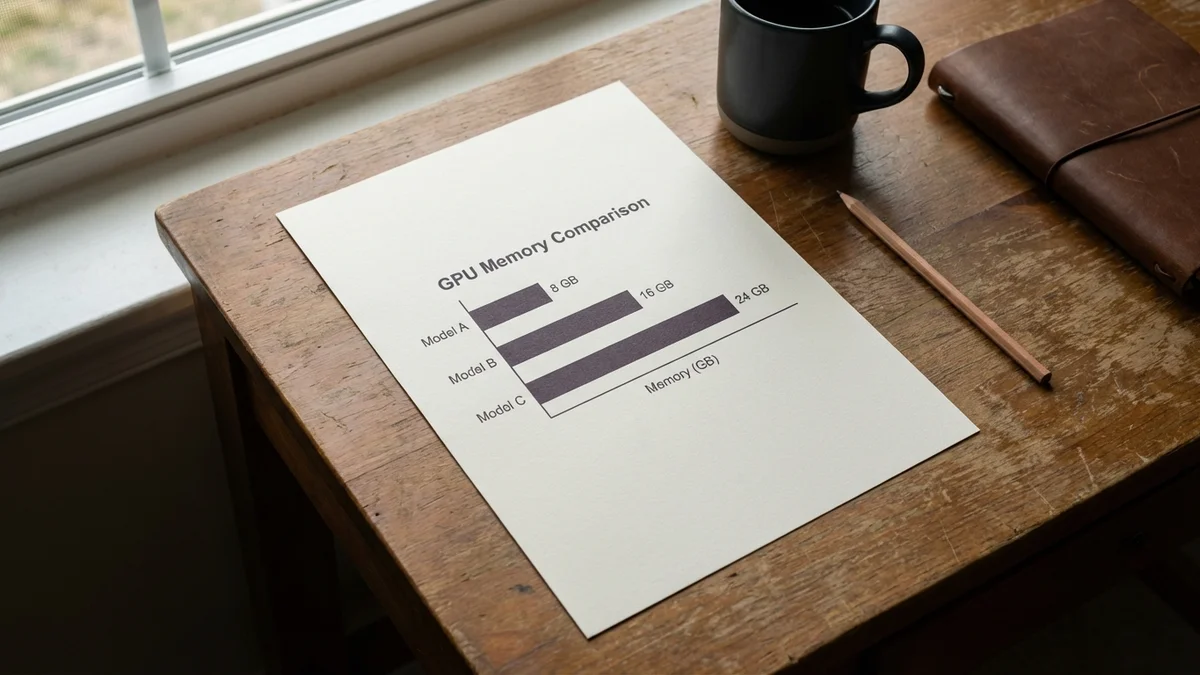

| VRAM Usage | ~21 GB | ~24 GB | ~23 GB | ~21 GB |

| Max Context (4090) | 130K | 65K | 200K | 256K |

All benchmark data comes from testing by Aayush Garg, originally shared on r/LocalLLaMA.

The speed gap is dramatic. Gemma4-26B-A4B and Qwen3.5-35B-A3B — both Mixture-of-Experts architectures — hit around 135-136 tokens per second. The dense models clock in at 38-45 tok/s.

That's a 3x speed advantage. And in agentic coding, where your model is reading files, planning multi-step changes, writing code, running tools, and iterating on failures, speed directly translates to how long you sit waiting. A model generating at 135 tok/s feels responsive. A model at 38 tok/s feels like you're watching code being typed by a careful (if talented) intern.

But speed is only useful when the output is correct.

Speed without accuracy is just fast failure. Both dense models got the complex coding task right on the first try. Neither MoE model did.

So the MoE models shine for simpler, high-volume tasks — quick refactors, straightforward feature additions, boilerplate generation. For complex multi-step workflows where getting it right the first time saves you from expensive retry loops? Dense models earn back their speed penalty and then some.

This is the section that matters most if you care about actually shipping the code your local model writes. According to the benchmarks, Qwen3.5-27B produced the cleanest output across all four models — correct API model names, proper type hints, docstrings, and pathlib usage. It's the kind of code you'd actually want to merge without heavy editing.

Gemma4-31B also passed the complex task on its first attempt, but the code was described as "clean but shallow." Functional, sure. But missing the thoughtful touches — the kind of small details that separate code-you-commit from code-you-rewrite.

And the MoE variants? A mixed bag, honestly.

Qwen3.5-35B-A3B actually had the best code structure of any model tested. Great organization, clear separation of concerns. But it hardcoded an API key directly into tests and used the wrong model name. That's the kind of mistake that passes CI and then becomes a production incident at 2 AM. Structure without correctness is just well-organized bugs.

Gemma4-26B-A4B produced the weakest code quality overall. Not broken, exactly, but clearly the least polished.

Here's a detail that should make you smile (or wince, depending on your testing philosophy): none of the four models actually followed Test-Driven Development when explicitly asked to. Every single one claimed to use red-green methodology, then promptly wrote integration tests that hit real APIs instead of writing failing unit tests first.

So if strict TDD compliance matters to your workflow, you'll need much more explicit prompting — or just accept that current local models treat TDD instructions the way most developers treat TDD instructions. Which is to say, aspirationally.

If you're running these models on your own GPU, two numbers dominate every decision: how much VRAM does it eat, and how much context can you actually use?

As of April 6, 2026, all four models technically fit on a 24GB card, but "fit" is doing different amounts of work:

This is where Gemma4-31B hits a wall. Despite matching Qwen3.5-27B on task accuracy, it can only fit a 65K context window on a 4090 while maintaining acceptable generation speed. That's a serious limitation for agentic coding, where your context fills up fast with file contents, tool call results, error messages, and conversation history.

Qwen3.5-27B gets you 130K context — double what Gemma4-31B offers. The MoE models go even higher: 200K for Qwen3.5-35B-A3B and a massive 256K for Gemma4-26B-A4B.

Gemma4-31B is like a sports car with a tiny fuel tank — fast and capable, but you're constantly pulling over to refuel.

For agentic coding specifically, context length isn't a nice-to-have. It's load-bearing infrastructure. When your agent needs to read three files, plan a multi-file refactor, and iterate on compiler errors — all within one session — 65K fills up shockingly fast.

As of April 6, 2026, both Qwen and Google are shipping models in both MoE and dense configurations. The tradeoffs are remarkably consistent across both families.

Dense models (Qwen3.5-27B, Gemma4-31B):

MoE models (Qwen3.5-35B-A3B, Gemma4-26B-A4B):

The pattern tells a consistent story. MoE architectures are like sprinters — incredibly fast over short, well-defined distances. Dense models are marathon runners — slower per step, but they handle the long, complicated routes without falling apart.

For agentic coding, "complicated routes" is basically the job description. Your model needs to understand a codebase, reason about dependencies, generate correct code across multiple files, and self-correct when things go wrong. That's dense model territory.

But if you're using a local model for simpler coding assistance — autocomplete, single-function generation, quick explanations — the MoE speed advantage is hard to ignore. A model that responds 3x faster just feels better to work with, even if it occasionally needs a nudge.

This is the default recommendation. Cleanest code, first-try accuracy on complex tasks, 21 GB VRAM, 130K context. It does everything well and nothing poorly. If you only run one local coding model on your 4090, make it this one.

On a 4090, the 65K context limit makes it hard to recommend over Qwen3.5-27B. But if you have an A6000 (48GB) or similar professional card, Gemma4-31B becomes much more interesting — same first-try accuracy with room for proper context allocation. It's a capable model held back by consumer hardware constraints.

At 136 tok/s with 200K context, this MoE model is excellent for high-throughput work where occasional retries are acceptable. Just watch the verbosity — it generated 32K tokens on a single complex task, which eats into your context budget fast. And double-check the code it writes for correctness (wrong model names, hardcoded keys).

With 256K context and 135 tok/s, this is the reach pick. But it ranked last on both task accuracy and code quality, which is a tough sell. Potentially useful for code analysis, search, or summarization tasks where you need to ingest a lot of code without generating much. For actual code generation? The others are better.

The cloud heavyweights are still ahead on raw capability — Claude Opus 4.5 hits 76.8% on SWE-bench Verified, and models like o3 reportedly score over 96% on MATH benchmarks. No local model on a single consumer GPU is touching those numbers.

But that's beside the point. Local models serve a different need entirely: privacy, zero marginal cost after hardware, offline access, and full control. And for that use case, a model like Qwen3.5-27B running on a single consumer GPU and getting complex agentic tasks right on the first try is genuinely impressive. A year ago, this level of local performance wasn't close to possible. For a closer look at how the smaller variants stack up, see our Qwen3.5-9B benchmark analysis.

The question isn't whether local models beat the cloud. It's whether they're good enough for your workflow. For a lot of developers in April 2026, Qwen3.5-27B clears that bar.

The original benchmarks used Open Code as the agentic coding frontend. Other capable options include Aider for terminal-based pair programming and Cline for VS Code users who want an autonomous agent. For inference backends, llama.cpp remains the go-to for local GPU inference, with Ollama offering a simpler setup experience. For more options to run on your own hardware, check out our guide to the best GGUF models for local use in 2026.

The RTX 4090 is still the sweet spot for this kind of work — 24 GB of VRAM is enough for all four models tested here, and the CUDA performance is excellent. An RTX 3090 gives you the same VRAM on older architecture, which works fine if you already own one.

Comparing Qwen3.5 vs Gemma4 across four model variants, the winner is clear. Qwen3.5-27B is the best local model for agentic coding on a 24GB consumer GPU as of April 6, 2026.

It's not the fastest option. It doesn't have the biggest context window. But it gets hard tasks right on the first try, writes the cleanest code of the bunch, and fits comfortably on the most common enthusiast hardware. That combination of reliability, quality, and practicality is exactly what matters for a local coding model you depend on daily.

Gemma4 is a solid release from Google — and the MoE variant's 256K context is impressive on paper. But on the metrics that actually decide whether you ship code or fight with your tools — first-try accuracy and code quality — Qwen3.5 delivers more.

If Google tightens up Gemma4-31B's VRAM efficiency or you upgrade to a 48GB card, the calculus changes. But for the typical developer with a 4090 running agentic workflows today, Qwen3.5-27B is the one to run.

Sources

Not at full precision — Qwen3.5-27B needs around 21 GB of VRAM. You'd need aggressive quantization (Q3 or lower) which significantly degrades code quality. For 16GB cards, look at smaller models like Qwen3.5-9B or consider the MoE variants with offloading, though agentic coding performance will be noticeably weaker than what's benchmarked here on 24GB hardware.

Qwen3.5-35B-A3B generated 32K tokens on a single complex task — roughly 3-4x more than typical dense model output. This matters because agentic coding tools maintain conversation context, and verbose output fills your context window faster. With a 200K max context on a 4090, you might get 5-6 complex turns before hitting limits, compared to 10+ turns with the less verbose Qwen3.5-27B at 130K context.

Yes, Gemma4 models support structured tool calling out of the box — Google designed them with agentic workflows in mind. Both the 31B dense and 26B-A4B MoE variants can handle function-call formatted prompts. The issue isn't capability but execution quality: in these benchmarks, Gemma4's tool use accuracy on complex multi-step tasks lagged behind Qwen3.5-27B, particularly for the MoE variant.

For Qwen3.5-27B and Gemma4-26B-A4B at ~21 GB, Q4_K_M quantization via llama.cpp or GGUF format hits the sweet spot — good quality retention while leaving enough VRAM headroom for context. Gemma4-31B at ~24 GB already pushes the 4090 to its limit, so Q4_K_S or even Q3 may be needed if you want context above 65K. Always benchmark your specific use case, as quantization impact varies by task type.

Likely yes, especially for context window limits. Inference backends regularly ship optimizations like FlashAttention improvements and better KV-cache management that can increase usable context at the same VRAM budget. Gemma4-31B's 65K context ceiling on a 4090 could improve with better memory management. Generation speed is less likely to change dramatically — the 3x gap between MoE and dense is architectural, not a software limitation.