Claude Sonnet 4.6 vs GPT-4o: 7 Honest Trade-Offs

Claude Sonnet 4.6 wins on coding and reasoning. GPT-4o wins on speed, latency, and price. Here is the data-backed breakdown for picking the right one in 2026.

Pick the wrong model and you either burn cash or ship slop. Those are the actual stakes of the Claude Sonnet 4.6 vs GPT-4o question, and the answer isn't what most listicles will tell you.

The short version: Claude Sonnet 4.6 is the smarter model on coding and long-context reasoning, while GPT-4o is the faster, cheaper workhorse for chat, classification, and high-volume pipelines. Neither is universally "better." Anyone selling you that line is selling you something.

Let's break this down with actual numbers, real pricing, and an honest take on where each one falls apart.

If you're shipping a coding agent, an IDE plugin, or anything that touches a 100k-token codebase, Claude Sonnet 4.6 is the right call. Its SWE-bench Verified score of 80.2% (self-reported by Anthropic) is far ahead of GPT-4o's 33.2%, and it handles long files without the drop in recall that GPT-4o shows on long inputs.

If you're running a customer support bot, doing classification at scale, or building anything where p50 latency matters more than the last 3% of quality, GPT-4o still earns its keep. It's roughly 1.5x faster on streaming tokens (about 90-110 versus 55-75 output tokens per second) and about 17% cheaper on input than Sonnet 4.6 ($2.50 versus $3 per million tokens).

And if you're doing pure chat where users just want a friendly, capable assistant? Honestly, flip a coin. The LMSYS Chatbot Arena puts them within striking distance of each other for general chat, and most users can't tell them apart in blind tests.

| Spec | Claude Sonnet 4.6 | GPT-4o |

|---|---|---|

| Context window | 200,000 tokens (1M in API beta) | 128,000 tokens |

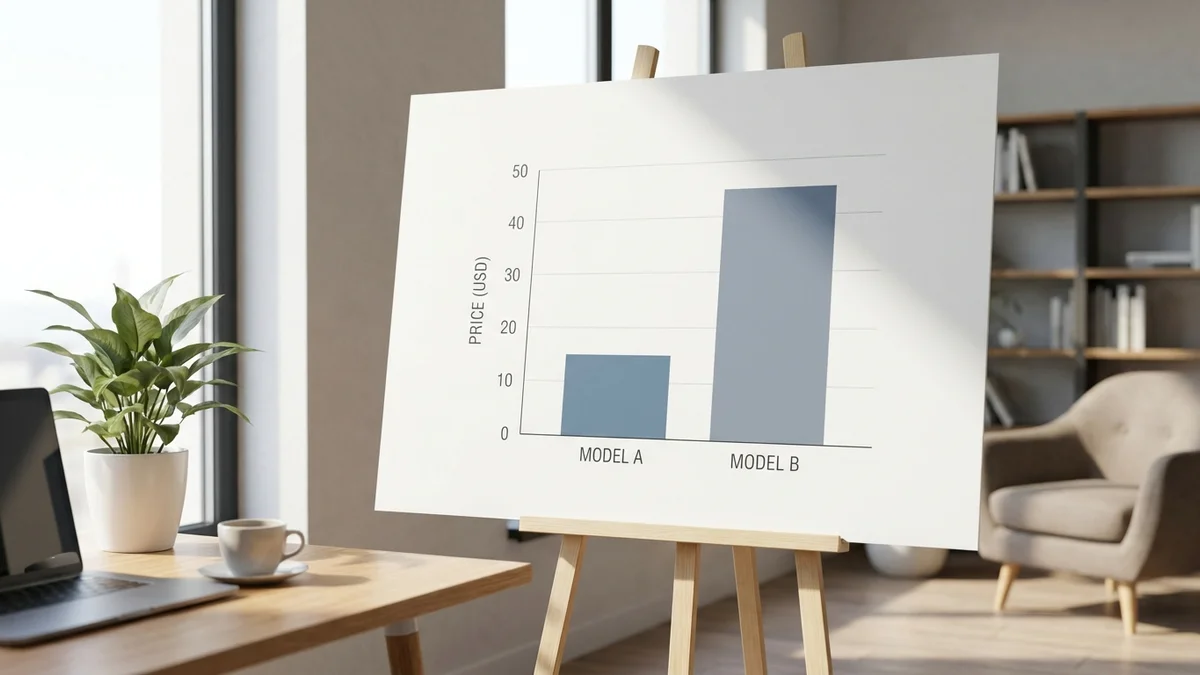

| Input price | $3 / M tokens | $2.50 / M tokens |

| Output price | $15 / M tokens | $10 / M tokens |

| SWE-bench Verified | 80.2% (Anthropic self-reported) | 33.2% (per OpenAI; GPT-4.1: 54.6%) |

| Vision input | Yes | Yes (native multimodal) |

| Audio input | No | Yes (native) |

| Tool use / function calling | Excellent | Very good |

A few things jump out. GPT-4o tends to feel more polished in casual chat, but Sonnet 4.6 is the one developers reach for when actual code has to compile.

This is the cleanest win in the entire comparison. GPT-4o was engineered as OpenAI's omni-modal speed-tier model, and it shows. Independent measurements from third-party providers like Artificial Analysis consistently put GPT-4o at roughly 90-110 output tokens per second, while Claude Sonnet 4.6 lands closer to 55-75 tokens per second depending on prompt complexity.

For a 2,000-token completion at the slow end of each range (90 versus 55 tokens per second), that's the difference between a 22-second wait and a 36-second wait. Not a huge gap on a single request. Pretty massive when you're running 50,000 of them a day.
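
The arithmetic behind those wait times is just completion length divided by throughput. A minimal sketch, assuming the slow end of each throughput range quoted above (90 and 55 tokens per second) and ignoring time-to-first-token; the function name is illustrative:

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a completion, ignoring time-to-first-token."""
    return output_tokens / tokens_per_second


# Slow end of each throughput range quoted above.
gpt4o_s = generation_seconds(2_000, 90)   # about 22 s
sonnet_s = generation_seconds(2_000, 55)  # about 36 s

# Extra cumulative streaming time per day at 50,000 requests, in hours.
extra_hours_per_day = (sonnet_s - gpt4o_s) * 50_000 / 3600

print(f"GPT-4o: {gpt4o_s:.0f}s, Sonnet 4.6: {sonnet_s:.0f}s")
print(f"Extra streaming time per day: {extra_hours_per_day:.0f} hours")
```

Under these assumptions the gap adds up to roughly 196 hours of cumulative streaming time per day, spread across however many requests run concurrently.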

GPT-4o also wins on time-to-first-token, which is the metric that actually matters for streaming UX. Users perceive responsiveness from when characters first appear, not when the response finishes. GPT-4o typically starts streaming in 400-600ms; Sonnet 4.6 takes 700-1000ms (and sometimes longer on complex prompts).
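
Time-to-first-token is easy to measure yourself rather than take on trust. The sketch below times any iterable of text chunks; `fake_stream` is a stand-in so the example runs offline, and adapting a real client's streaming response to this shape is left as an assumption.

```python
import time
from typing import Iterable, Iterator


def measure_stream(chunks: Iterable[str]) -> dict:
    """Consume a stream of text chunks and record timing.

    `chunks` is any iterable that yields text as it arrives.
    """
    start = time.perf_counter()
    first_chunk_at = None
    count = 0
    for _ in chunks:
        if first_chunk_at is None:
            first_chunk_at = time.perf_counter()
        count += 1
    end = time.perf_counter()
    return {
        "ttft_s": None if first_chunk_at is None else first_chunk_at - start,
        "total_s": end - start,
        "chunks": count,
    }


def fake_stream(delay_s: float, n: int) -> Iterator[str]:
    """Stand-in for a model stream: waits, then yields n chunks."""
    time.sleep(delay_s)
    for i in range(n):
        yield f"tok{i} "


stats = measure_stream(fake_stream(0.05, 10))
print(stats)
```

Run it against both providers from the same region and at the same time of day; TTFT varies enough with load that a single sample says little.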

So if your product is a chat interface where users sit and watch tokens render, GPT-4o feels noticeably snappier. That's real.

But speed only matters if the output is correct. And on the benchmarks that map to real engineering work, Sonnet 4.6 pulls ahead.

This is the headline difference. Per Anthropic's own benchmarks, Claude Sonnet 4.6 reports 80.2% on SWE-bench Verified (10-trial average, with a prompt modification), the benchmark that measures whether a model can actually resolve real GitHub issues end-to-end. GPT-4.1 (the GPT-4o successor in OpenAI's lineup) scores 54.6% per OpenAI. GPT-4o itself is at 33.2% on the same benchmark, meaningfully behind both. Note that Sonnet 4.6 has not yet been independently scored on the public SWE-bench leaderboard as of this writing.

HumanEval, the older small-function benchmark, is saturated and doesn't predict performance on real codebases; SWE-bench does. And if you have used both models in Cursor or Claude Code, the gap is obvious within an hour.

Sonnet 4.6 holds an edge on long-context reasoning tasks. The 200k context window isn't just bigger; it's more usable. Anthropic reports strong needle-in-a-haystack retrieval deep into the context window, while GPT-4o tends to degrade well short of its 128k limit in community evals.

GPT-4o is, frankly, the more pleasant chat partner for many casual users. Its prose feels looser and more natural, while Sonnet 4.6 can come across as more formal (some users like that, some don't).

List prices are deceptive. Let's run the actual math for a typical workload.

| Workload | Claude Sonnet 4.6 | GPT-4o |
|---|---|---|
| 1M input tokens | $3.00 | $2.50 |
| 1M output tokens | $15.00 | $10.00 |
| Typical 70/30 mix (1M total) | $6.60 | $4.75 |
| Heavy output (50/50) | $9.00 | $6.25 |
| With prompt caching (Anthropic 90% discount on cache reads) | ~$1.20 effective | $1.25 effective (50% discount) |
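
The two mix rows can be reproduced from the list prices, which makes it easy to plug in your own input/output split. A minimal sketch; the dictionary keys are labels for this example, not API model IDs:

```python
# List prices in USD per 1M tokens, taken from the table above.
PRICES = {
    "claude-sonnet-4.6": {"input": 3.00, "output": 15.00},
    "gpt-4o": {"input": 2.50, "output": 10.00},
}


def blended_cost(model: str, input_share: float, total_millions: float = 1.0) -> float:
    """Cost in USD for a workload split between input and output tokens."""
    p = PRICES[model]
    output_share = 1.0 - input_share
    return total_millions * (input_share * p["input"] + output_share * p["output"])


for share in (0.7, 0.5):
    sonnet = blended_cost("claude-sonnet-4.6", share)
    gpt4o = blended_cost("gpt-4o", share)
    saving = 1 - gpt4o / sonnet
    print(f"{share:.0%} input: Sonnet ${sonnet:.2f}, GPT-4o ${gpt4o:.2f}, GPT-4o saves {saving:.0%}")
```

Real workloads are often far more input-heavy than 70/30 (long system prompts, retrieved documents), which shrinks the gap toward the input-price difference.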

GPT-4o is straightforwardly cheaper at list price, roughly 28-30% less for a typical mix. But Anthropic's prompt caching is more aggressive: cache reads are 90% off versus OpenAI's 50% off. If you're running an agentic workflow that re-sends the same system prompt and tool definitions on every turn, Sonnet 4.6 with caching can actually be cheaper than GPT-4o without it.
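
How much caching helps depends on the share of input tokens that are actually served from cache. The sketch below blends cached and uncached input prices for a given hit rate and solves for the break-even point. It is a simplification under stated assumptions: it covers input tokens only, treats the hit rate as a free parameter, and ignores any surcharge a provider applies to cache writes.

```python
def effective_input_price(base: float, cache_discount: float, hit_rate: float) -> float:
    """Blended USD price per 1M input tokens when `hit_rate` of them are cache reads."""
    cached_price = base * (1.0 - cache_discount)
    return hit_rate * cached_price + (1.0 - hit_rate) * base


def break_even_hit_rate(base: float, cache_discount: float, target: float) -> float:
    """Hit rate at which the blended price equals `target`."""
    return (base - target) / (base * cache_discount)


# Sonnet 4.6: $3.00 base, 90% off cache reads. GPT-4o without caching: $2.50.
threshold = break_even_hit_rate(3.00, 0.90, 2.50)
print(f"Sonnet input beats uncached GPT-4o above a {threshold:.1%} hit rate")

for hit_rate in (0.25, 0.50, 0.90):
    sonnet = effective_input_price(3.00, 0.90, hit_rate)
    gpt4o_cached = effective_input_price(2.50, 0.50, hit_rate)
    print(f"hit rate {hit_rate:.0%}: Sonnet ${sonnet:.2f}, GPT-4o with caching ${gpt4o_cached:.2f}")
```

On input tokens alone, the break-even against uncached GPT-4o sits at about an 18.5% hit rate. Output tokens are billed at full price either way, so the total bill still depends on the input/output mix.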

One more wrinkle. Sonnet 4.6 produces fewer tokens for the same task on average, because Anthropic's models tend to be less verbose by default. So your output bill on Sonnet can be 10-15% lower than the per-token math suggests. Not a huge deal but worth knowing.

GPT-4o's 128k context is fine for most chat. It's not fine for what developers actually do with frontier models in 2026: dump entire codebases, paste in 50-page legal docs, or feed the model a long meeting transcript.

Sonnet 4.6's 200k window is 56% larger, and crucially, it stays coherent at the high end. Anthropic publishes long-context evals showing strong retrieval deep into the window. GPT-4o's effective context (where it actually uses what you give it) is closer to 80-100k in practice, based on community benchmarks like RULER.
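
A quick pre-flight check tells you whether a document will fit before you send it. This is a rough sketch: the 4-characters-per-token ratio is a common rule of thumb for English text, not a tokenizer, and the reserved output budget is an arbitrary illustrative value. It checks the nominal window only and says nothing about effective recall within it.

```python
CONTEXT_WINDOWS = {
    "claude-sonnet-4.6": 200_000,
    "gpt-4o": 128_000,
}

# Assumption: roughly 4 characters per token for English text.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Crude token estimate; use the provider's tokenizer for real budgets."""
    return len(text) // CHARS_PER_TOKEN + 1


def fits(model: str, prompt: str, reserved_output_tokens: int = 4_000) -> bool:
    """True if the prompt plus reserved output room fits the model's window."""
    return estimate_tokens(prompt) + reserved_output_tokens <= CONTEXT_WINDOWS[model]


document = "x" * 600_000  # about 150k tokens under the 4-chars assumption
for model in CONTEXT_WINDOWS:
    print(model, "fits" if fits(model, document) else "needs chunking")
```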

If you're doing RAG over long documents, this isn't a small thing. It's the entire ballgame.

Both models do function calling well. Both support parallel tool calls. Both work with structured outputs.

But Sonnet 4.6 is noticeably better at multi-step agent loops. It's more likely to recover from a failed tool call, more willing to ask clarifying questions, and significantly less prone to hallucinating tool names or argument formats. This is the unsexy reason so many developers run agent tools like Cline, Aider, and Claude Code on Anthropic models.

GPT-4o's function calling is fine for simple workflows: classify this, call this API, return the result. It starts to wobble around 5-7 tool calls deep into an agent loop, where Sonnet 4.6 will keep going for 20+ steps without losing the plot.
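
Whichever model you pick, the loop around it should assume tool calls will sometimes be wrong. The sketch below shows one defensive pattern: validate the tool name and arguments, hand errors back to the model as text instead of crashing, and cap the number of steps. Everything here is hypothetical scaffolding (the registry, `next_action`, and the scripted fake model); a real agent would replace `next_action` with an API call.

```python
from typing import Callable

# Hypothetical tool registry: name -> (function, required argument names).
TOOLS: dict[str, tuple[Callable[..., str], set[str]]] = {
    "read_file": (lambda path: f"<contents of {path}>", {"path"}),
    "run_tests": (lambda target: f"tests passed for {target}", {"target"}),
}


def execute_tool_call(name: str, args: dict) -> str:
    """Run a tool call, returning an error string instead of raising."""
    if name not in TOOLS:
        return f"error: unknown tool '{name}'; available: {sorted(TOOLS)}"
    func, required = TOOLS[name]
    if set(args) != required:
        return f"error: '{name}' expects arguments {sorted(required)}"
    try:
        return func(**args)
    except Exception as exc:  # surface tool failures to the model
        return f"error: {exc}"


def run_agent(next_action: Callable[[list[str]], dict], max_steps: int = 20) -> list[str]:
    """Loop until the model returns a final answer or the step cap is hit.

    `next_action` stands in for a model call: it sees the transcript so far
    and returns {"tool": ..., "args": ...} or {"final": ...}.
    """
    transcript: list[str] = []
    for _ in range(max_steps):
        action = next_action(transcript)
        if "final" in action:
            transcript.append(f"final: {action['final']}")
            return transcript
        transcript.append(execute_tool_call(action["tool"], action.get("args", {})))
    transcript.append("stopped: step limit reached")
    return transcript


def scripted_model(transcript: list[str]) -> dict:
    """Fake model: hallucinates a tool name once, then recovers."""
    if not transcript:
        return {"tool": "open_file", "args": {"path": "app.py"}}
    if transcript[-1].startswith("error"):
        return {"tool": "read_file", "args": {"path": "app.py"}}
    return {"final": "done"}


for line in run_agent(scripted_model):
    print(line)
```

Counting how often each model triggers the error branches on your own tasks is a cheap way to test the reliability claims above.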

GPT-4o was built natively multimodal. It accepts text, images, audio, and (in some endpoints) video, all through a single model with a unified embedding space. That gives it real advantages for audio understanding, real-time voice chat, and any application where speech is the primary interface.

Claude Sonnet 4.6 handles text and images but not audio natively. If you're building a voice agent or a transcription-aware app, GPT-4o is the obvious pick (or you wire up a separate STT layer in front of Sonnet, which works fine but adds latency).

For pure vision tasks (screenshots, charts, document OCR), the two models are roughly comparable, with Sonnet 4.6 edging slightly ahead on detailed chart reading and GPT-4o slightly ahead on natural photos.

The honest truth is that for 60% of LLM use cases in 2026, you can throw a dart at either model and ship something that works. The 40% where it matters is where this comparison earns its keep.

GPT-4o is, candidly, a 2024-vintage model. OpenAI has shipped GPT-4.1 and the o-series reasoning models since then, and any honest comparison should note that. If you're choosing today between Sonnet 4.6 and the broader OpenAI lineup, GPT-4.1 is closer in capability on coding (54.6% on SWE-bench Verified, still well behind Sonnet 4.6's self-reported 80.2%) and o3 dominates on math and hard reasoning.

But GPT-4o is still the OpenAI default for chat, still the model behind most ChatGPT free-tier traffic, and still the workhorse in countless production apps. So the comparison is fair, just incomplete without that footnote.

For the most current model lineup, check Anthropic's pricing page and OpenAI's models page directly.

Claude Sonnet 4.6 is the better model. GPT-4o is the faster, cheaper one. That framing covers 90% of the decision.

If your product cares about output quality, agentic reliability, long context, or coding accuracy, the per-token premium for Sonnet 4.6 pays for itself within a week of shipping. The reduction in retry loops, hallucinations, and "why is the model ignoring my instructions" tickets is real and measurable.

If your product is high-volume, latency-sensitive, or audio-native, GPT-4o is still the move. The price gap matters at scale, the speed gap matters in chat UX, and the multimodal capabilities are genuinely ahead.

And if you're still on the fence? Run both for a week through OpenRouter on your actual workload before committing. Vibes-based model selection is how teams end up with the wrong default for 18 months.
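
A side-by-side trial does not need much tooling. The sketch below is one possible harness: it takes a `complete(model, prompt)` callable (which you would wire to OpenRouter or any other gateway) and a `score(prompt, output)` function that encodes what "good" means for your workload. Both are assumptions supplied by the caller; the stubs only exist so the example runs offline.

```python
import statistics
import time
from typing import Callable


def compare_models(
    complete: Callable[[str, str], str],
    models: list[str],
    prompts: list[str],
    score: Callable[[str, str], float],
) -> dict[str, dict[str, float]]:
    """Run every prompt through every model; report mean score and median latency."""
    report = {}
    for model in models:
        latencies, scores = [], []
        for prompt in prompts:
            start = time.perf_counter()
            output = complete(model, prompt)
            latencies.append(time.perf_counter() - start)
            scores.append(score(prompt, output))
        report[model] = {
            "mean_score": statistics.mean(scores),
            "median_latency_s": statistics.median(latencies),
        }
    return report


# Stubs so the sketch runs offline; replace with a real client and real prompts.
def fake_complete(model: str, prompt: str) -> str:
    return prompt.upper() if model == "model-a" else prompt


def exact_upper(prompt: str, output: str) -> float:
    return 1.0 if output == prompt.upper() else 0.0


report = compare_models(fake_complete, ["model-a", "model-b"], ["hello", "world"], exact_upper)
print(report)
```

Use prompts sampled from production traffic rather than hand-written ones, and log cost per request alongside score and latency.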

Is Claude Sonnet 4.6 worth the higher price? For coding, agents, and long-context work, yes. At list price Sonnet costs 20% more on input and 50% more on output (about 39% more on a 70/30 mix), but its prompt caching gives 90% off cache reads (versus OpenAI's 50%), which can make Sonnet effectively cheaper on workloads that re-send system prompts. For high-volume classification or chat, GPT-4o's lower list price wins.

Can you use both models through one API? Yes, through aggregators and abstraction layers like OpenRouter, LiteLLM, or Vercel's AI SDK, all of which expose both models behind a unified interface. This is genuinely the right setup if you want to A/B test or fall back between providers. Native APIs (Anthropic Messages API, OpenAI Chat Completions) have meaningfully different request shapes.
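
The difference in request shape is easiest to see side by side. A minimal sketch of the two JSON bodies, based on the publicly documented fields; the helper names are illustrative, and the Sonnet model ID is a placeholder because the exact identifier is not given here. Check each provider's current API reference before relying on field names.

```python
def to_openai_chat(model: str, system: str, user: str, max_tokens: int) -> dict:
    """Chat Completions body: the system prompt is a message with role 'system'."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
    }


def to_anthropic_messages(model: str, system: str, user: str, max_tokens: int) -> dict:
    """Messages API body: the system prompt is a top-level field,
    and max_tokens is required."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": system,
        "messages": [{"role": "user", "content": user}],
    }


system_prompt = "You are a terse assistant."
question = "Summarize this ticket."

openai_body = to_openai_chat("gpt-4o", system_prompt, question, 256)
# Placeholder ID: substitute the real Sonnet 4.6 identifier from Anthropic's docs.
anthropic_body = to_anthropic_messages("<sonnet-4.6-model-id>", system_prompt, question, 256)

print(openai_body)
print(anthropic_body)
```

The response shapes differ too, which is most of what a unified gateway or SDK normalizes for you.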

GPT-4o receives security patches and minor improvements but is no longer OpenAI's flagship model. GPT-4.1 and the o-series (o1, o3) are where active development goes. If you are starting a new project today and locked into OpenAI, GPT-4.1 is a closer apples-to-apples competitor to Sonnet 4.6 than GPT-4o.

GPT-4o has a slight edge in low-resource languages and audio for non-English speech, thanks to its native multimodal training. Claude Sonnet 4.6 is competitive in major languages (Spanish, French, German, Mandarin, Japanese) and often better at preserving formatting in translations. For tier-2 languages like Vietnamese or Tagalog, GPT-4o is generally more reliable.

Anthropic's tier system scales with usage history and typically requires 7-14 days at each tier before promotion. OpenAI tends to scale faster but with stricter org-level monitoring. For production workloads, both providers offer enterprise plans with dedicated capacity, and serious teams typically run multi-provider with automatic failover via OpenRouter or a custom router.
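
A custom failover router can be very small. The sketch below tries providers in order and returns the first successful result; the provider callables are stubs standing in for real clients, and catching every exception is a simplification that a production router would narrow to rate-limit and timeout errors.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has errored."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, output) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # simplification: narrow this in production
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stubs standing in for real clients.
def rate_limited(prompt: str) -> str:
    raise RuntimeError("429 rate limit")


def healthy(prompt: str) -> str:
    return f"echo: {prompt}"


used, output = complete_with_failover(
    "ping", [("primary", rate_limited), ("fallback", healthy)]
)
print(used, output)
```

Because the two models behave differently, failover changes output quality as well as availability, so it is worth logging which provider served each request.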