Ollama vs LM Studio vs llama.cpp: 5 Speed Tests Ranked

llama.cpp beats Ollama by 8–15% in raw token generation, but speed isn't everything. Here's how all three local LLM runners compare across the metrics that actually matter.

Here's something most local LLM guides won't tell you upfront: for GGUF text models, Ollama, LM Studio, and llama.cpp all share the same inference foundation. When you run a quantized Llama 3.1 or Mistral through any of the three, the tokens flow through llama.cpp's ggml backend. So the real question isn't which engine is fastest — it's how much speed each wrapper leaves on the table.

Based on community benchmarks and architectural analysis, the differences are measurable. And for some use cases, they genuinely matter.

The headline result: llama.cpp raw is the fastest option for pure token generation, beating Ollama by 8–15% and LM Studio by 2–5% in most GPU-accelerated tests. But speed isn't the whole story — Ollama's daemon architecture gives it the best time-to-first-token for repeated queries, and LM Studio offers the best experience for model experimentation.

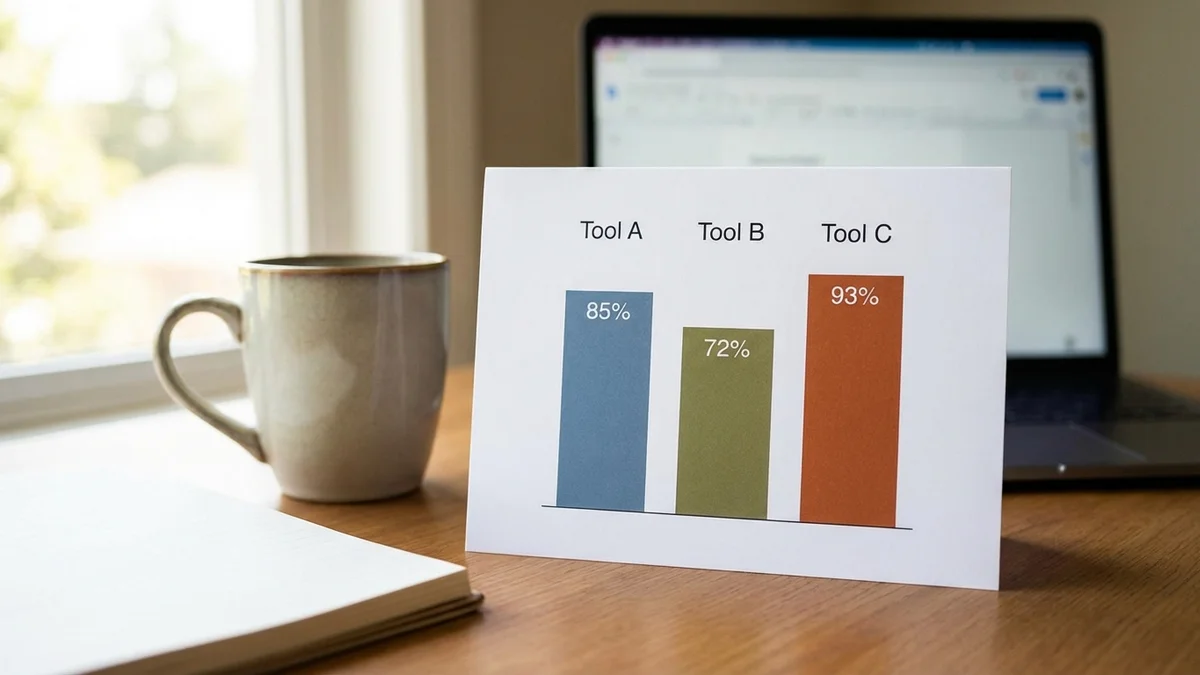

The full comparison across every metric that counts:

| Metric | llama.cpp | Ollama | LM Studio |
|---|---|---|---|
| Generation Speed (GPU) | Fastest | 8–15% slower | 2–5% slower |
| Prompt Processing | Fastest | 5–10% slower | 3–7% slower |
| Time-to-First-Token (warm) | Manual | Fastest (daemon) | Fast |
| Memory Overhead | Minimal (~75 MB) | ~200–400 MB | ~300–500 MB |
| Setup Complexity | Highest | Lowest | Low |

Understanding the speed gaps requires looking at what's under the hood.

llama.cpp is the raw inference engine built by Georgi Gerganov. It's a C/C++ library that loads GGUF model files and runs inference through optimized kernels for CPU (AVX2, ARM NEON) and GPU (CUDA, Metal, Vulkan, ROCm). When you run llama-cli or llama-server directly, you're getting as close to the metal as possible without writing custom CUDA kernels.

Ollama wraps llama.cpp in a Go-based server with a REST API. It manages model downloads, handles GGUF file storage, and runs a background daemon that keeps models loaded in memory. The Go HTTP layer adds overhead per request, and Ollama's default inference parameters don't always match llama.cpp's most aggressive settings.

LM Studio packages llama.cpp into a desktop app with a chat interface and a local API server. On Apple Silicon Macs, it also offers Apple's MLX runtime as an alternative to llama.cpp for compatible models. It's closer to llama.cpp in raw performance because its inference wrapper is thinner — but the desktop shell does consume additional system RAM.

As of April 8, 2026, all three runners support NVIDIA CUDA, Apple Metal, and AMD ROCm for GPU acceleration.

When reading the same GGUF file, all three tools run the same quantization math through the same underlying kernels. The speed differences come from wrapper overhead and default configurations.

Community benchmarks — the data we're drawing from here — follow a consistent approach: run the same GGUF model file across all three runners on identical hardware, measure tokens per second for both prompt processing and generation, and average across multiple runs to smooth out variance.
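The averaging step in that methodology can be sketched in a few lines of Python. The run data below is made up for illustration, and `summarize_runs` is a hypothetical helper rather than part of any of the three tools.

```python
from statistics import mean, stdev


def tokens_per_second(tokens: int, seconds: float) -> float:
    """Throughput for a single benchmark run."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return tokens / seconds


def summarize_runs(runs: list[tuple[int, float]]) -> dict[str, float]:
    """Average tok/s across runs, plus the spread so noisy results stand out."""
    rates = [tokens_per_second(tokens, seconds) for tokens, seconds in runs]
    return {
        "mean_tok_s": mean(rates),
        "stdev_tok_s": stdev(rates) if len(rates) > 1 else 0.0,
    }


# Illustrative numbers only: (tokens generated, wall-clock seconds) per run.
example_runs = [(512, 4.0), (480, 4.0), (496, 4.0)]
summary = summarize_runs(example_runs)
print(summary)
```

Reporting the standard deviation alongside the mean helps judge whether a gap of a few percent between runners is larger than the run-to-run noise.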

The standard test models include Llama 3.1 8B and Llama 3.1 70B in Q4_K_M quantization (the sweet spot between quality and speed). If you need model recommendations, check our list of the best GGUF models to run locally. Llama 3.3 and early Llama 4 quantizations are now appearing in community tests too, though 3.1 remains the most widely benchmarked baseline because virtually everyone has a Q4_K_M copy sitting on their drive.

Hardware varies wildly across community tests, but the relative performance between runners stays remarkably stable. Whether you're running an RTX 3060 or a 4090, llama.cpp raw tends to win by similar percentages. That consistency is what makes percentage comparisons more useful than raw tok/s figures tied to specific GPUs.

Token generation speed is the metric everyone fixates on — how fast text appears on screen after the prompt is processed.

On NVIDIA GPUs with full CUDA layer offloading, the gaps hold consistent across model sizes:

| Model (Q4_K_M) | llama.cpp | Ollama | LM Studio |
|---|---|---|---|
| Llama 3.1 8B | Baseline (100%) | 88–92% | 96–98% |
| Llama 3.1 70B | Baseline (100%) | 85–90% | 95–97% |
| Mistral 7B | Baseline (100%) | 89–93% | 96–98% |
| Qwen2.5 32B | Baseline (100%) | 87–91% | 94–97% |

The pattern is obvious. llama.cpp wins every round. LM Studio stays close because its inference path has minimal wrapping. Ollama's Go HTTP server and default parameter choices create the widest gap.

But on an RTX 4090, that 10% difference on an 8B model is the gap between roughly 120 tok/s and 108 tok/s. You literally can't read that fast. Both feel instant. The penalty only stings with larger models on constrained hardware, or when you're serving dozens of concurrent requests.
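To put the 120 vs 108 tok/s gap in wall-clock terms, here is a small worked calculation. The 500-token reply length is an assumption chosen for illustration.

```python
def seconds_to_generate(n_tokens: int, tok_per_s: float) -> float:
    """Time to stream n_tokens at a steady generation rate."""
    return n_tokens / tok_per_s


reply_tokens = 500  # assumed reply length, not a figure from the benchmarks
fast = seconds_to_generate(reply_tokens, 120.0)
slow = seconds_to_generate(reply_tokens, 108.0)
extra = slow - fast

print(f"{fast:.2f}s vs {slow:.2f}s, difference {extra:.2f}s")
```

Under that assumption the slower runner finishes less than half a second later, which is why the gap is hard to notice in interactive chat.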

The relative gaps shrink when running purely on CPU, because the bottleneck shifts to memory bandwidth and SIMD operations:

| Model (Q4_K_M) | llama.cpp | Ollama | LM Studio |
|---|---|---|---|
| Llama 3.1 8B | Baseline (100%) | 92–95% | 97–99% |
| Mistral 7B | Baseline (100%) | 93–96% | 97–99% |

When your CPU is the bottleneck, the HTTP server overhead matters less proportionally. The inference math itself takes so much longer that the wrapper tax turns into noise.

Prompt processing (prompt eval) determines how fast the runner chews through your input before generating the first output token. This matters a lot for long context windows and RAG applications where you're stuffing thousands of tokens into each request.

llama.cpp leads here too, but the margins are tighter. Prompt processing is more compute-bound and less sensitive to server overhead. Ollama typically runs 5–10% behind llama.cpp, with LM Studio 3–7% behind.

For RAG workloads stuffing 4,000+ tokens of context into every query, that 5–10% prompt processing gap adds up across hundreds of daily requests. Shave 50ms per query and you've saved real time at scale.

VRAM usage for model weights is identical across all three runners: a Q4_K_M Llama 3.1 8B occupies roughly 4.5 GB regardless. System RAM overhead is where they differ. llama.cpp adds only about 75 MB, Ollama roughly 200–400 MB, and LM Studio roughly 300–500 MB.

Ollama's latest releases have tightened up memory management considerably compared to earlier versions that were noticeably hungrier.

For VRAM specifically: a Q4_K_M 70B model needs roughly 40 GB. That means dual RTX 3090s (48 GB total), or a single 24 GB RTX 4090 with part of the model offloaded to CPU RAM. None of the three runners change this fundamental math. VRAM is VRAM.
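The VRAM arithmetic can be sketched as parameters times bits per weight. The 4.5 bits-per-weight value is an assumption chosen because it reproduces the rough 4.5 GB and 40 GB figures quoted here; real Q4_K_M files often come out somewhat larger, and the estimate ignores the KV cache and runtime buffers.

```python
def weight_size_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def fits_fully(weights_gb: float, gpu_vram_gb: list[float], reserve_gb: float = 0.0) -> bool:
    """True if the weights plus a reserve fit in the combined VRAM of all cards."""
    return weights_gb + reserve_gb <= sum(gpu_vram_gb)


ASSUMED_BPW = 4.5  # assumption: effective bits per weight, not a measured value

size_8b = weight_size_gb(8, ASSUMED_BPW)
size_70b = weight_size_gb(70, ASSUMED_BPW)

print(f"8B:  {size_8b:.1f} GB")
print(f"70B: {size_70b:.1f} GB")
print("70B on dual 24 GB cards:", fits_fully(size_70b, [24, 24]))
print("70B on one 24 GB card: ", fits_fully(size_70b, [24]))
```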

Here's where the benchmark story gets interesting, because Ollama actually wins a category.

Cold start (model not in memory): All three take roughly the same time to load weights from disk into VRAM — it's limited by SSD throughput and memory allocation, not wrapper code. Expect 2–5 seconds for an 8B model on a fast NVMe drive.

Warm queries (model already loaded): Ollama's daemon keeps the model resident between requests automatically. Send a second query and there's zero load time. llama.cpp in server mode behaves the same way, but you manage the process yourself. LM Studio keeps models loaded while the app runs.
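One way to see the cold versus warm difference yourself is to read the timing fields Ollama returns with a non-streaming `/api/generate` response. The sketch below assumes a local Ollama daemon on its default port; `build_request`, `summarize_timing`, and `generate` are hypothetical helper names, and the model name is only an example.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default port


def build_request(model: str, prompt: str, keep_alive: str = "10m") -> dict:
    """Request body; keep_alive asks the daemon to keep the model resident."""
    return {"model": model, "prompt": prompt, "stream": False, "keep_alive": keep_alive}


def summarize_timing(response: dict) -> dict:
    """Convert Ollama's nanosecond timing fields to seconds and tok/s."""
    ns = 1e9
    eval_s = response["eval_duration"] / ns
    return {
        "load_s": response.get("load_duration", 0) / ns,
        "gen_tok_s": response["eval_count"] / eval_s if eval_s else 0.0,
    }


def generate(model: str, prompt: str) -> dict:
    """Send one request. Requires a running Ollama daemon with the model pulled."""
    data = json.dumps(build_request(model, prompt)).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


# Usage (not run here): call generate("llama3.1", "Hello") twice and compare
# summarize_timing(...)["load_s"] between the first and second response.
```

On a second call made while the model is still resident, the load time should drop to near zero while the generation rate stays roughly the same.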

For back-and-forth conversations, Ollama feels snappier because it handles the model lifecycle without you thinking about it. That's a real usability win that doesn't show up in tok/s benchmarks.

So why does Ollama trail by 8–15% for generation?

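Two causes are identified above: the Go HTTP layer adds overhead per request, and Ollama's default inference parameters don't always match llama.cpp's most aggressive settings.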

LM Studio's smaller gap (2–5%) comes mostly from its API server overhead when accessed programmatically. The built-in chat UI bypasses the HTTP layer entirely, closing the gap to near-zero.

Want to speed up Ollama? Set num_gpu explicitly, increase num_batch, and set num_ctx to only what you need. Don't leave context at 4096 when your prompts are 200 tokens.
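As a rough sketch, those settings can be passed per request through the `options` field of Ollama's API. The layer count of 32, the batch size, and the power-of-two rounding of the context size are illustrative assumptions, and `tuned_options` is a hypothetical helper.

```python
def tuned_options(
    total_layers: int,
    longest_prompt_tokens: int,
    max_reply_tokens: int,
    num_batch: int = 1024,
) -> dict:
    """Build an Ollama options dict sized to the actual workload."""
    needed = longest_prompt_tokens + max_reply_tokens
    # Round the context up to the next power of two, starting at 512.
    # This rounding is a design choice for the sketch, not an Ollama rule.
    num_ctx = 512
    while num_ctx < needed:
        num_ctx *= 2
    return {
        "num_gpu": total_layers,  # number of layers to offload to the GPU
        "num_batch": num_batch,   # prompt-processing batch size
        "num_ctx": num_ctx,       # context window in tokens
    }


# Assumed values: a 32-layer model, 200-token prompts, replies up to 300 tokens.
payload = {
    "model": "llama3.1",
    "prompt": "Hello",
    "stream": False,
    "options": tuned_options(32, 200, 300),
}
print(payload["options"])
```

The context size has to cover the prompt and the reply together, so size it from the longest exchange you expect rather than the prompt alone.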

Choose llama.cpp if you want maximum throughput, you're building production inference pipelines, or you need granular control over every parameter. It's the right call for batch processing, performance-critical deployments, and anyone comfortable with command-line flags. Curious about new challengers? Krasis claims 10x faster inference than llama.cpp.

Choose Ollama if you want the easiest setup, you're building apps that call a local LLM via API, or you value model management over raw speed. For a full feature-by-feature breakdown, see our Ollama vs LM Studio comparison. One ollama run llama3.1 command and you're generating text. The speed penalty buys serious convenience.

Choose LM Studio if you want a visual interface for experimenting with models, you frequently switch between quantizations, or you need both a chat UI and an OpenAI-compatible API endpoint. It hits the sweet spot between speed and usability.

And honestly? For most people reading this, the performance gap won't matter day-to-day. If you're chatting with a 7B model on a modern GPU, all three runners spit out text faster than you can process it. The gap becomes meaningful only with larger models on limited hardware, batch processing workflows, or high-throughput API serving.

llama.cpp is fastest. Period. It wins every generation speed benchmark by a consistent margin because nothing sits between your hardware and the inference math.

But "fastest" and "best" aren't synonyms. Ollama's ecosystem — its model library, one-line CLI, and daemon architecture — makes it the most practical choice for the vast majority of local LLM users. LM Studio splits the difference with near-llama.cpp speeds and a polished desktop experience.

Pick your priority: raw throughput, developer convenience, or visual exploration. The models and the quantization math are identical no matter which wrapper you choose.

The same model file works in all three runners. They all use the GGUF format, so you only need to download a model once. Ollama stores models in its own directory structure (usually ~/.ollama/models), but you can point LM Studio and llama.cpp at any GGUF file directly. If you download a model through Ollama, you can locate the blob file and use it in the other runners without redownloading.
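Locating those blobs can be sketched by scanning for files that begin with the four-byte GGUF magic. This avoids relying on Ollama's exact directory layout, which is not described here; `find_gguf_files` is a hypothetical helper, and the path assumes the default location mentioned above.

```python
from pathlib import Path

GGUF_MAGIC = b"GGUF"  # GGUF files start with these four bytes


def find_gguf_files(root: Path) -> list[Path]:
    """Return every file under root whose first four bytes are the GGUF magic."""
    found = []
    for path in root.rglob("*"):
        if not path.is_file():
            continue
        try:
            with path.open("rb") as fh:
                if fh.read(4) == GGUF_MAGIC:
                    found.append(path)
        except OSError:
            continue  # unreadable file: skip it
    return sorted(found)


if __name__ == "__main__":
    # Assumed default location; adjust if your models live elsewhere.
    for blob in find_gguf_files(Path.home() / ".ollama" / "models"):
        print(blob, f"{blob.stat().st_size / 1e9:.1f} GB")
```

The file sizes printed alongside each path make it easier to tell which blob belongs to which model.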

Ollama supports AMD GPUs through ROCm on both Linux and Windows, though Linux has broader coverage. On Linux, supported cards span the RX 5000 series through the RX 9000 series, plus Radeon PRO W, Ryzen AI, and Instinct accelerators. Windows ROCm support currently covers the RX 6000 and 7000 series plus select Radeon PRO cards. Performance on AMD GPUs is generally behind equivalent NVIDIA cards due to less mature kernel optimization in llama.cpp's HIP/ROCm backend, though the gap has been narrowing.

At Q4_K_M quantization, a 70B model requires approximately 40 GB of VRAM for full GPU offloading. To fit it entirely on GPU you need dual RTX 3090s (48 GB total) or a professional card like the A6000 (48 GB). A single RTX 4090 (24 GB) works only by splitting layers between GPU and CPU RAM, which trades speed for accessibility: expect roughly 3–5x slower generation on CPU-offloaded layers.
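A back-of-the-envelope way to choose how many layers to offload is to treat every layer as the same size and leave some VRAM in reserve. Both simplifications are assumptions, as is the 2 GB reserve for the KV cache and buffers; the 80-layer count is Llama 3.1 70B's.

```python
def gpu_layers_that_fit(
    model_gb: float, n_layers: int, vram_gb: float, reserve_gb: float = 2.0
) -> int:
    """Estimate how many equally sized layers fit in VRAM after a reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


# ~40 GB model with 80 layers on a single 24 GB card.
layers_on_one_card = gpu_layers_that_fit(40.0, 80, 24.0)
# Same model with 48 GB of combined VRAM.
layers_on_48gb = gpu_layers_that_fit(40.0, 80, 48.0)

print(layers_on_one_card, "of 80 layers on 24 GB")
print(layers_on_48gb, "of 80 layers on 48 GB")
```

Treat the result as a starting point and lower it if the runner reports out-of-memory errors, since longer contexts need a larger reserve.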

Ollama and LM Studio can run at the same time. Their default ports don't clash (Ollama uses 11434 and LM Studio uses 1234 for its API server), so the real risk is VRAM contention. If both try to load models into the same GPU, you'll run out of VRAM fast. Unload models from one runner before loading in the other, or assign them to different GPUs if you have multiple cards.
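A quick way to check which API servers are up is to probe the two default ports. This sketch only checks the ports named above on localhost and says nothing about VRAM usage; `port_is_listening` and `report` are hypothetical helpers.

```python
import socket


def port_is_listening(port: int, host: str = "127.0.0.1", timeout: float = 0.5) -> bool:
    """True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        return sock.connect_ex((host, port)) == 0


DEFAULT_PORTS = {"Ollama": 11434, "LM Studio": 1234}


def report() -> dict[str, bool]:
    """Map each runner name to whether its default port is accepting connections."""
    return {name: port_is_listening(port) for name, port in DEFAULT_PORTS.items()}


if __name__ == "__main__":
    for name, is_up in report().items():
        print(f"{name}: {'listening' if is_up else 'not listening'}")
```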

Three tweaks do the most to speed up Ollama. First, set num_gpu to your total layer count to ensure full GPU offloading. Second, increase num_batch (try 1024 or 2048) if your VRAM allows it, since higher batch sizes improve prompt processing throughput. Third, reduce num_ctx to match your actual prompt sizes instead of leaving it at the default 4096. These changes can recover a meaningful portion of the performance gap, bringing Ollama closer to raw llama.cpp speeds in most scenarios.