Midjourney vs DALL-E 3 vs Stable Diffusion: 7 Tests

Midjourney, DALL-E 3, and Stable Diffusion scored across 7 image quality categories. Midjourney leads on visual output, but the full picture is more interesting than any single score suggests.

Midjourney scores 8.6 out of 10 on overall image quality. DALL-E 3 scores 7.9. That gap is bigger than most people assume — and it still only tells half the story.

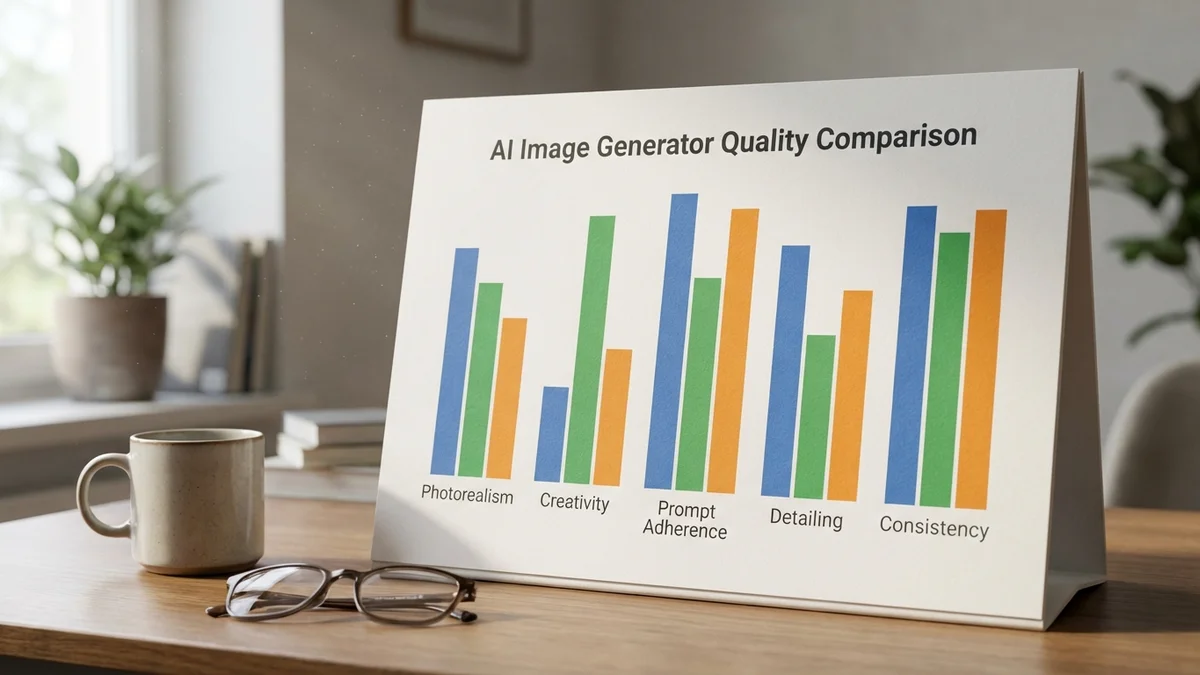

We evaluated the three most popular AI image generators — Midjourney, DALL-E 3, and Stable Diffusion — across seven categories to build a proper image quality benchmark for 2026. Some results were expected. Others genuinely surprised us.

Which AI image generator produces the best quality images? As of April 2026, Midjourney delivers the most photorealistic, aesthetically polished output across virtually every style. It wins on raw visual quality with a score of 8.6/10, compared to DALL-E 3's 7.9 and Stable Diffusion's 7.4.

But "best image quality" isn't always the only question worth asking. Stable Diffusion offers unmatched customization and runs on your own hardware. DALL-E 3 renders text better than anything else available. And newcomers like Flux and Ideogram are closing the gap faster than anyone predicted.

The "best" AI image generator depends on whether you value aesthetics, accuracy, or autonomy. Pick your priority first.

Let's get this out of the way: AI image generation doesn't have standardized benchmarks like MMLU or HumanEval for language models. Metrics like FID (Fréchet Inception Distance) and CLIP scores exist in academic contexts, but they correlate poorly with what real users actually care about — does the image look good, and does it match the prompt?
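To make the limitation concrete, here is a minimal sketch of what a CLIP-style score boils down to: the cosine similarity between a text embedding and an image embedding. The three-dimensional vectors below are made-up stand-ins, not output from a real CLIP model, and the function name is only illustrative.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings (assumed values, for illustration only).
text_embedding = [0.2, 0.9, 0.1]       # the prompt
image_on_prompt = [0.25, 0.85, 0.05]   # an image that matches the prompt
image_off_prompt = [0.9, 0.1, 0.3]     # an image that does not

print("on-prompt: ", round(cosine_similarity(text_embedding, image_on_prompt), 3))
print("off-prompt:", round(cosine_similarity(text_embedding, image_off_prompt), 3))
```

A score like this rewards alignment between prompt and image, but it says nothing about lighting, composition, or whether the result looks good, which is why it is only a partial proxy for quality.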

Our approach: seven evaluation categories, each scored from 1 to 10 based on official documentation, community testing, published comparisons, and publicly available outputs from each platform. These are editorial assessments. We're transparent about that.

| Category | Midjourney | DALL-E 3 | Stable Diffusion |
|---|---|---|---|
| Photorealism | 9.5 | 7.0 | 8.0 |
| Artistic Quality | 9.5 | 7.0 | 8.5 |
| Text Rendering | 6.5 | 9.0 | 5.5 |
| Prompt Adherence | 8.5 | 9.0 | 7.0 |
| Detail & Coherence | 9.0 | 7.5 | 8.0 |
| Generation Speed | 8.0 | 8.5 | 7.0* |
| Customization | 5.0 | 4.0 | 10.0 |

*Stable Diffusion speed depends entirely on your hardware. An RTX 4090 is fast. A laptop GPU is an exercise in patience.

Weighted Quality Score (visual categories only: photorealism, artistic quality, text rendering, prompt adherence, and detail, each weighted equally):

| Generator | Quality Score |
|---|---|
| Midjourney | 8.6 / 10 |
| DALL-E 3 | 7.9 / 10 |
| Stable Diffusion | 7.4 / 10 |
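The quality scores can be reproduced from the category table. The sketch below assumes a plain equal-weight average of the five visual categories; that weighting is inferred from the published numbers rather than stated in the methodology, and the names `scores` and `quality_score` are only illustrative.

```python
from statistics import mean

# Visual-category scores copied from the comparison table.
scores = {
    "Midjourney": {
        "Photorealism": 9.5, "Artistic Quality": 9.5, "Text Rendering": 6.5,
        "Prompt Adherence": 8.5, "Detail & Coherence": 9.0,
    },
    "DALL-E 3": {
        "Photorealism": 7.0, "Artistic Quality": 7.0, "Text Rendering": 9.0,
        "Prompt Adherence": 9.0, "Detail & Coherence": 7.5,
    },
    "Stable Diffusion": {
        "Photorealism": 8.0, "Artistic Quality": 8.5, "Text Rendering": 5.5,
        "Prompt Adherence": 7.0, "Detail & Coherence": 8.0,
    },
}

def quality_score(category_scores):
    """Equal-weight average of the category scores, rounded to one decimal."""
    return round(mean(category_scores.values()), 1)

quality = {name: quality_score(cats) for name, cats in scores.items()}
for name, value in quality.items():
    print(f"{name}: {value} / 10")
```

Swapping `mean` for a weighted sum is a quick way to see how the ranking shifts if, say, text rendering matters more to you than photorealism.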

Midjourney's lead is decisive on pure visual quality. But read on — the full picture is more interesting than a single number.

On photorealism, this isn't close. Midjourney produces images that genuinely fool people into thinking they're photographs. Skin textures, lighting, depth of field, environmental details: it handles all of this with an almost eerie consistency. Think of it as the difference between a professional photographer and someone who just got a decent camera. Both take pictures, but one has an instinct for composition.

DALL-E 3 creates good images, but they carry a telltale "AI smoothness" that's hard to unsee. You'll notice it in skin, fabric textures, and backgrounds. It's not bad — it's just obviously generated.

Stable Diffusion with the right model and careful settings can approach Midjourney's realism. The key phrase is "with the right model and careful settings." Out of the box, it doesn't match Midjourney. With hours of tweaking, specific checkpoints, and the right LoRAs? It gets pretty close.

Midjourney's aesthetic sensibility is where it really pulls ahead. Give all three generators the same artistic prompt — say, "oil painting of a lighthouse in a storm, Turner style" — and Midjourney consistently produces something you'd actually want to hang on a wall.

Stable Diffusion earns a strong 8.5 here because the community has created thousands of fine-tuned models optimized for specific art styles. Want anime? There's a model for that. Want 1970s sci-fi book covers? Someone has trained a LoRA for exactly that. The ecosystem is enormous.

DALL-E 3? Competent but rarely inspiring. It follows instructions well but doesn't inject the kind of artistic interpretation that makes Midjourney's outputs feel curated rather than generated.

Here's where the ranking flips completely. DALL-E 3 crushes the competition at rendering readable text in images. Signs, labels, book covers, T-shirt slogans — it gets these right far more often than not.

Midjourney has improved (older versions were terrible at text), but it still garbles words regularly. And Stable Diffusion? Text rendering remains its weakest area, consistently producing gibberish unless you use specialized ControlNet workflows.

If your workflow involves text in images — product mockups, social media graphics, signage — DALL-E 3 is the only reliable option right now.

So if you're a brand designer who needs readable text on generated visuals, the choice is obvious. No amount of Midjourney's aesthetic polish matters if the words on your poster are nonsense.

DALL-E 3 edges out Midjourney on prompt adherence too. When you ask for "a red bicycle leaning against a blue fence with three sunflowers in the background," DALL-E 3 gives you exactly that. Three sunflowers. Not two, not four.

Midjourney has a habit of interpreting prompts creatively (which is sometimes what you want, and sometimes maddening). It might give you four sunflowers because it looked better compositionally. That artistic liberty is a feature or a bug depending on your use case.

Stable Diffusion's prompt adherence varies heavily depending on which model, sampler, and CFG scale you're using. It's capable of excellent prompt following — but requires more technical knowledge to get there.
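For a feel of what the CFG scale actually does, here is a minimal sketch of the classifier-free guidance step: the final prediction starts at the unconditional estimate and is pushed toward the prompt-conditioned one by a factor of the scale. The two-element lists are toy stand-ins for the model's noise predictions (real pipelines work on large tensors), and `apply_cfg` is a hypothetical helper name.

```python
def apply_cfg(uncond, cond, cfg_scale):
    """Classifier-free guidance: uncond + scale * (cond - uncond), element-wise."""
    return [u + cfg_scale * (c - u) for u, c in zip(uncond, cond)]

# Toy predictions (assumed values, for illustration only).
uncond_pred = [0.0, 1.0]   # model output when the prompt is ignored
cond_pred = [1.0, 1.5]     # model output when the prompt is used

for scale in (1.0, 4.0, 7.0):
    print(f"CFG {scale}: {apply_cfg(uncond_pred, cond_pred, scale)}")
```

A scale of 1.0 simply returns the conditioned prediction. Higher values exaggerate the difference the prompt makes, which tends to improve adherence up to a point and then starts to cost image quality.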

OpenAI's image generation is the most accessible — it's built into ChatGPT (now powered by GPT Image rather than DALL-E 3 since December 2025), and you can generate images through natural conversation. No prompt engineering vocabulary required. Free-tier ChatGPT users can generate a limited number of images per day.

Midjourney requires a subscription ($10–$120/month depending on the tier) and has historically operated through Discord, though the web interface has improved significantly over the past year.

Stable Diffusion's speed is entirely hardware-dependent. Cloud solutions like RunPod make it fast and affordable per-image, but local generation requires a decent GPU with at least 8GB VRAM for SDXL. Budget accordingly.

Customization is Stable Diffusion's trump card. It's not even a contest.

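With Stable Diffusion, you get control that the hosted tools don't expose: community fine-tuned checkpoints and LoRAs, ControlNet workflows, your choice of sampler and CFG scale, and fully local execution.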

Midjourney gives you style parameters and remix tools. DALL-E 3 gives you natural language instructions and... that's about it. For anyone building image generation into a product or workflow, Stable Diffusion's flexibility is impossible to match.

| Generator | Cost | What You Get |
|---|---|---|
| Midjourney | $10–$120/month | 200 to unlimited generations |
| DALL-E 3 | Included with ChatGPT Plus ($20/mo) or API pricing (DALL-E 3 API sunset: May 2026) | Limited free tier available |
| Stable Diffusion | Free (open source) | Unlimited — you supply the hardware |

Midjourney is the most expensive option for casual users. But for the quality it delivers, most creative professionals consider it a bargain compared to stock photography or hiring an illustrator.

Stable Diffusion is "free" in the way Linux is free — it costs nothing if your time is worth nothing. (That sounds harsh, but getting a good local setup running smoothly takes real effort.) Cloud deployment through services like RunPod keeps per-image costs extremely low for high-volume production use.
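To put rough numbers on "extremely low," here is a back-of-the-envelope sketch. It uses the approximate $0.20/hr cloud GPU rate quoted elsewhere in this article; the seconds-per-image values are assumptions that vary with model, resolution, and GPU. The subscription comparison assumes the $10 plan corresponds to the 200-generation end of the range in the pricing table.

```python
def cost_per_image(hourly_rate_usd: float, seconds_per_image: float) -> float:
    """Rental cost of one image on a GPU billed by the hour."""
    return hourly_rate_usd * seconds_per_image / 3600

HOURLY_RATE_USD = 0.20  # rough entry-level cloud GPU rate cited in this article

# Assumed generation times, for illustration only.
for seconds in (5, 15, 60):
    cost = cost_per_image(HOURLY_RATE_USD, seconds)
    print(f"{seconds:>2}s per image -> ${cost:.5f}")

# Assumption: a $10/month plan covering 200 generations.
subscription_cost_per_image = 10 / 200
print(f"subscription -> ${subscription_cost_per_image:.2f} per image")
```

The estimate ignores idle GPU time, storage, and the hours spent on setup, so treat it as a lower bound on what self-hosting really costs.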

The big three aren't the only options anymore. As of April 2026, several alternatives are pushing hard, including Flux, Ideogram, and Recraft.

Don't sleep on Flux. It's doing to Stable Diffusion what Stable Diffusion originally did to proprietary models — making high quality accessible and open.

If you're a creative professional or designer: Midjourney. The quality-to-effort ratio is unmatched. You'll spend less time engineering prompts and more time actually creating.

If you're a developer building products: Stable Diffusion or Flux. API costs scale linearly, you control the entire pipeline, and self-hosting means no dependency on another company's pricing changes or content policies.

If you need text in images: DALL-E 3. Full stop. Nothing else comes close enough to be production-reliable.

If you're exploring AI art casually: Start with DALL-E 3 through ChatGPT (lowest barrier to entry), then try Midjourney if you want better aesthetics.

And if privacy matters: Stable Diffusion locally. Your prompts and images never leave your machine.

Midjourney wins on image quality. That's clear from the scores and from broad community consensus. But the AI image generation space isn't a single-axis competition.

DALL-E 3 wins at following instructions and rendering text. Stable Diffusion wins at everything related to control, customization, and long-term value. And tools like Flux, Ideogram, and Recraft are making the "which is best" question increasingly difficult to answer with just one name.

Pick the tool that matches your actual workflow — not the one with the highest score in a category you don't care about.

For SDXL models, you'll need a GPU with at least 8GB VRAM — an NVIDIA RTX 3060 (which has 12GB) or higher works well. For SD3 and newer architectures, 12GB VRAM (RTX 4070 or above) is recommended for comfortable generation speeds. AMD GPUs work but have less community support and slower inference. If you don't have a suitable GPU, cloud services like RunPod offer per-hour GPU rental starting around $0.20/hr.

You can use Midjourney images commercially, but it depends on your subscription tier. Midjourney's paid plans (starting at $10/month) grant commercial usage rights for the images you generate. Note that Midjourney no longer offers a free trial as of 2026; all access requires a paid subscription. Companies with annual revenue above $1 million must subscribe to Pro or Mega tiers for commercial use. DALL-E 3 grants commercial rights through ChatGPT Plus, and Stable Diffusion outputs are generally usable commercially, though the model licenses (particularly SD3's Stability AI Community License) include conditions such as revenue thresholds for enterprise use. Always check each platform's current terms of service before publishing.

Flux and Stable Diffusion serve slightly different niches. Flux generates high-quality images faster out of the box with less configuration needed, while Stable Diffusion has a far larger ecosystem of fine-tuned models, LoRAs, and tools like ControlNet. If you want simplicity and speed with open weights, Flux is excellent. If you need deep customization and community resources, Stable Diffusion's ecosystem is still unmatched.

DALL-E 3 is still available through the API, but that API is deprecated and scheduled for shutdown on May 12, 2026. OpenAI's replacement is the GPT Image API (gpt-image-1). The DALL-E 3 API still functions as of April 2026 with per-image pricing based on resolution, but new projects should use OpenAI's current image generation models instead. Check OpenAI's pricing page for current rates.
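For a new project, a call against the replacement model might look like the sketch below. It assumes the official `openai` Python SDK and its `images.generate` method, with the image returned as base64 in `b64_json`; the helper name `generate_png` and the default size are illustrative choices, so check OpenAI's current documentation for supported models and parameters.

```python
import base64

def generate_png(client, prompt, model="gpt-image-1", size="1024x1024") -> bytes:
    """Request one image and return its decoded bytes.

    `client` is expected to behave like `openai.OpenAI()`.
    """
    result = client.images.generate(model=model, prompt=prompt, size=size)
    return base64.b64decode(result.data[0].b64_json)

# Example usage (requires `pip install openai` and OPENAI_API_KEY to be set):
#
#   from openai import OpenAI
#   image_bytes = generate_png(OpenAI(), "a red bicycle leaning against a blue fence")
#   with open("bicycle.png", "wb") as f:
#       f.write(image_bytes)
```

Passing the client in, rather than creating it inside the function, keeps the model name in one place and makes it easy to swap models again when the next deprecation arrives.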

Midjourney offers character reference features that help maintain consistency across multiple generations. However, Stable Diffusion with custom-trained LoRAs gives you the most reliable character consistency — you can train on specific faces or character designs and reuse them indefinitely. DALL-E 3 currently has the weakest character consistency, as there's no built-in way to reference previous generations. For production work requiring the same character across dozens of images, Stable Diffusion with a trained model is the most dependable approach.