A $500 GPU Just Beat Claude Sonnet at Coding Tasks

ATLAS, a source-available AI system built by a Virginia Tech student, scores 74.6% on LiveCodeBench using a single $500 consumer GPU — outperforming Claude Sonnet's 71.4% at roughly $0.004 per task.

ATLAS, a source-available AI system built by a Virginia Tech student, scores 74.6% on LiveCodeBench using a single $500 consumer GPU — outperforming Claude Sonnet's 71.4% at roughly $0.004 per task.

A computer science student just built an AI coding system (released under a source-available license) that scores 74.6% on LiveCodeBench — beating Claude Sonnet's 71.4% — using nothing but a single $500 consumer GPU. No cloud infrastructure. No API costs. About $0.004 per task in electricity.

The system is called ATLAS (Adaptive Test-time Learning and Autonomous Specialization), and it represents a growing challenge to the assumption that you need billion-dollar data centers to compete on AI coding benchmarks. Built by the ATLAS creator, the system wraps a frozen 14-billion parameter model in a smart three-phase pipeline that nearly doubles the base model's performance.

And it runs on hardware you could buy at Best Buy.

ATLAS is a source-available AI coding system that achieves 74.6% pass@1 on LiveCodeBench v5 (599 problems) while running entirely on consumer hardware. It outperforms Claude Sonnet's reported 71.4% on LiveCodeBench by wrapping a small Qwen3-14B model in a multi-phase pipeline — generation, scoring, and repair — rather than relying on raw model size or expensive cloud compute.

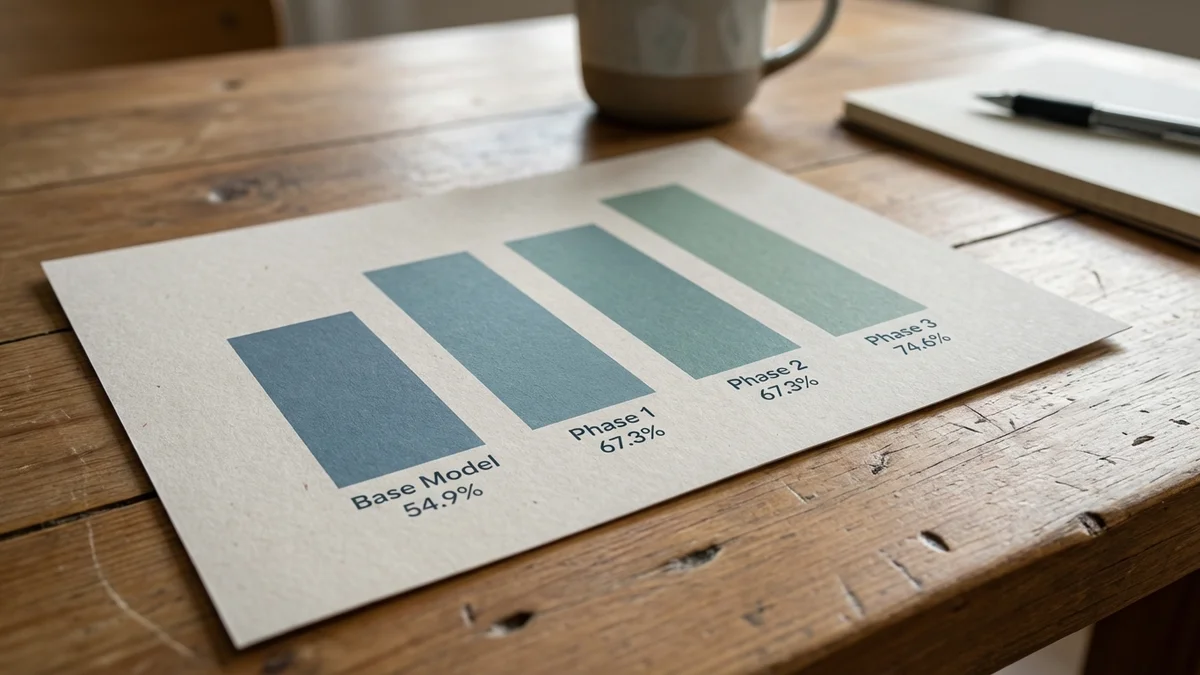

The important distinction: ATLAS isn't a new foundation model. It's a system. The base model it uses (Qwen3-14B-Q4_K_M, frozen and quantized) scores only about 54.9% on its own. The pipeline adds nearly 20 percentage points through smarter inference — not through training a bigger model on more data.

That's like taking a Honda Civic engine and building a race car around it that beats a Porsche on a specific track.

Let's get into the data. Here's what ATLAS achieves across benchmarks, as of March 2026:

| Benchmark | ATLAS (V3 Pipeline) | Base Model Alone | Improvement |

|---|---|---|---|

| LiveCodeBench v5 (599 tasks) | 74.6% pass@1 | 54.9% | +19.7pp |

| GPQA Diamond (198 tasks) | 47.0% | N/A | N/A |

| SciCode sub-problems (341 tasks) | 14.7% | N/A | N/A |

And here's the LiveCodeBench comparison that lit up r/artificial:

| System | LiveCodeBench v5 | Hardware | Approx. Cost/Task |

|---|---|---|---|

| ATLAS (Qwen3-14B) | 74.6% | RTX 5060 Ti 16GB | ~$0.004 |

| Claude Sonnet 4.5 | 71.4% | Cloud API | Variable |

A couple of important caveats here. The Claude Sonnet comparison is against version 4.5, not the current Sonnet 4.6 available as of March 2026. And ATLAS uses a best-of-3 generation approach with iterative repair — a fundamentally different methodology than single-shot inference. Additionally, these scores come from different evaluation sets — ATLAS was tested on 599 LiveCodeBench problems, while the Claude Sonnet 4.5 score (71.4%) comes from Artificial Analysis’s evaluation on 315 LiveCodeBench problems, making this a directional comparison rather than a controlled head-to-head. All three caveats matter when you're interpreting the headline number.

The base model — Qwen3-14B-Q4_K_M — is frozen and quantized. No fine-tuning whatsoever. On its own, it's a perfectly ordinary small model. So how does the pipeline nearly double its coding performance?

ATLAS uses Plan Search, a system that extracts constraints from each problem and generates diverse solution strategies. For every coding task, it creates k=3 candidate solutions using different approaches. Budget Forcing controls how many thinking tokens the model allocates — preventing wasted compute on easy problems while giving hard ones more room to breathe.

This phase alone bumps performance from 54.9% to 67.3%. That's a massive jump just from asking the model to think more carefully and try multiple angles.

Now it gets weird. ATLAS uses an energy-based scoring system called Geometric Lens that evaluates candidates using 5120-dimensional self-embeddings. Think of it as the system developing a mathematical intuition for which solutions "feel" right based on their internal geometry. The Lens reports 87.8% accuracy on mixed-result tasks.

But the ablation data tells a surprising story: this phase adds exactly 0.0 percentage points to the aggregate score. The author's own numbers show it doesn't move the needle overall (though it likely helps in specific edge cases where routing matters). Credit to Tigges for publishing the ablation honestly — a lot of researchers would quietly omit that.

This is where ATLAS earns its final score. When candidates fail, the system generates its own test cases, then uses PR-CoT (multi-perspective chain-of-thought) repair to fix broken solutions.

The repair phase rescues 85.7% of failed tasks — 36 out of 42 in testing. That adds another 7.3 percentage points, pushing the final score to 74.6%.

The base model scores 55%. The pipeline adds nearly 20 points. That's not a better model — it's a better system.

One of the most striking aspects of ATLAS is what you actually need to run it:

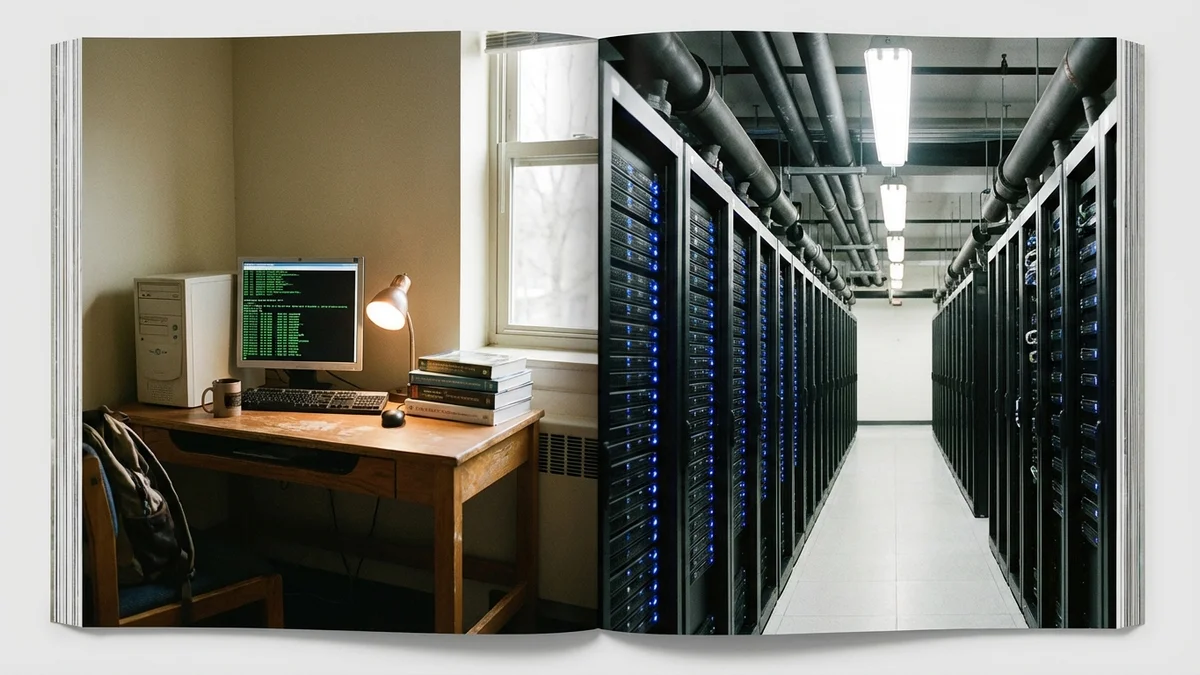

The tested configuration runs on a Proxmox VM with VFIO GPU passthrough. Speculative decoding pushes throughput to about 100 tokens per second. That's a setup you could build in a dorm room. (Which is quite literally what happened.)

Total hardware cost: roughly $500 for the GPU, plus whatever PC you already have sitting around.

Worth flagging: let's talk economics, because this is where the story gets genuinely interesting.

| Factor | ATLAS (Local) | Cloud API (Sonnet 4.6) |

|---|---|---|

| Per-task cost | ~$0.004 (electricity) | $3/$15 per MTok (input/output) |

| Hardware investment | ~$500 one-time | $0 |

| Ongoing costs | Electricity only | Pay-per-token |

| Data privacy | Fully local | Sent to cloud |

As of March 2026, Anthropic prices Claude Sonnet 4.6 at $3 per million input tokens and $15 per million output tokens. For a developer running hundreds of coding tasks daily, ATLAS's $0.004 per task starts looking very attractive — you'd recoup the $500 GPU investment quickly.

But there's a catch (there's always a catch). ATLAS takes considerably longer per task than a single API call. You're running a three-phase pipeline with three candidate generations plus repair loops on consumer hardware at 100 tok/s. A cloud API call to Sonnet returns in seconds. Speed matters when you're in the middle of a coding session.

Let's be clear about what ATLAS proves — and what it doesn't.

What it proves: Smart infrastructure around small models can compete with larger commercial systems on specific benchmarks. The gap between local AI and cloud AI is closing from the bottom up. A frozen 14B quantized model with the right pipeline can outperform a frontier model on competitive coding tasks.

What it doesn't prove: That you should cancel your AI subscriptions. LiveCodeBench is one benchmark measuring one type of capability. ATLAS scored 47% on GPQA Diamond — as of March 2026, Claude Opus 4 scored 79.6% on that same test. On SciCode, ATLAS manages just 14.7%. The system is specifically optimized for competitive coding problems with clear pass/fail criteria, not general-purpose reasoning or open-ended tasks. (For more on how benchmarks can be misleading, see our CRYSTAL benchmark analysis.)

ATLAS is a specialist that beats a generalist on the specialist's home turf. That's impressive — but it's not a replacement for a full-stack AI assistant.

And the comparison against Claude Sonnet 4.5 (not the current 4.6) is worth keeping in mind. The benchmark gap may have narrowed or even reversed with the newer model.

Here's what makes ATLAS genuinely significant, beyond the headline benchmark score.

The AI industry has been on a "bigger is better" trajectory for years. More parameters. More GPUs. More data centers. OpenAI, Google, and Anthropic are collectively spending tens of billions on compute infrastructure. And ATLAS — built by a single college student on a consumer GPU — suggests that brute-force scaling isn't the only viable path.

This fits a broader pattern in open-source AI. DeepSeek demonstrated that clever architecture can compete with massive compute budgets. Now ATLAS shows that smart systems design can achieve the same thing at the inference layer. The open-source community is finding ways to squeeze performance — as seen in Nous Coder-14B's recent benchmark results — out of models that the big labs have already moved past.

The most interesting competition in AI right now isn't between companies with the biggest GPU clusters. It's between raw scale and smart engineering.

But let's keep perspective. ATLAS works well because LiveCodeBench problems have clear pass/fail criteria — you can generate multiple solutions, test them, and pick the winner. That generate-test-select approach doesn't transfer cleanly to open-ended tasks like writing analysis, holding conversations, or reasoning about ambiguous problems. Code is uniquely testable, which makes it uniquely suited to this kind of pipeline optimization.

The V3.1 roadmap includes some ambitious targets:

Whether you'd use ATLAS over tools like Cursor, Claude Code, or Aider for daily development work is a different question. Right now, those tools offer a much smoother developer experience with broader capabilities. But as a proof of concept for where local AI coding is headed, ATLAS is the most impressive thing to come out of a dorm room in a while.

ATLAS scored 74.6% on LiveCodeBench v5 using a $500 consumer GPU and a frozen 14B model, beating Claude Sonnet 4.5's reported 71.4% on LiveCodeBench. The cost per task: about four-tenths of a penny.

It won't replace your AI coding tools tomorrow. But it proves that smart systems design can close the gap between a $500 GPU and a billion-dollar infrastructure — at least on the benchmarks where it matters most.

Sources

As of March 2026, ATLAS has only been tested on NVIDIA hardware (specifically the RTX 5060 Ti 16GB). The system relies on llama.cpp via llama-server, which does support AMD ROCm GPUs in theory, but the ATLAS documentation only lists NVIDIA as tested. If you have an AMD card with 16GB+ VRAM (like the RX 7800 XT), it may work but expect to troubleshoot compatibility issues.

ATLAS runs at about 100 tokens per second with speculative decoding on the RTX 5060 Ti. Since it generates 3 candidate solutions plus runs repair loops on failures, a single task can take several minutes — significantly slower than a cloud API call that returns in seconds. The trade-off is cost ($0.004/task vs. pay-per-token API pricing) and full data privacy.

ATLAS is currently benchmarked and optimized for competitive coding problems (LiveCodeBench) with clear pass/fail test cases. Its generate-test-repair pipeline relies on being able to verify solutions programmatically. For real-world software engineering tasks like debugging, refactoring, or building features — where success criteria are ambiguous — tools like Cursor, Claude Code, or Aider are still better options.

ATLAS is released under the 'A.T.L.A.S Source Available License v1.0,' which is not a standard open-source license (like MIT or Apache 2.0). Source-available means you can view and modify the code, but there may be restrictions on commercial use or redistribution. Check the license file in the GitHub repository carefully before building anything commercial on top of it.

The V3.1 roadmap plans to swap the current Qwen3-14B base model for Qwen3.5-9B with DeltaNet architecture. A smaller model means faster inference and lower VRAM requirements — potentially running on 8GB GPUs. The target is 80-90% Geometric Lens routing accuracy and expanded benchmark coverage beyond LiveCodeBench to test whether the pipeline generalizes to other coding tasks.