8 Best Vector Databases for AI in 2026, Ranked

We tested and ranked the top 8 vector databases for AI applications in 2026 — from managed solutions like Pinecone to open-source options like Qdrant and pgvector.


How many vector databases does a developer need to evaluate before picking one? If you've spent any time building RAG pipelines or semantic search features, you already know the answer: too many.

The vector database market has exploded. What started as a niche tool for ML engineers has become essential infrastructure for anyone building AI applications — from chatbots to recommendation engines to document search. And with that growth came a flood of options, some excellent, some overhyped, and a few that are genuinely category-defining.

The best vector databases for AI applications in 2026 are Pinecone for managed deployments, Qdrant for open-source performance, and pgvector for teams already running PostgreSQL. But the right pick depends entirely on your scale, budget, and existing stack.

We spent weeks testing and comparing the most popular vector databases for real AI workloads. Here are the eight that actually deserve your attention.

| Database | Best For | Open Source | Standout Feature |
|---|---|---|---|
| Pinecone | Managed production deployments | No | Zero-ops serverless |
| Qdrant | Performance-critical open-source apps | Yes | Rust-powered speed |
| pgvector | Teams already on PostgreSQL | Yes | No new infrastructure |

Pinecone remains the most polished managed vector database you can buy. It's the one you pick when you don't want to think about infrastructure and just want vector search to work.

The developer experience is simple: you create an index, push vectors, and query. That's it. No Kubernetes manifests, no capacity planning, no 3 AM pages about disk space.
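To show what that create, push, query loop looks like in practice, here is a toy in-memory stand-in written in plain Python. It is not Pinecone's SDK: the `ToyIndex` class and its method names are hypothetical, and it scans every vector by brute force where a real service would use an approximate index.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyIndex:
    """Hypothetical in-memory index; illustrates the workflow only."""

    def __init__(self, dimension):
        self.dimension = dimension
        self.vectors = {}  # id -> list of floats

    def upsert(self, items):
        # items: iterable of (id, vector) pairs; existing ids are overwritten.
        for item_id, vector in items:
            if len(vector) != self.dimension:
                raise ValueError(
                    f"expected {self.dimension} dimensions, got {len(vector)}"
                )
            self.vectors[item_id] = list(vector)

    def query(self, vector, top_k=3):
        # Brute-force scan: score every stored vector, return the best top_k.
        scored = [
            (item_id, cosine(vector, stored))
            for item_id, stored in self.vectors.items()
        ]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:top_k]


# Step 1: create an index. Step 2: push vectors. Step 3: query.
index = ToyIndex(dimension=3)
index.upsert([
    ("doc-a", [1.0, 0.0, 0.0]),
    ("doc-b", [0.0, 1.0, 0.0]),
    ("doc-c", [0.9, 0.1, 0.0]),
])
results = index.query([1.0, 0.0, 0.0], top_k=2)
```

A managed service wraps the same three steps behind an API and takes care of indexing, persistence, and scaling.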

Key features:

Pricing: Free tier available with limited usage. Serverless pricing is consumption-based — check Pinecone's pricing page for current rates.

Best for: Teams that want zero-ops vector search in production. If your priority is shipping fast rather than managing infrastructure, Pinecone is the obvious pick.

Pinecone trades flexibility for simplicity — and for most production teams, that's exactly the right trade.

The biggest knock on Pinecone is vendor lock-in. While Pinecone now offers BYOC (Bring Your Own Cloud) on AWS, GCP, and Azure — letting you run the data plane in your own cloud account — the control plane remains managed by Pinecone, and you can't fully self-host it. For startups moving fast, that's fine. For enterprises with strict data residency requirements, BYOC helps, but full self-hosting isn't an option.

Written in Rust, Qdrant is fast. Really fast. It consistently outperforms other open-source vector databases in benchmark tests, and its memory efficiency is genuinely impressive at scale.

As of April 3, 2026, Qdrant has become one of the fastest-growing vector databases in the open-source ecosystem, with strong community adoption and a rapid release cadence.

Key features:

Pricing: Free and open source for self-hosting. Qdrant Cloud offers managed clusters — check their site for current tiers.

Best for: Engineers who want open-source with production-grade performance. Qdrant hits the sweet spot between Pinecone's polish and Milvus's raw scalability.

The Rust foundation isn't just marketing — it shows up in real workloads. Memory consumption stays predictable even under heavy concurrent query loads, which matters a lot when you're running on cloud VMs and every GB of RAM costs money.

Look, about pgvector: it's not the fastest vector database. It's not the most feature-rich. But it runs inside PostgreSQL, and that single fact makes it the right choice for a huge number of teams.

Key features:

Enable it with `CREATE EXTENSION vector`.

Pricing: Completely free. It's an open-source PostgreSQL extension. Your only cost is whatever you're already paying to run PostgreSQL.

Best for: Any team already running PostgreSQL that needs vector search without adding another database to their stack. And honestly, that describes most teams.

The best database is the one you're already running. For millions of developers, that's PostgreSQL.

pgvector won't win speed benchmarks against purpose-built vector databases. But for applications with fewer than 10 million vectors, the performance is perfectly adequate — and you get ACID transactions, joins with your relational data, and zero additional operational burden. That's a trade most teams should happily make.
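To make the "joins with your relational data" point concrete, here is a minimal sketch that builds a pgvector similarity query in Python. The `documents` and `authors` tables, their columns, and the helper names are hypothetical. `<=>` is pgvector's cosine-distance operator, and the `%s` placeholders assume a psycopg-style driver. The snippet only builds the SQL and its parameters; it does not connect to a database.

```python
def to_vector_literal(embedding):
    # pgvector accepts text input of the form '[0.1,0.2,0.3]'.
    return "[" + ",".join(repr(float(x)) for x in embedding) + "]"


# Hypothetical schema: documents(id, title, author_id, embedding vector(3))
# and authors(id, name). The join is ordinary SQL; only the ORDER BY is
# vector-specific.
NEAREST_DOCS_SQL = """
SELECT d.id, d.title, a.name,
       d.embedding <=> %s::vector AS cosine_distance
FROM documents AS d
JOIN authors AS a ON a.id = d.author_id
ORDER BY d.embedding <=> %s::vector
LIMIT %s
"""


def build_query(embedding, limit=5):
    """Return (sql, params) ready to pass to a driver's execute() call."""
    literal = to_vector_literal(embedding)
    return NEAREST_DOCS_SQL, (literal, literal, limit)


sql, params = build_query([0.1, 0.2, 0.3], limit=5)
```

With a driver such as psycopg, the pair would go to `cursor.execute(sql, params)`. The similarity search and the relational join run in one statement, inside the same transaction as the rest of your data.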

Weaviate takes a different approach. Instead of being purely a vector store, it's designed as a full-featured search engine that happens to be excellent at vectors.

Key features:

Pricing: Open source for self-hosting. Weaviate Cloud Services offers managed deployments — check weaviate.io for pricing details.

Best for: Applications that need both keyword and semantic search. If you're building a search experience where users sometimes type exact product SKUs and sometimes ask natural language questions, Weaviate handles both gracefully.

The built-in vectorization is particularly nice. Instead of managing a separate embedding pipeline, you can let Weaviate handle vectorization on ingest. It adds a dependency, but it dramatically simplifies your architecture — one less microservice to babysit.
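The keyword-plus-semantic search mentioned above comes down to merging two ranked result lists. Here is a minimal sketch of one common way to do that, reciprocal rank fusion. It is a generic illustration, not Weaviate's implementation; the function name, the sample ids, and the constant `k=60` are assumptions chosen for the example.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of ids into one list, best first.

    Each id earns 1 / (k + rank) from every list it appears in, so items
    ranked well by both keyword and vector search rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical results for the same user query from two retrievers.
keyword_hits = ["sku-123", "sku-456", "sku-789"]   # exact-match search
semantic_hits = ["sku-456", "sku-999", "sku-123"]  # vector search

fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

Here `sku-456` comes first because both retrievers rank it highly, while items found by only one retriever fall to the bottom.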

If you're working with billions of vectors, Milvus is built for you. Originally created by Zilliz, Milvus is the most battle-tested option for extreme-scale workloads.

Key features:

Pricing: Open source and free for self-hosting. Zilliz Cloud provides a fully managed Milvus service with tiered pricing.

Best for: Large enterprises and applications dealing with hundreds of millions to billions of vectors. Milvus is overkill for a side project, but it's exactly right for serious production scale.

The downside? Milvus has a steep learning curve. The architecture involves multiple components (proxy, query nodes, data nodes, index nodes), and getting a production deployment right takes genuine operational expertise. Zilliz Cloud removes that complexity, but then you're paying for managed infrastructure. So the question becomes: do you have a platform team, or do you want someone else to handle it?

Chroma is the SQLite of vector databases. It's lightweight, embeddable, and gets out of your way completely. You can install it with `pip install chromadb` and have vector search running in under five minutes.

Key features:

Pricing: Free and open source. Chroma also offers a hosted service — check trychroma.com for details.

Best for: Rapid prototyping, hackathons, and small-scale applications. Chroma is where you start when you're exploring an idea and don't want infrastructure decisions slowing you down.

Chroma is the "just get it working" vector database. And sometimes that's exactly what you need.

But be honest about its limitations. Chroma isn't designed for production workloads with millions of vectors or strict latency SLAs. It's a fantastic starting point, not a finishing line. Many teams prototype with Chroma, then migrate to Qdrant or Pinecone when they're ready to go live. For a detailed comparison, see our Pinecone vs Weaviate vs Chroma breakdown.

LanceDB is the newer player that's earned its spot through a genuinely different architecture. Built on the Lance columnar data format, it's an embedded vector database that works directly with your object storage.

Key features:

Pricing: Open source and free for self-hosting. LanceDB Cloud is available for managed deployments.

Best for: Data teams and ML engineers who want vector search tightly integrated with their data pipelines. The Lance format makes it particularly strong for workloads that mix analytics with similarity search.

As of April 3, 2026, LanceDB has been gaining traction especially among teams building AI applications on top of data lakes and object storage like S3. The serverless model means you're not paying for idle compute, which keeps costs predictable.

Redis includes vector search capabilities as of Redis 8 (previously offered through Redis Stack), and it's a compelling option if you're already in the Redis ecosystem for caching or session management.

Key features:

Pricing: Redis is source-available under a dual RSALv2/SSPLv1 license (not OSI-approved open source). Redis Cloud offers managed deployments with vector search included — check redis.io for current pricing.

Best for: Applications where latency is everything. If you need vector search results in single-digit milliseconds and you're already running Redis, this is a natural fit.

The trade-off is memory cost. Redis stores everything in RAM by default, so large vector collections get expensive quickly. For datasets under a few million vectors where speed is the top priority, though, it's hard to beat. Think real-time recommendations, autocomplete, or fraud detection — places where every millisecond counts.

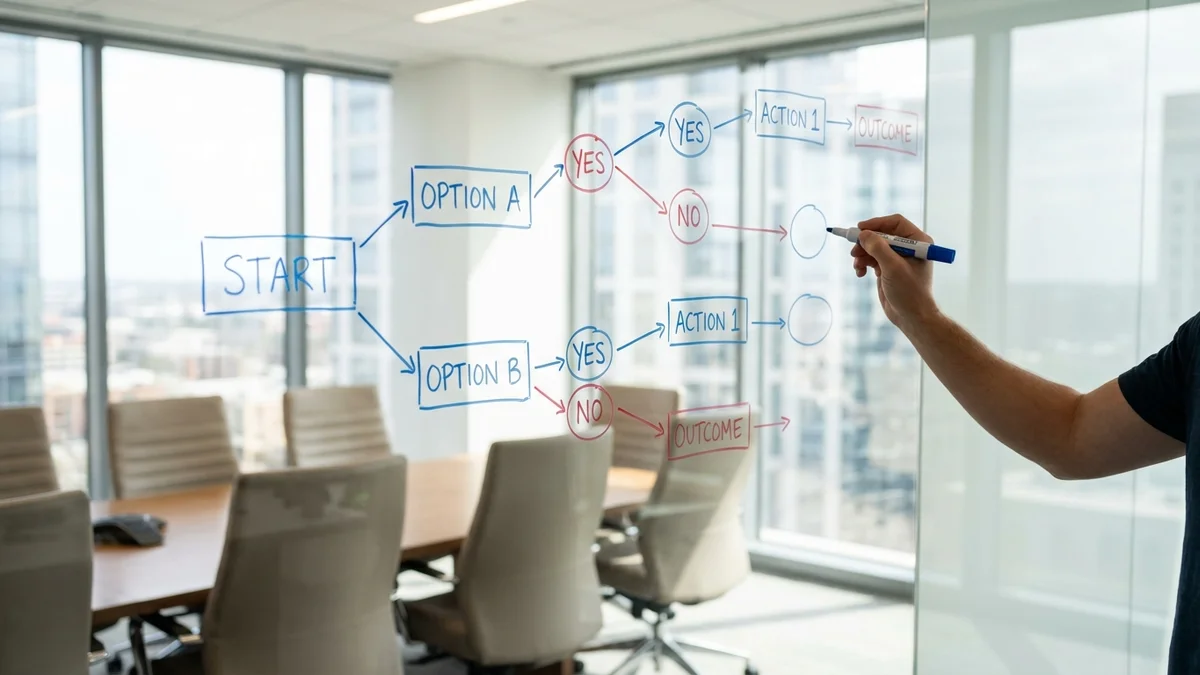

We looked at six factors when building these rankings:

As of April 3, 2026, the gaps between top-tier vector databases are narrowing. Your choice increasingly depends on your specific constraints — existing infrastructure, team expertise, scale requirements — rather than raw technical capability. That's a sign the market is maturing, and it's good news for developers.

Here's the blunt version:

Stop evaluating and start building. The best vector database is the one that gets your AI application into production — not the one with the prettiest benchmark chart.


Migrating between vector databases is possible, but it's not always straightforward. Most vector databases support exporting vectors as arrays, which you can re-import elsewhere. The catch is that metadata schemas, index configurations, and filtering syntax differ between platforms. Tools like LangChain and LlamaIndex abstract the vector store layer, which makes switching easier if you use them from the start. Budget 1-2 days for a migration under 10 million vectors.
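One way to keep a migration manageable is to dump records into a neutral format before touching the target database. The sketch below uses JSON Lines with a hypothetical record shape (`id`, `vector`, `metadata`). Reading from the source database and writing to the target are left out because they are specific to each product.

```python
import io
import json


def export_records(records, stream):
    # One JSON object per line keeps the dump streamable for large collections.
    for record in records:
        stream.write(json.dumps(record) + "\n")


def import_records(stream):
    # Yield records one at a time so the whole dump never sits in memory.
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# Hypothetical records as they might come out of a source database.
source = [
    {"id": "doc-1", "vector": [0.1, 0.2], "metadata": {"lang": "en"}},
    {"id": "doc-2", "vector": [0.3, 0.4], "metadata": {"lang": "de"}},
]

buffer = io.StringIO()  # stands in for a file on disk
export_records(source, buffer)
buffer.seek(0)
restored = list(import_records(buffer))
```

The vectors survive the round trip unchanged. Metadata fields and filters are where most of the migration effort usually goes, since each platform models them differently.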

For most applications with under 10 million vectors, pgvector is more than enough. You avoid the operational overhead of running a separate database, and you get the benefit of joining vector search results with your relational data. Switch to a dedicated vector database when you hit consistent query latency issues, need billion-scale capacity, or require advanced features like multi-modal search.

Most vector databases support dimensions ranging from 2 to 4,096 or higher. Common embedding sizes are 384 (MiniLM), 768 (BERT-base), 1,536 (OpenAI text-embedding-ada-002), and 3,072 (OpenAI text-embedding-3-large). Higher dimensions generally improve accuracy but increase storage costs and query latency. Some databases like Qdrant and Milvus support quantization to reduce the memory footprint of high-dimensional vectors.
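To illustrate why quantization reduces memory, here is a minimal sketch of scalar quantization: each float is mapped to an integer in the int8 range, with one scale factor per vector. This is a generic textbook version, not the scheme Qdrant or Milvus actually ship, and the function names are hypothetical.

```python
def quantize(vector):
    """Map floats to integers in [-127, 127] using one scale per vector."""
    max_abs = max(abs(x) for x in vector)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(x / scale) for x in vector]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


vector = [0.5, -1.0, 0.25, 0.0]
codes, scale = quantize(vector)
approx = dequantize(codes, scale)

# Rounding error per component is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(vector, approx))
```

Stored as int8, each component takes 1 byte instead of the 4 bytes of a float32, which is roughly a 4x saving in exchange for a small, bounded loss of precision. Python lists don't pack values that tightly, so the snippet shows the mapping rather than the actual storage layout.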

Costs vary widely. Self-hosting pgvector or Qdrant for 1 million 1,536-dimension vectors on a modest cloud VM (8 GB RAM, 4 vCPUs) runs roughly $40-100/month, depending on provider and region. Pinecone's serverless tier is billed by read/write units and storage; light workloads may stay under $50/month. Redis will usually cost more because it stores vectors in RAM. For exact numbers, use each provider's pricing calculator with your specific vector dimensions and query volume.
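A quick back-of-the-envelope calculation helps sanity-check figures like these. The sketch below estimates raw storage for 1 million 1,536-dimension vectors, assuming uncompressed float32 values (4 bytes each). Index structures, metadata, and replication add to this and are not modeled; the function name is hypothetical.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    # float32 values take 4 bytes each; index overhead and metadata are extra.
    return num_vectors * dimensions * bytes_per_value


size_bytes = raw_vector_bytes(1_000_000, 1536)
size_gb = size_bytes / 1e9       # decimal gigabytes
size_gib = size_bytes / 2**30    # binary gibibytes

print(f"{size_bytes:,} bytes = {size_gb:.2f} GB = {size_gib:.2f} GiB")
```

That is about 6.1 GB of raw vector data. If the whole collection has to stay in memory, an 8 GB machine has little headroom left for the index and the operating system. This is part of why RAM-resident stores get expensive quickly, and why quantization or disk-backed storage matters as collections grow.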

All eight databases in this list support real-time inserts, updates, and deletes, so you don't need to rebuild indexes from scratch. However, performance during heavy writes varies. Pinecone and Redis handle concurrent reads and writes smoothly. Milvus and Qdrant rely on background segment compaction under heavy write loads. pgvector inherits PostgreSQL's MVCC model, so updates are transactional, but heavily updated tables may need vacuuming or an occasional reindex to keep query performance optimal.